You have met the IBM 704 before in this series. Five tons of vacuum tubes and magnetic cores. The machine that gave us FORTRAN, Daisy Bell, and the first operational weather forecasts at the Joint Numerical Weather Prediction Unit. Every IBM 704 in the world ran programs that humans wrote – line by line, instruction by instruction, telling the machine exactly what to do.

In the summer of 1957, a psychologist at the Cornell Aeronautical Laboratory in Buffalo, New York, asked a different question.

What if the machine could teach itself?

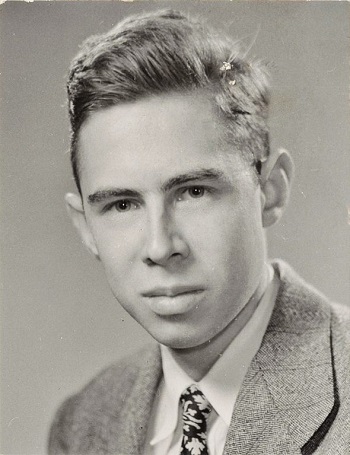

Frank Rosenblatt

Frank Rosenblatt was born on July 11, 1928, in New Rochelle, New York. He was, by every account, a genius – but not the narrow kind. He played classical piano on a grand piano he bought for his house in Brooktondale (though he had a weakness for improvising endlessly on “Three Blind Mice”). He built a personal observatory on a hilltop behind his house, doing most of the masonry himself, and housed a 12-inch Cassegrain telescope inside it. He pursued SETI research. He was active in Eugene McCarthy’s 1968 presidential campaign and protested the Vietnam War. He studied psychology, mathematics, neurophysiology, astronomy, computing, and music.

His colleague George Nagy said it best: “During my career I got to know some very bright persons. Knowing Frank made me appreciate the difference between ‘very bright’ and ‘genius.’”1

In 1946, Rosenblatt graduated from the Bronx High School of Science in New York City. The class of 1945 – one year ahead of him – included another brilliant student named Marvin Minsky. The two knew each other. They would spend the next two decades on opposite sides of the most consequential argument in the history of computing.

Rosenblatt studied at Cornell, earning a B.A. in 1950 and a Ph.D. in psychology in 1956. His dissertation involved building a computer for psychometric analysis using IBM equipment – so he was already fluent in machines before the perceptron. He joined the Cornell Aeronautical Laboratory in Buffalo, a defense-funded aviation research center, as a research psychologist.

And there, on an IBM 704, he built the first machine that could learn.

The Perceptron

The idea came from the brain.

In 1943, neurophysiologist Warren McCulloch and logician Walter Pitts had published a mathematical model of the neuron – a simple unit that receives inputs, weights them, and fires if the total exceeds a threshold. It was elegant but static. The weights were fixed. The neuron couldn’t learn.

Rosenblatt’s insight was to make the weights adjustable. Feed the network an input. If it gets the answer right, do nothing. If it gets the answer wrong, adjust the weights slightly in the direction that would have given the right answer. Repeat thousands of times. The network will learn.

He called it the perceptron.

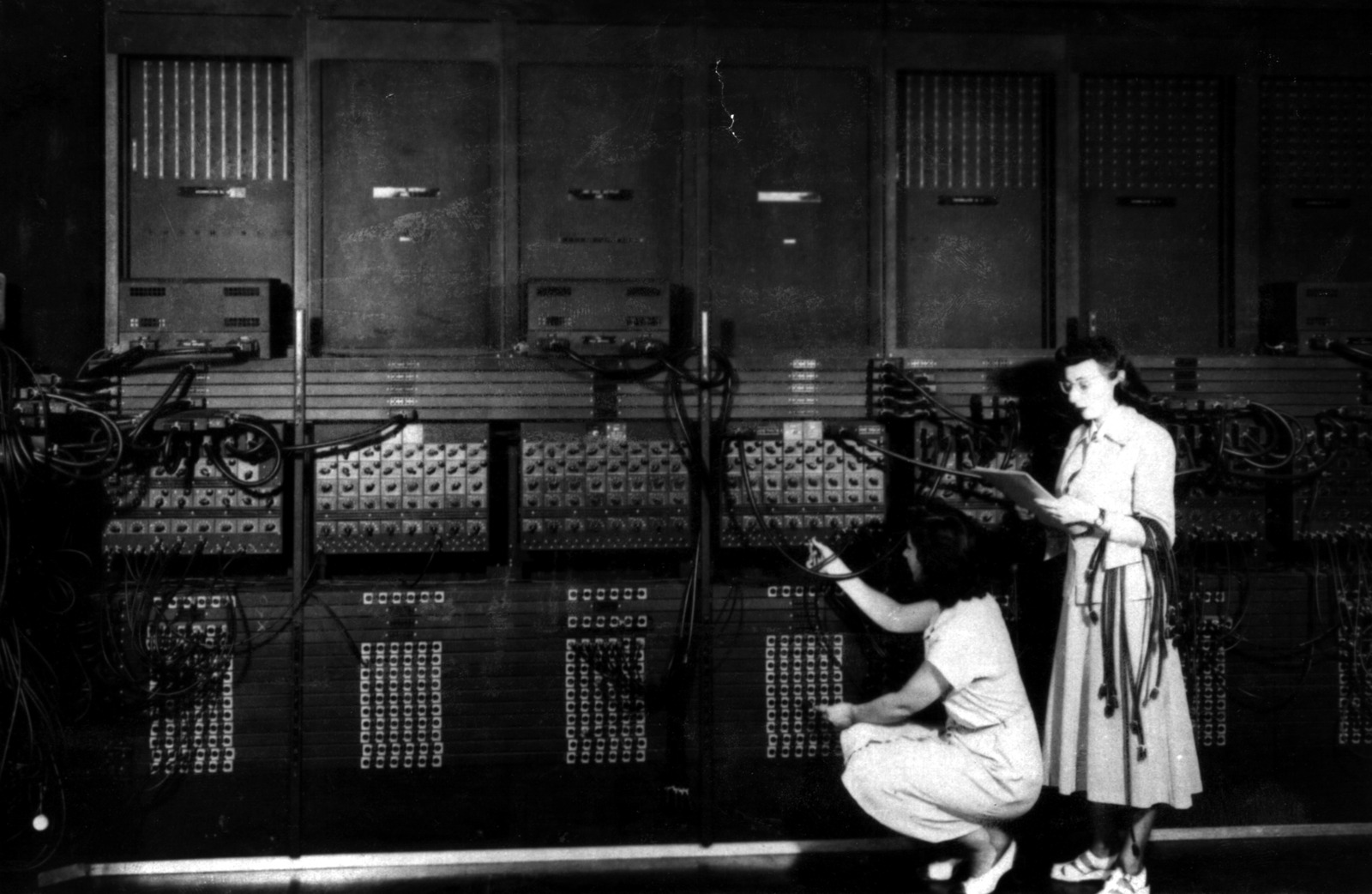

In 1957, Rosenblatt wrote a software simulation and ran it on the IBM 704 at the Cornell Aeronautical Laboratory. The project was called Project PARA – Perceiving and Recognizing Automaton. He fed the machine punch cards with marks on the left or right side. After 50 trials, the IBM 704 had taught itself to tell the difference.

No one had programmed it to distinguish left from right. It had learned the distinction from examples, by adjusting its own internal weights. This was fundamentally different from everything that had come before. Every computer program in history – from ENIAC’s ballistic tables to Phillips’ climate model – was a set of instructions written by humans. The perceptron wrote its own rules.

The Hardware Machine

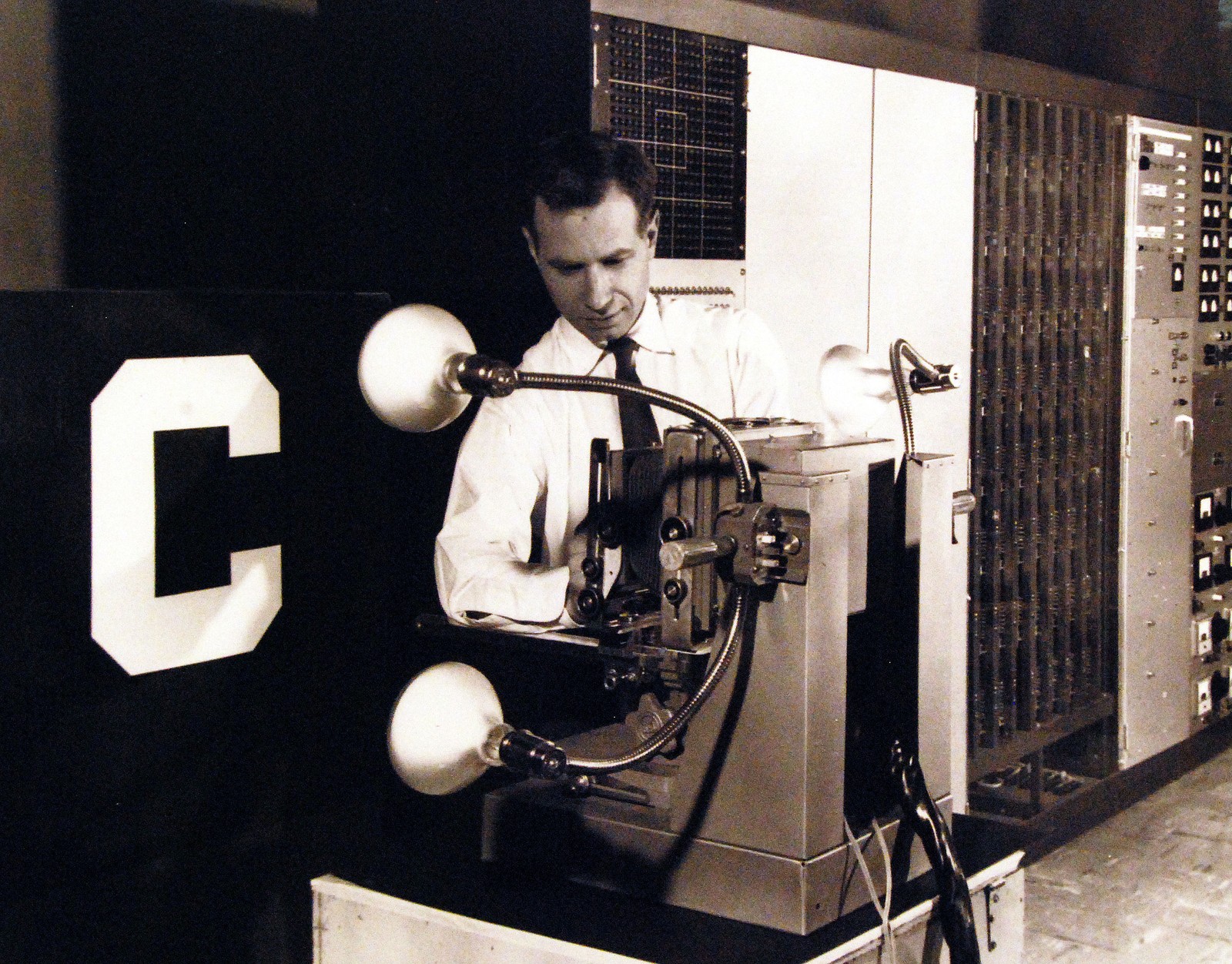

But Rosenblatt wasn’t satisfied with a simulation. He wanted to build the thing in metal.

The Mark I Perceptron, constructed in 1960 with funding from the Office of Naval Research, was an extraordinary object:

- 400 photocells arranged in a 20x20 grid – the machine’s “retina”

- 512 association units in a hidden layer, randomly wired to the photocells

- 8 response units for output

- Connections between layers encoded as potentiometers – physical dials

- Learning performed by electric motors that turned the dials

The machine literally turned knobs to learn. When it got an answer wrong, tiny motors adjusted the resistance on hundreds of potentiometers, changing the weights by fractions of an ohm. When it got an answer right, the motors stayed still. Over hundreds of trials, the dials settled into positions that encoded the correct pattern.

It worked. The Mark I Perceptron achieved 99.8% accuracy distinguishing squares from circles, 100% on squares versus diamonds, and could classify the letters X and E with 100% accuracy after just 20 training examples. It sits today in the Smithsonian National Museum of American History.

The Hype

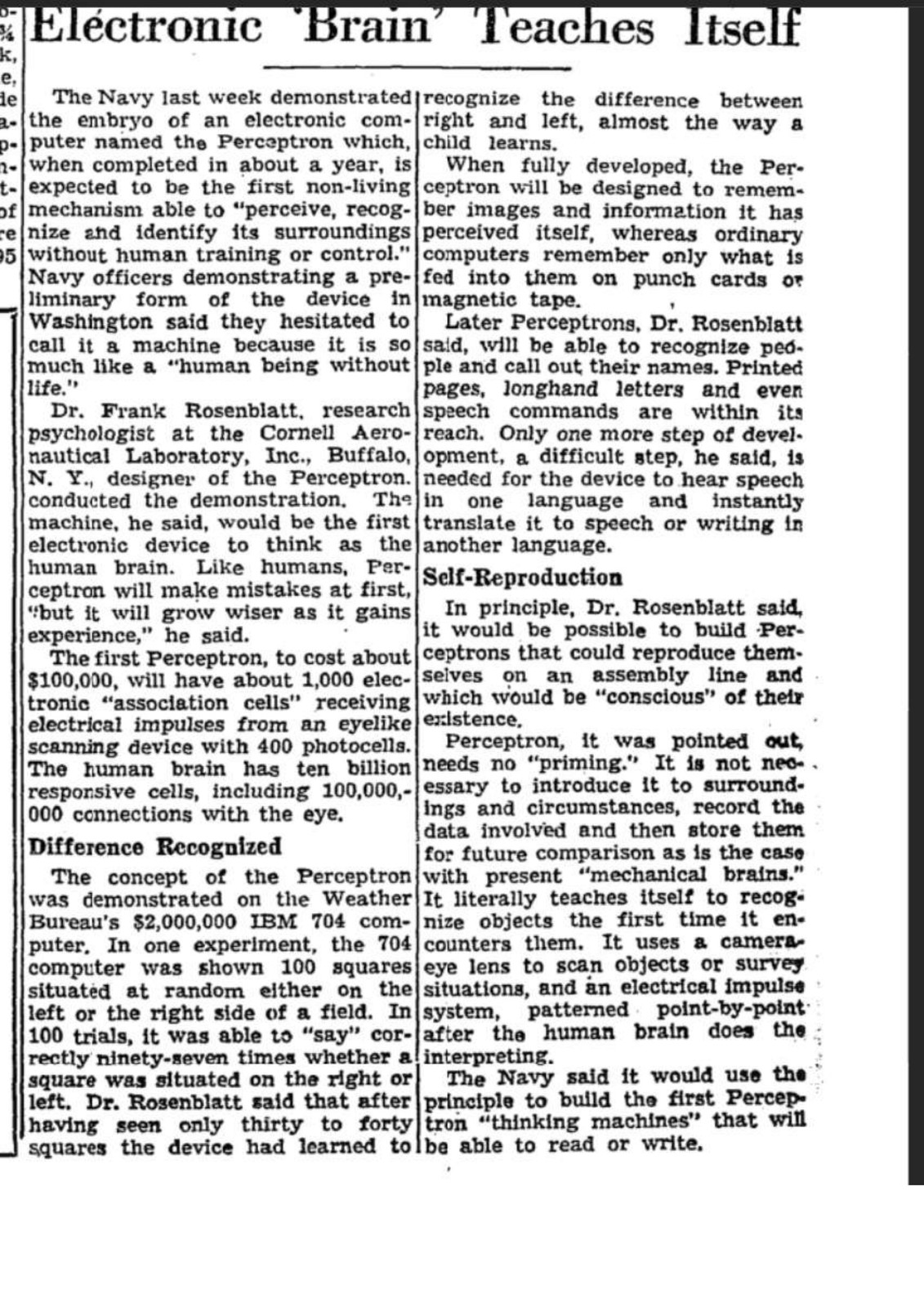

On July 7, 1958, the Office of Naval Research held a press conference in Washington, D.C. Rosenblatt demonstrated the perceptron on the IBM 704. The next day, the New York Times ran a story on page 25:

“NEW NAVY DEVICE LEARNS BY DOING: Psychologist Shows Embryo of Computer Designed to Read and Grow Wiser”

The Times reported the perceptron to be “the embryo of an electronic computer that the Navy expects will be able to walk, talk, see, write, reproduce itself and be conscious of its existence.”2

Rosenblatt himself was bold but not quite that reckless. He called it “the first machine which is capable of having an original idea.”2 He said future perceptrons would be able to recognize people and call out their names. He even said that “in principle, it would be possible to have perceptrons that would reproduce themselves on an assembly line and that would be conscious of their existence.”2

The caveats – “in principle,” “future,” “possible” – disappeared in the headlines. The Cold War was on. Sputnik had launched nine months earlier. The military was hungry for technological miracles to parade before the press, and a machine that could learn sounded like the future.

The hype spiraled. And it created a target.

The Classmate

A thousand miles north of Washington, at MIT, Marvin Minsky watched the perceptron coverage with growing irritation.

Minsky – Bronx Science class of 1945, one year ahead of Rosenblatt – had built his own neural network machine as a graduate student at Princeton in 1951. He called it SNARC (Stochastic Neural Analog Reinforcement Calculator). It used 40 neurons made from vacuum tubes and had adjustable weights. It could learn to navigate a maze. Then Minsky abandoned neural networks entirely and pivoted to symbolic AI – logical reasoning, rule-based systems, programs that manipulated symbols rather than learning from data.

By the late 1950s, Minsky was at MIT, building what would become the MIT AI Lab. He was one of the four organizers of the 1956 Dartmouth Conference – the founding event of artificial intelligence as a field. The Dartmouth vision was symbolic: intelligence as logic, reasoning as symbol manipulation, AI as programming.

The perceptron was the opposite philosophy. Not logic but learning. Not programming but experience. Not symbols but connections.

The two approaches – the two Bronx Science boys – were on a collision course.

Perceptrons (1969)

In 1969, Minsky and his MIT colleague Seymour Papert published a book called Perceptrons: An Introduction to Computational Geometry. It was a mathematical analysis of what single-layer perceptrons could and could not do.

What it proved was technically correct. A single-layer perceptron cannot compute the XOR function – cannot learn to distinguish inputs that are “one or the other but not both.” More broadly, single-layer perceptrons can only classify patterns that are linearly separable – that can be divided by a straight line (or a flat plane, in higher dimensions). Many interesting problems are not linearly separable.

What the book implied was devastating. Minsky and Papert wrote that adding hidden layers might help, but then added: “Virtually nothing is known about the computational capabilities of this latter kind of machine. We believe that it can do little more than can a low order perceptron.”3

This was a prediction. It turned out to be spectacularly wrong.

But the damage was already done. The book became a weapon. AI research groups were competing for limited funding, and the Minsky-Papert critique gave funding agencies an excuse to cut neural network research. Why invest in machines that can’t even learn XOR?

Rosenblatt protested. He had already addressed multilayer networks in his 1962 book Principles of Neurodynamics, which covered four-layer perceptrons with adjustable weights. But no one had solved the credit assignment problem – how to determine which weights in hidden layers to adjust when the output is wrong – and without that solution, multilayer perceptrons couldn’t be trained effectively.

The field called it the XOR problem. It would take 17 years to solve.

Researcher H.D. Block called the Minsky-Papert book “seriously misleading.”4 Bernard Widrow complained they had defined perceptrons too narrowly.4 Jordan Pollack observed that “what was a small proof concerning a global issue not being detectable by local detectors was interpreted by the community as a rather successful attempt to bury the whole idea.”4

Minsky himself later compared his book to Lovecraft’s Necronomicon: “a book known to many, but read only by a few.”5

The Winter

The timing was catastrophic.

In 1966, the ALPAC report had already killed U.S. funding for machine translation, after the Georgetown-IBM experiment on the IBM 701 had overpromised and underdelivered. In 1973, Sir James Lighthill – Lucasian Professor of Mathematics at Cambridge, the chair once held by Newton – published a report for the British government that savaged the entire field of AI. He wrote: “In no part of the field have the discoveries made so far produced the major impact that was then promised.”6

The British government gutted AI funding at all but two universities. DARPA followed suit. The Mansfield Amendment of 1969 required all DARPA-funded research to have direct military applications, and neural network research had none to show.

By 1974, funding for AI – especially neural networks – was nearly impossible to find. The period from roughly 1974 to 1980 is called the first AI winter. The perceptron was buried.

And the man who invented it was already dead.

July 11, 1971

On a Sunday afternoon, Frank Rosenblatt went sailing on Chesapeake Bay. It was his 43rd birthday.

He drowned in a boating accident. The exact circumstances are not well documented. He was eulogized in the U.S. House of Representatives – a mark of how significant his work was considered even then.

He had been acting section chair of Neurobiology at Cornell. He had moved into brain research, investigating memory transfer through brain extracts. He was at the height of his career.

He never saw what happened to his idea – neither the winter that buried it, nor the spring that would eventually vindicate it.

The Vindication

It took 15 years for the first thaw.

In 1982, physicist John Hopfield revived interest in neural networks with a new recurrent network model. In 1986, David Rumelhart, Geoffrey Hinton, and Ronald Williams published a paper in Nature titled “Learning representations by back-propagating errors.” They had solved the credit assignment problem.

Backpropagation works by running the network forward, measuring the error at the output, and then propagating that error backward through the hidden layers, adjusting each weight by a tiny amount proportional to its contribution to the error. It is, in essence, a sophisticated version of exactly what Rosenblatt’s electric motors were doing in 1960 – turning the dials in the direction that reduces the mistake.

The technique had actually been invented earlier – by Paul Werbos in 1974 and Seppo Linnainmaa in 1970 – but Rumelhart’s 1986 paper was the one that the field noticed. Suddenly, multilayer networks could be trained. Minsky and Papert had been wrong. Hidden layers could do far more than “a low order perceptron.”

Then came the revolution.

In 2012, Alex Krizhevsky, Ilya Sutskever, and Geoffrey Hinton entered the ImageNet visual recognition challenge with AlexNet – a deep neural network with eight layers. Every other team used hand-crafted feature engineering. AlexNet used learning. It crushed the competition: 15.3% error versus the runner-up’s 26.2%. Yann LeCun called it “an unequivocal turning point in the history of computer vision.”7

AlexNet was trained on two Nvidia GPUs in Krizhevsky’s bedroom at his parents’ house. The machine that learned had come a long way from 400 photocells and electric motors.

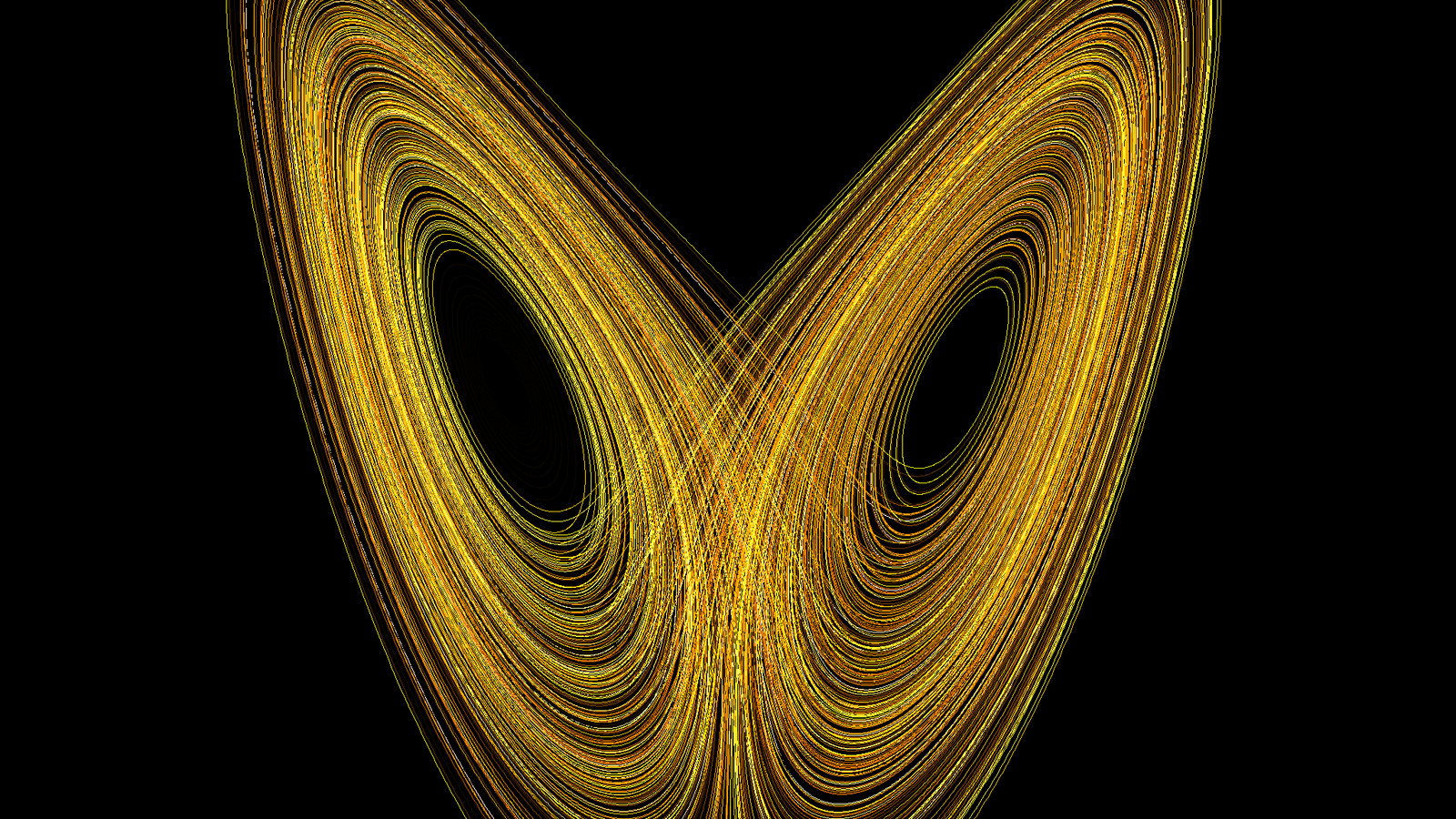

Modern deep learning – the transformers behind ChatGPT, the convolutional networks behind self-driving cars, the recurrent networks behind weather forecasting models like GraphCast and Pangu-Weather – are all direct descendants of Rosenblatt’s perceptron. The core idea is identical: layers of artificial neurons with adjustable weights, learning from data by minimizing error. Every time a neural network adjusts a weight during training, it is doing exactly what the Mark I Perceptron did with its electric motors in 1960.

As the Cornell Chronicle put it in 2019: Frank Rosenblatt was right. He was just sixty years too early.

Two Boys from Bronx Science

The Perceptron story is also the story of two visions of intelligence.

Marvin Minsky and his allies at the 1956 Dartmouth Conference believed that intelligence was about logic – manipulating symbols according to rules, the way a mathematician proves a theorem. Their heirs built expert systems, logical programming languages, and knowledge databases. They believed you could program intelligence from the top down.

Frank Rosenblatt believed that intelligence was about learning – adjusting connections based on experience, the way a brain rewires itself. His heirs built neural networks, deep learning systems, and eventually the large language models that power today’s AI. They believed intelligence had to be learned from the bottom up.

For 50 years, the symbolic camp won the funding wars, the academic appointments, and the narrative. Neural networks were dismissed as a dead end – a mathematical curiosity killed by the XOR problem, buried by Minsky and Papert, forgotten by a field that thought the future was logic.

Then, around 2012, the perceptron’s grandchildren started beating every symbolic system ever built. At everything. Image recognition. Speech recognition. Translation. Game playing. Protein folding. Weather prediction.

The quiet psychologist with the grand piano and the telescope had been right all along. The machine that learned was the future. It just took the rest of the world sixty years to catch up.

Footnotes

References

Rosenblatt and the Perceptron:

- Cornell Chronicle (2019). Professor’s Perceptron Paved the Way for AI – 60 Years Too Soon. Cornell

- Rosenblatt, F. (1958). The Perceptron: A Probabilistic Model for Information Storage and Organization in the Brain. Psychological Review, 65(6), 386-408.

- Rosenblatt, F. (1962). Principles of Neurodynamics: Perceptrons and the Theory of Brain Mechanisms. Spartan Books.

- Smithsonian National Museum of American History. Electronic Neural Network, Mark I Perceptron. Collection

- Dartmouth Libraries. Writing Frank Rosenblatt Back Into AI History. Dartmouth

- Cornell eCommons. Frank Rosenblatt Memorial. PDF

The 1958 Press Conference:

- New York Times (1958, July 8). New Navy Device Learns by Doing. p. 25. AITopics archive

- Principles of Deep Learning. The First AI Press Conference. Article

Minsky and Papert’s Critique:

- Minsky, M. & Papert, S. (1969). Perceptrons: An Introduction to Computational Geometry. MIT Press. (Expanded edition 1988.)

- Olazaran, M. (1996). A Sociological Study of the Official History of the Perceptrons Controversy. Social Studies of Science, 26(3), 611-659.

The AI Winter:

- Lighthill, J. (1973). Artificial Intelligence: A General Survey. Artificial Intelligence: A Paper Symposium, Science Research Council.

- ALPAC (1966). Languages and Machines: Computers in Translation and Linguistics. National Academy of Sciences, Publication 1416.

- Crevier, D. (1993). AI: The Tumultuous History of the Search for Artificial Intelligence. Basic Books.

- McCarthy, J., Minsky, M., Rochester, N. & Shannon, C. (1955). A Proposal for the Dartmouth Summer Research Project on Artificial Intelligence. Reprinted in AI Magazine, 27(4), 2006. PDF

Image Credits:

- Rosenblatt portrait: Cornell University. Wikimedia Commons

- Mark I Perceptron: Smithsonian National Museum of American History. Wikimedia Commons

The Vindication:

- Rumelhart, D., Hinton, G. & Williams, R. (1986). Learning Representations by Back-Propagating Errors. Nature, 323, 533-536. Nature

- Krizhevsky, A., Sutskever, I. & Hinton, G. (2012). ImageNet Classification with Deep Convolutional Neural Networks. Advances in Neural Information Processing Systems, 25.

Previously in this series: The Man Who Forecasted Weather with a Pencil – The Number That Connects Turbulence to War – The Line That Models Cannot Draw (Part 1) – Reading the Sky – The Ghost in the Grid – The Man Who Tamed the Equations – The First Climate Model Had 5 KB of RAM – The Butterfly That Broke the Forecast – From Cables to Chaos – The Swedes Got There First – The Magician Who Told No Secrets – The Blueprint Von Neumann Gave Away

-

Nagy, G. (2011). “Frank Rosenblatt: A Personal Recollection.” In the Cornell eCommons Frank Rosenblatt Memorial volume. PDF. ↩

-

New York Times (1958, July 8). “New Navy Device Learns by Doing,” p. 25. AITopics archive. Rosenblatt’s own quoted remarks are from his Office of Naval Research press conference reported in the same article. ↩ ↩2 ↩3

-

Minsky, M. & Papert, S. (1969). Perceptrons: An Introduction to Computational Geometry. MIT Press, p. 232. ↩

-

Olazaran, M. (1996). “A Sociological Study of the Official History of the Perceptrons Controversy.” Social Studies of Science, 26(3), 611-659. ↩ ↩2 ↩3

-

Crevier, D. (1993). AI: The Tumultuous History of the Search for Artificial Intelligence. Basic Books, p. 105. Minsky’s own remarks on the book’s reception also appear in the expanded 1988 edition of Perceptrons, prologue. ↩

-

Lighthill, J. (1973). “Artificial Intelligence: A General Survey.” In Artificial Intelligence: A Paper Symposium, Science Research Council. ↩

-

LeCun, Y. (2019). Interview quoted in Metz, C., “Turing Award Won by 3 Pioneers in Artificial Intelligence,” New York Times, March 27, 2019. NYT. ↩