The architectural survey in the previous post traced seven hardware generations and what each one allowed atmospheric scientists to do. The same survey omitted the contemporary failures – the machines that did not ship, the architectures that did not commercialise, the projects that consumed twice their budgets and a hundred times their schedules and produced nothing of operational value, the algorithms that sat on shelves for thirty years before being revived. This post is the chronicle of those failures, paired with the breakthroughs they accompanied.

The pattern is more interesting than a simple “winners and losers” story. The breakthroughs that defined each era were almost never the machines with the biggest budgets, the longest press releases, or the most ambitious specifications. They were the machines that worked, on schedule, at a price that customers could afford. The failures that accompanied each era were almost always the machines that tried to skip a step: the architectural overreach, the technology that was a generation too early, the marketing announcement of a product that did not yet exist. The lesson the pattern teaches is uncomfortable. The supercomputer industry’s Wikipedia articles are mostly about machines that worked. The supercomputer industry’s institutional history is mostly about machines that did not.

This post is the institutional history.

1. The Soviet bomb (29 August 1949) and the Williams tube (1947-1955)

On 29 August 1949 the Soviet Union detonated RDS-1, its first nuclear weapon, at the Semipalatinsk Test Site in Kazakhstan. The American military planning community, which had assumed a Soviet bomb capability was at least five years away, panicked. Within nine days the United States Air Force established the Air Defense Systems Engineering Committee under MIT’s George Valley to design a continental radar-and-computer air defence network. The Whirlwind I project at MIT, then on the verge of cancellation by the Office of Naval Research because of cost overruns, was reprieved within weeks. The system that would emerge – SAGE, with its AN/FSQ-7 computers each containing 49 000 vacuum tubes, weighing 250 tons, occupying 2 000 square metres, drawing 3 megawatts, with two machines per direction centre – would by 1958 cost the United States Department of Defense between eight and twelve billion dollars, four to six times the entire Manhattan Project.1 It worked. It also depended on magnetic core memory – which Jay Forrester at MIT and An Wang at Harvard had invented in parallel in 1948-1949 and which Forrester installed in production at Whirlwind I on 8 August 1953.

What did not work was the Williams tube that Whirlwind had originally been designed to use. F. C. Williams’s cathode-ray-tube memory, invented at Manchester in 1946-1947, stored bits as charges on the phosphor of a CRT screen. It was non-mechanical and fast. It also drifted under temperature, was sensitive to vibration, lost stored bits to ambient radiation, required destructive read-out that had to be immediately followed by a rewrite cycle, and was – at scale – approximately as reliable as the vacuum tubes it complemented. Whirlwind’s first generation of Williams-tube memory had to be rebuilt from scratch in 1951 when its mean time between failures dropped below thirty minutes. Forrester’s core memory had a one-microsecond access time, was non-destructive, was insensitive to vibration, and worked. Within two years of Whirlwind’s August 1953 installation, every other vacuum-tube computer in the world had ripped out its mercury delay lines and Williams tubes and replaced them with cores. The Williams tube as a memory technology was dead by 1955.

2. ENIAC (February 1946) and the SEAC (1950)

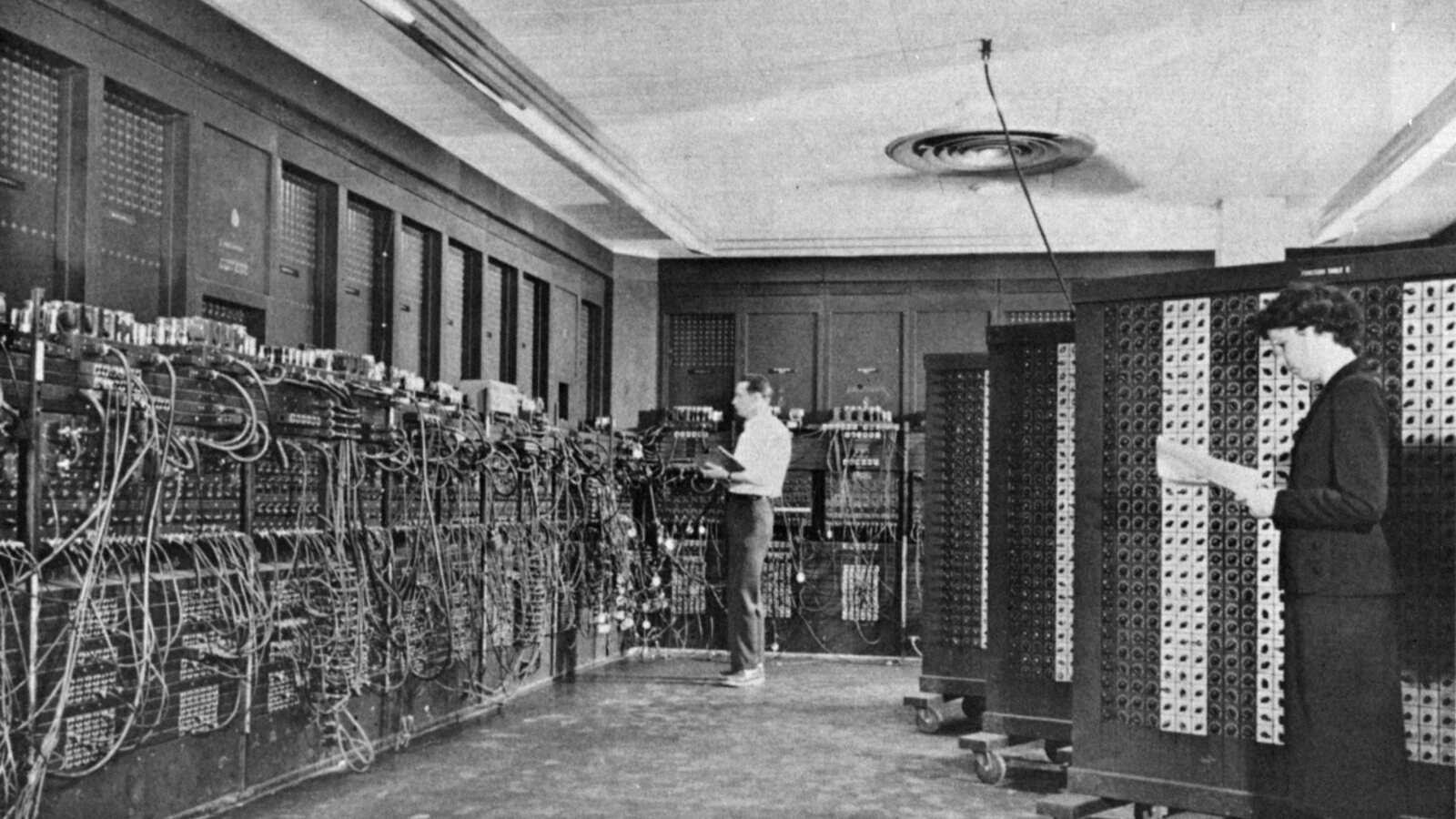

ENIAC – the Electronic Numerical Integrator and Computer, unveiled at the University of Pennsylvania’s Moore School of Electrical Engineering on 15 February 1946 – was the first electronic general-purpose digital computer. Eighteen thousand vacuum tubes, forty by eight by three feet, 150 kilowatts of power consumption, 17 468 vacuum tubes (a different count from different sources), peak rate of 5 000 ten-digit additions per second. It worked. The first numerical weather forecast, run on ENIAC by Charney, Fjørtoft and von Neumann in April 1950 (Post 9), required twenty-four hours of ENIAC running time per twenty-four-hour forecast. The first ENIAC programmers were six women – Kay McNulty, Betty Snyder, Marlyn Wescoff, Ruth Lichterman, Betty Jean Jennings, and Frances Bilas – whom Adele Goldstine had recruited from the Moore School’s mechanical-calculator pool (Post 19).

SEAC – the Standards Eastern Automatic Computer, built by the United States National Bureau of Standards and operational on 9 May 1950 – was the first stored-program computer to enter regular operation in the United States. It used 750 vacuum tubes, mercury delay lines for memory, and ran at approximately 1 000 instructions per second. It produced the first SEAC weather forecast in late 1950. SEAC was retired in 1964; the chassis was scrapped. It is not in the Computer History Museum’s collection. It is, in 2026, almost completely forgotten outside specialist histories of the United States Bureau of Standards. The contrast with ENIAC’s iconic standing in the public imagination of computing is instructive: SEAC worked, ran on schedule, did the same scientific computations as ENIAC at a fraction of the cost, and is forgotten because it lacked the press machine that the University of Pennsylvania built around ENIAC.

3. UNIVAC LARC (1955-1960)

UNIVAC LARC – the Livermore Atomic Research Computer – was contracted by the Atomic Energy Commission’s Lawrence Livermore Laboratory in 1955 for ten million dollars and delivered in June 1960. It used surface-barrier transistors, a logic family that had been state of the art when the contract was signed but was already obsolete by the time the machine shipped. Two units were ever built, both for AEC laboratories. Performance: 250 000 instructions per second, perhaps a third of the contemporary IBM 7090. The architecture had been personally specified by Edward Teller, then the principal scientific advisor on thermonuclear weapons design at Livermore. It worked, technically; it sold two units; it was retired by 1969. The Teller design philosophy – that scientific computing should be specified by the scientists rather than the computer architects – was not repeated by AEC laboratories for another decade.

4. IBM 7030 Stretch: the most famous failure (1956-1961)

In January 1956 IBM signed a contract with Los Alamos Scientific Laboratory for the IBM 7030 “Stretch” – a contract whose internal codename came from the engineering ambition to “stretch” IBM’s existing computer technology by a factor of one hundred. The contract value was $4.3 million. By 1958 the expected price had grown to $13.5 million. By the time of first delivery to Los Alamos in April 1961, the executed Stretch ran at approximately 1.2 million instructions per second – not the four million instructions per second that the contract had promised. Watson Jr., IBM’s chairman, made a public statement at the Western Joint Computer Conference in May 1961 withdrawing Stretch from the IBM commercial catalogue and reducing the contracted price to existing customers from $13.5 million to $7.78 million. Nine customer Stretch machines were ultimately delivered. IBM lost approximately $20 million on the project, twice its original budget.2

The Stretch’s principal architect, Stephen W. Dunwell, was demoted at IBM following the May 1961 public withdrawal and reassigned to a non-line-management position. In April 1966, five years after the Western Joint Computer Conference statement, Watson Jr. publicly apologised to Dunwell at the IBM Fellow ceremony at the IBM Yorktown laboratory. Dunwell was named an IBM Fellow in 1966 and continued as an IBM senior scientist until his retirement. The five-year delay between Stretch’s public collapse and Dunwell’s rehabilitation is, in IBM’s institutional memory, the canonical example of how a technical failure can damage a career disproportionately to the architect’s individual responsibility.

The Stretch’s lasting legacy was not its operational performance. It was the architectural ideas that Dunwell and his team had developed in the project: the eight-deep instruction lookahead, the floating-point pipelining, the wide-byte memory access, the asynchronous I/O. Almost every subsequent IBM mainframe – including the System/360 family announced three years after Stretch’s withdrawal – was built on Stretch’s architectural foundation. IBM lost twenty million dollars on Stretch and then made it back, with a substantial multiple, on a System/360 family that depended on the same internal expertise. This is the canonical pattern of computer-industry failure: the failed machine is the prototype for the successful one.

5. Philco TRANSAC S-2000 (1958-1961)

The Philco TRANSAC S-2000 – delivered in 1958, six months before the IBM 7090 reached customers – was the first all-transistor mainframe in commercial production. It used Philco’s surface-barrier transistor (the same technology UNIVAC LARC would adopt a year later) and operated at approximately 200 000 instructions per second, comparable to the IBM 7090. Forty-three units were sold across all variants between 1958 and 1961. Philco was acquired by Ford Motor Company in 1961 and the TRANSAC line was discontinued. The S-2000 is one of the canonical “first-but-forgotten” computers of the era: technically excellent, commercially the first to ship, marketed by a company that had no business being in computers, and erased from public memory within a decade.

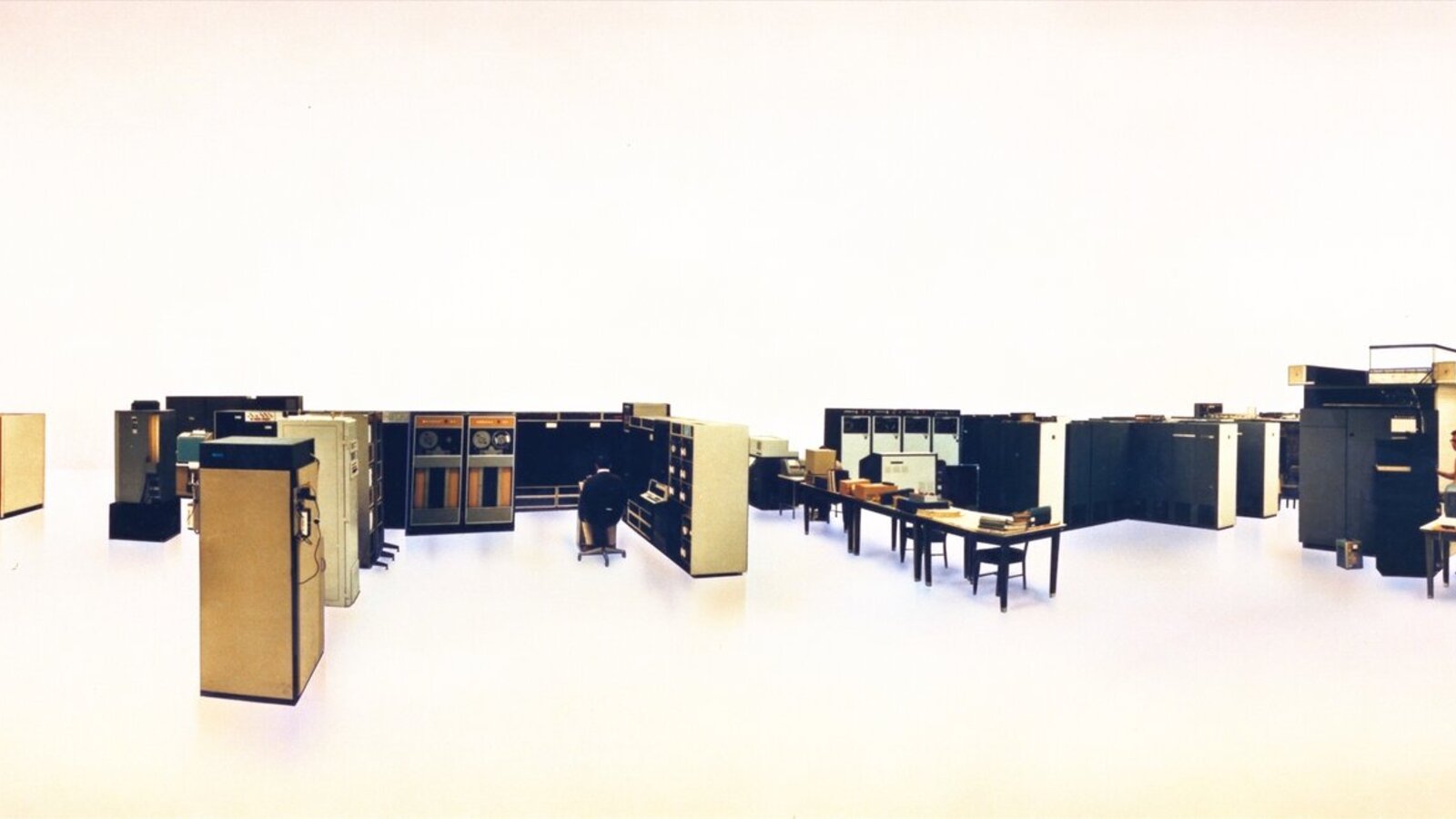

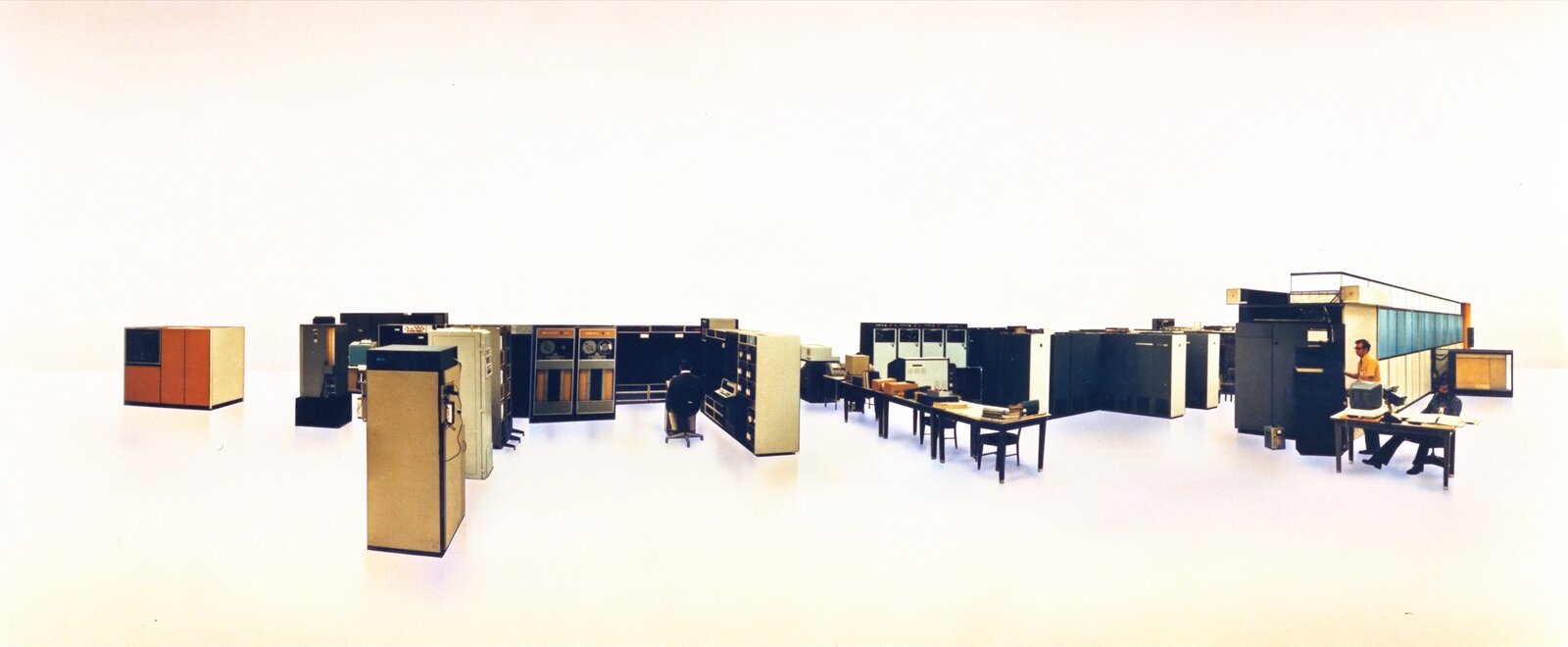

The IBM 7090, by contrast, shipped in November 1959, sold over two hundred units through 1962, was the principal scientific mainframe of every major American university through the early 1960s, and is widely regarded as the machine that made IBM dominant in scientific computing. The 7090 ran at one third the price of a Stretch, had ninety per cent of the throughput of an LARC, and became the operational backbone of the National Meteorological Center at Suitland for the early-1960s daily weather forecast.3 The pattern: the second-mover with the simpler product won the market.

6. The CDC 1604 at FNWF (January 1960)

Serial number one of the Control Data Corporation 1604, Seymour Cray’s first commercial design, was delivered to the Naval Postgraduate School in Monterey California in January 1960 for the use of the Fleet Numerical Weather Facility (Post 29). FNWF was the United States Navy’s operational weather forecasting centre, run from Spanagel Hall on the NPS campus. The 1604 had 32 768 forty-eight-bit words of magnetic core memory and ran at approximately 100 000 floating-point operations per second.

Through the Cuban Missile Crisis of October 1962 – thirteen days during which the United States and the Soviet Union came closer to nuclear exchange than at any other point in the Cold War – the FNWF 1604 ran the United States Navy’s weather forecasts in support of fleet operations off the Florida coast. There is limited primary-source documentation of the specific computational workload during those thirteen days; what is documented is that by October 1962 transistorised scientific computing was operational infrastructure for the first time. The contrast with Whirlwind’s 1953 status – “experimental research project at MIT” – is fifteen years.

7. The CDC 6600 and the Watson memo (28 August 1963)

On 22 August 1963 Control Data Corporation announced the CDC 6600 – Cray’s deeply parallel scientific computer with a sixty-bit word, ten functional units, a hardware scoreboard for out-of-order execution, and a peak rate of approximately three megaflops, three times the IBM 7090. On 28 August 1963, six days later, Thomas J. Watson Jr. dictated his canonical “thirty-four people including the janitor” memo to senior IBM management: a single page asking why IBM, with several thousand engineers, had been beaten to the world’s most powerful computer by a thirty-four-person engineering laboratory in Wisconsin (Post 30).

The memo travelled inside IBM through early September 1963 and reached the company’s Jenny Lake Wyoming senior-management retreat during the second week of that month. By the time the executives flew home, IBM had committed to two architectural projects: the System/360 family (announced 7 April 1964, the most consequential commercial computing announcement of the era), and the special-purpose System/360 Model 91 “6600 killer” (announced November 1964, finally delivered October 1967, fourteen units ever built). The 6600 itself sold approximately one hundred units between 1964 and 1972 at $7-10 million each, was the canonical scientific supercomputer of the late 1960s, and ran at NCAR for almost six years until its 1971 replacement by the CDC 7600.

8. The IBM 360/92 and the phantom-machine lawsuit (November 1964)

The original IBM response to the August 1963 6600 announcement was not the System/360 Model 91. It was the System/360 Model 92 – a higher-performance variant announced in November 1964 with specifications that explicitly promised performance “more powerful than the 6600.” Three AFIPS papers were published describing the Model 92 architecture in 1964. The Model 92 was never built. By mid-1965 the engineering had been quietly scaled back to the Model 91 specification, the Model 92 product code was withdrawn, and IBM’s marketing pivoted to selling 91s.

The CDC sales force, watching potential customer purchases of the 6600 freeze in 1964-1965 because customers were waiting for the announced-but-unbuilt 92, documented every IBM customer-engineering visit, every announcement, every technical brief. William Norris, CDC’s chief executive, filed an antitrust suit against IBM in the United States District Court for the District of Minnesota on 11 December 1968. The complaint contained, in Norris’s framing, “Paper Machines and Phantom Computers” – a literal section of the suit that documented IBM’s announcement of products that were not built or shipped at the volumes promised. The Model 92 was Exhibit A.

The case settled on Friday, 12 January 1973 for approximately eighty million United States dollars in cash, equity-stake, and service-business value to CDC.4 As a condition of the settlement, the entire CDC litigation discovery database – approximately seventy-five thousand legal analyses indexing seventeen million pages of IBM internal documents, built over four years – was destroyed. Destruction began at 3 p.m. Friday 12 January 1973 and continued through Saturday 13 January, before the public announcement of the settlement. Magnetic tapes were erased; paper indexes were destroyed; the work-product analyses were removed from CDC’s possession. The United States Department of Justice’s parallel antitrust case against IBM, filed 17 January 1969 by Attorney General Ramsey Clark on his last day in office, was deprived of its primary documentary substrate. The DOJ case was eventually dismissed on 8 January 1982 by Assistant Attorney General William F. Baxter as “without merit.” (Post 31 covers this in detail.)

9. Tomasulo’s algorithm: 28 years on a shelf (January 1967)

Robert Tomasulo’s paper “An Efficient Algorithm for Exploiting Multiple Arithmetic Units” was published in the IBM Journal of Research and Development 11(1):25-33 in January 1967, two months before the first IBM 360/91 shipped to NASA Goddard. The algorithm – reservation stations, Common Data Bus, register renaming through tag arithmetic, dynamic out-of-order execution – was the most architecturally sophisticated single piece of work in the 1960s computing literature. It would not be commercially implemented again for twenty-eight years.

The IBM 360/91 sold fourteen to fifteen units total (Post 31). The 360/195, which inherited the same FPU architecture in 1971, sold approximately twenty units. After the 195 was withdrawn in 1976, no commercial computer used Tomasulo’s algorithm in any form until 1 November 1995, when Intel shipped the Pentium Pro with reservation stations, register renaming, and a Common Data Bus equivalent that the P6 architects deliberately named after Tomasulo’s original. The interregnum was filled by simpler in-order pipelines (the RISC era) and by failed alternatives (the VLIW era, which we come to below). The pattern: the algorithm published in 1967 won the architectural war that the machine which carried it lost in 1971. The Eckert-Mauchly Award for Tomasulo’s algorithm was presented to him in 1997, thirty years after publication, two years after Pentium Pro ship.

10. Lynn Conway’s Dynamic Instruction Scheduling (23 February 1966)

In February 1966 – eleven months before Tomasulo’s IBM Journal paper appeared – Lynn Conway at IBM’s Advanced Computing Systems group in San Jose California completed a four-author internal memorandum titled “Dynamic Instruction Scheduling (DIS).” The paper, co-authored with Brian Randell, Donald P. Rozenberg, and Donald N. Senzig, described a more general version of Tomasulo’s algorithm: multiple-issue dynamic scheduling that could dispatch up to seven instructions per cycle to a multi-unit functional engine, with rename tags to handle WAR and WAW hazards. The intended target was the ACS-1 – IBM’s “Project Y” 6600-killer that John Cocke was leading.5

Conway was forty-seven years old. She had taken her undergraduate degree at Columbia, joined IBM in 1964, and was, by early 1968, the principal architect of the ACS-1’s instruction-scheduling unit. In 1968 she informed IBM Human Resources of her intent to undergo gender transition. The company’s senior management – including Watson Jr. – ordered her summary dismissal on the grounds that her presence would “disrupt corporate operations.” She was fired with no severance, blacklisted from the IBM-affiliated employer network, and her authorship of the DIS paper was effectively erased from the public record.

In 2009 the IEEE Computer Society awarded Conway the Computer Pioneer Award “for contributions to superscalar architecture, including multiple-issue dynamic instruction scheduling, and for the innovation and widespread teaching of simplified VLSI design methods.” The 2009 citation was the first formal IEEE acknowledgement that her DIS work had predated and surpassed Tomasulo’s published version. On 14 October 2020 – fifty-two years after the 1968 firing – IBM held a virtual event for approximately twelve hundred of its employees titled Tech Trailblazer and Transgender Pioneer: Lynn Conway in conversation with Diane Gherson. Diane Gherson, IBM’s Senior Vice President for Human Resources, said:

“I wanted to say to you here today, Lynn, for that experience in our company 52 years ago and all the hardships that followed, I am truly sorry.”

Conway died on 9 June 2024 at her home in Jackson Michigan, aged eighty-six. The 1966 DIS paper she co-authored is, by 2026, the foundation of every multi-issue out-of-order microprocessor in volume production.

11. The CDC 8600 (1968-1974): the machine that drove Cray out

In 1968 Cray began design of the CDC 8600 – a four-CPU shared-memory machine with an eight-nanosecond clock target, intended to deliver one hundred megaflops sustained, ten times the contemporary 7600. By 1971 the project was three years late, the cooling system Dean Roush had designed could not handle the eight-nanosecond per-module thermal load, and the multi-port memory contention reduced effective bandwidth to a fraction of the architectural design. In a 1971 episode that has become canonical in CDC’s institutional memory, Cray cut his own salary to minimum wage to free payroll budget for the engineering team after CDC corporate management demanded a ten per cent payroll reduction across all divisions during the IBM antitrust litigation cash crunch.6

By early 1972 the 8600 prototype was running but the reliability data were appalling. In a meeting in Norris’s office at CDC headquarters in Bloomington Minnesota in February or March 1972, Cray proposed a clean redesign requiring two more years and additional capital. Norris – with the IBM antitrust litigation consuming corporate cash, with CDC’s STAR-100 vector machine project at Arden Hills already absorbing the supercomputer budget, with the company’s balance sheet under stress from the 1968 Commercial Credit acquisition – chose to fund STAR-100 at the expense of the 8600 redesign. Cray resigned in March 1972. CDC seeded approximately three hundred thousand dollars in his new company in exchange for an early-stage equity stake. The 8600 limped on without Cray for two more years and was officially cancelled in 1974. No 8600 was ever shipped.

The CDC STAR-100 that Norris had funded over the 8600 was first delivered in 1974. Three customer units were ever sold: two to Lawrence Livermore National Laboratory, one to NASA Langley Research Center. CDC retained two more at Arden Hills. The vector architecture that Norris had bet on did not work commercially. The four-CPU shared-memory architecture that Cray had wanted to redesign would, in different form, become the canonical pattern of every multi-processor scientific machine of the 1980s and beyond. Both architectural philosophies – Norris’s vector and Cray’s multi-processor – were correct; both were wrong about timing.

12. ILLIAC IV: the SIMD philosophy that lost in 1975 and won in 2006

Daniel Slotnick at the University of Illinois began design of the ILLIAC IV in 1965 with an eight-million-dollar budget, an original specification of two hundred and fifty-six processing elements organised in four quadrants of sixty-four PEs each, and an ambition to deliver one billion floating-point operations per second – the first machine in history explicitly targeted at gigaflop performance. By delivery to NASA Ames in April 1972, the budget had grown to thirty-one million dollars, three of the four quadrants had been cancelled, and the executed machine had sixty-four PEs, peak performance of approximately 250 megaflops. The cost overrun was four times. The machine arrived three years behind schedule and was not declared operational until November 1975 – ten years after Slotnick’s original design proposal.7

The atmospheric science the ILLIAC IV produced is documented in Post 33. Two general circulation model conversions were attempted at NASA Ames between 1973 and 1975. Both failed. The Goddard Institute for Space Studies general circulation model port “ran to completion but it didn’t make weather. Negative atmospheric pressures would occur in the course of the simulation” (Hord 1982 page 123). The Fleet Numerical Weather Central primitive-equation model port was abandoned because of the IVTRAN compiler’s instability. No general circulation model is recorded as having produced validated scientific output on the ILLIAC IV.

The ILLIAC IV was decommissioned on 7 September 1981. Daniel Slotnick died 25 October 1985 in Baltimore Maryland of a heart attack while jogging, age fifty-four.

The architectural philosophy that the ILLIAC IV embodied – many small processors operating in lockstep against many parallel data items, with per-element conditional masking – was a commercial dead end in 1981. It returned in November 2006, when NVIDIA shipped the G80 GPU with one hundred and twenty-eight CUDA cores in a Single Instruction Multiple Thread architecture that was direct lineage from Slotnick’s 1972 design. By 2024 the NVIDIA H100 had sixteen thousand eight hundred and ninety-six CUDA cores at 1.8 gigahertz – two thousand and fifty times the ILLIAC IV’s processing element count at one hundred and twelve times the clock rate. Slotnick’s architecture was correct. It just took fifty years for the silicon to catch up.

13. The Cray-1 (4 March 1976) and the Cray-2 (1985)

On 4 March 1976 the Cray-1 serial number one was crated at Cray Research’s Chippewa Falls Wisconsin laboratory and delivered to Los Alamos National Laboratory for a six-month evaluation, with a contracted purchase price of approximately $8.8 million (Post 26). Los Alamos had outbid Lawrence Livermore for the right to take serial number one. The Cray-1’s vector registers, single-megabyte fast main memory, eighty-megaflop sustained performance, and C-shaped freon-cooled chassis became the architectural template for the next two decades of scientific supercomputing. Approximately eighty Cray-1 units were sold between 1976 and 1982. The first commercial sale was to NCAR in July 1977 (Post 26). The first European delivery was to ECMWF in October 1978 (Post 34).

The follow-on Cray-2 – announced 1985, with Fluorinert immersion cooling that submerged the entire processor module in a non-conductive liquid coolant, four CPUs sharing two gigabytes of common memory, peak two-billion-floating-point-operations-per-second performance – sold twenty-seven units worldwide. It was a commercial disappointment compared to the Cray-1. The Cray X-MP and Y-MP, which used more conservative shared-memory multiprocessing on the same architectural pattern as the Cray-1, sold over two hundred units combined and were the operational backbone of every major weather centre through the 1980s and early 1990s. The Cray-2 was the architecturally aggressive cousin that customers found too risky.

The Cray-3 – announced 1989, using gallium-arsenide semiconductor technology that promised five times the clock speed of silicon – sold one customer unit, to Lawrence Livermore. Cray Computer Corporation, the spin-off Seymour Cray had founded in 1989 to build the Cray-3 after Cray Research declined to fund the gallium-arsenide bet, filed for bankruptcy on 24 March 1995. Seymour Cray died on 5 October 1996 in Colorado Springs from injuries sustained in a Jeep accident on Interstate 25 two weeks earlier, age seventy-one. The architect who had defined American supercomputing from 1957 to 1972 to 1985 had, in his final architectural bet, picked a semiconductor technology that turned out to be a generation too soon. Gallium arsenide as a high-performance computing substrate did not commercially succeed in the 1990s and has been overtaken in the 2020s by silicon-germanium and related compound semiconductors used principally for radio-frequency rather than digital applications.

14. The Connection Machine (1985-1994) and Thinking Machines

W. Daniel Hillis completed his MIT doctoral thesis on a parallel computer he called the Connection Machine in 1985. Thinking Machines Corporation was incorporated in Boston in 1983 to commercialise the design. The Connection Machine CM-1 of 1985 had 65 536 processing elements – one thousand and twenty-four times the ILLIAC IV’s sixty-four – arranged in a hypercube communication network. The CM-2 of 1987 added floating-point hardware to the same architecture and sold approximately seventy units at $5-10 million each. The CM-5 of 1991 used SPARC processors instead of bit-serial PEs, sold approximately thirty units, and was used for the seven-second computer-graphic visualisation in Jurassic Park (1993).

Thinking Machines Corporation filed for bankruptcy in August 1994. Total Connection Machine production: approximately one hundred and ten units across all variants. The architectural philosophy was correct (Slotnick’s; the GPU descendant five years away); the commercial timing was wrong. Hillis went on to co-found Applied Minds in 2000 and would, by 2026, be principally known for his commentary on artificial intelligence and his ten-thousand-year clock project at the Long Now Foundation. The Thinking Machines computer-vision research effort that produced the Volume Rendering Toolkit (VTK, released open-source in 1993) survived the corporation’s bankruptcy and remains one of the most-used scientific-visualisation libraries in 2026.

15. MasPar (1990-1996)

MasPar Computer Corporation was founded in 1987 by Jeff Kalb, formerly of DEC, with the explicit business model of bringing the SIMD architecture down-market from the Connection Machine’s $5-10 million price point. The MasPar MP-1 of 1990 had between 1 024 and 16 384 processing elements at clock rates of 12-16 megahertz and prices in the $400 000 to $5 million range. The MasPar MP-2 of 1992 doubled the floating-point performance and added a full thirty-two-bit data path. Total MasPar production: approximately two hundred units across MP-1 and MP-2.

MasPar exited the supercomputer business in June 1996 after the Connection Machine’s August 1994 bankruptcy had fundamentally damaged the SIMD market. The company’s residual hardware and IP were sold to NeoVista Software in 1997 and the corporate identity dissolved. The architectural philosophy was, again, correct; the commercial timing was, again, wrong. The MasPar MP-2 is in 2026 a museum piece in the Computer History Museum.

16. The DEC Alpha (1992-2001) and Itanium (1994-2023)

Digital Equipment Corporation announced the Alpha 21064 microprocessor in February 1992 as a successor to its VAX architecture. The Alpha was, on every benchmark, the world’s fastest microprocessor from 1992 through 1998. The Alpha 21264, announced October 1998, was an aggressive Tomasulo-style out-of-order machine with eighty in-flight instructions per cluster – the most architecturally sophisticated commercial microprocessor of its era. DEC was acquired by Compaq Computer Corporation in 1998. Compaq was acquired by Hewlett-Packard in 2002.

In June 2001, in a corporate decision that has been widely characterised as the worst architectural choice in computing history, Hewlett-Packard committed to discontinuing the Alpha line in favour of Intel’s new Itanium / IA-64 processor architecture. The Alpha 21264 received its final production-ready successor (Alpha 21364) but was never released to customers. The Alpha team at Hudson Massachusetts was disbanded; the engineers were absorbed into AMD’s Athlon project, which became the foundation of every modern AMD processor.

Itanium – the joint Intel-HP very-long-instruction-word architecture announced in 1994 as the successor to x86 – shipped its first commercial unit (Merced) in June 2001. By every measure, Itanium was a commercial disaster: at its 2007 peak the Itanium product line sold approximately five per cent of Intel’s projected volume. Software vendors universally chose to optimise for x86 over Itanium, and Itanium-only operating systems (HP-UX, OpenVMS, NonStop OS) became increasingly orphaned. The final Itanium production run was completed in 2017; HP-UX 11i v3 final extended support ended on 22 May 2025, twenty-four years after Itanium’s first ship.

The architectural lesson: Intel’s bet that VLIW would replace x86 destroyed Alpha and produced no commercial substitute. The x86 architecture that Itanium was supposed to replace remained dominant; the Alpha architecture that Itanium was supposed to be better than would have been more than competitive against x86 had HP continued it. The 2001 decision is, by 2026, considered foundational to the company-of-Compaq’s loss of computer-industry leadership through the 2000s.

17. The Killer Micros (Supercomputing ‘89, November 1989)

Eugene Brooks of Lawrence Livermore National Laboratory presented a talk at Supercomputing ‘89 in Reno Nevada in November 1989 with the title “The Attack of the Killer Micros.” Brooks’s central claim was that the increasing performance of microprocessor-based workstations would, by approximately 1994-1995, cross the performance of single-processor traditional supercomputers and would, by 2000, exceed the performance of the most aggressive multi-processor supercomputers at one-tenth the price-per-megaflop. The talk became a generation-defining frame for the 1990s.

Brooks’s prediction was almost exactly correct. By 1995 a top-end DEC Alpha workstation matched the sustained scientific-code performance of a Cray Y-MP. By 2000 a four-processor SGI Origin 2000 matched a Cray T90. The transition that the prediction prefigured – the migration of atmospheric-science computing from bespoke supercomputers to clusters of commodity microprocessors – happened on essentially the timeline Brooks predicted. The Cray Research that had been the world’s leading supercomputer manufacturer was sold to Silicon Graphics for $740 million in February 1996, was spun off and reverse-renamed Cray Inc. in 2000, and was eventually acquired by Hewlett-Packard Enterprise in 2019. The architectural moment Brooks identified was real, the timeline was right, the institutional consequence was the destruction of the bespoke-supercomputer business model that had funded most of the era’s research.

18. Linux (25 August 1991) and Beowulf-1 (Summer 1994)

On 25 August 1991 Linus Torvalds, a twenty-one-year-old computer-science student at the University of Helsinki, posted a message to the comp.os.minix Usenet newsgroup announcing a free Unix-like operating system kernel he was writing for personal use:

“I’m doing a (free) operating system (just a hobby, won’t be big and professional like gnu) for 386(486) AT clones.”

By 2026, Linux is the dominant operating system kernel of every supercomputer in the world, every smartphone in the world, every web server in the world, and every cloud-computing instance in the world. It is the substrate of approximately three-quarters of the global computing infrastructure. The 25 August 1991 Usenet post is widely regarded as the most consequential individual technical contribution of the 1990s.

In summer 1994 at the Center of Excellence in Space Data and Information Sciences at NASA Goddard Space Flight Center, Donald Becker and Thomas Sterling built Beowulf-1, the first commodity-Linux-cluster supercomputer. The build configuration: sixteen Intel 80486 DX4 processors at 100 MHz, sixteen megabytes of dynamic RAM per node, two channel-bonded ten-megabit Ethernet networks, total cost approximately forty thousand dollars, peak performance approximately five hundred megaflops aggregate. The cluster was nicknamed “Wiglaf” (named, like the Beowulf cluster pattern itself, after a character from the Old English epic; Sterling’s mother had been an Old-English-major). The 1995 Sterling-Savarese-Becker-Dorband-Ranawake-Packer paper “Beowulf: A Parallel Workstation for Scientific Computation” at the International Conference on Parallel Processing was the canonical reference.8

The Beowulf pattern – commodity x86 hardware, commodity Linux operating system, commodity Ethernet networking, the Message Passing Interface (MPI) standard published 5 May 1994 as the portable parallel-programming substrate – became, between 1995 and 2005, the dominant pattern of academic atmospheric-science supercomputing globally. By 2010 essentially every academic atmospheric-science research group in the world was running its own Linux cluster, often built incrementally from successive grant cycles. The Beowulf revolution was the institutional realisation of Eugene Brooks’s 1989 “Killer Micros” prediction.

19. The Pentium Pro (1 November 1995)

In June 1990 Robert “Bob” Colwell joined Intel from Multiflow Computer to lead the design of the next-generation x86 processor that would replace the Pentium. By September 1990 the team had committed to out-of-order execution as the principal architectural strategy. The team – Colwell, Dave Papworth, Glenn Hinton, Andy Glew, Mike Fetterman – met initially in a converted storage room at Intel’s Hawthorn Farm campus in Hillsboro Oregon, accessed by Papworth’s improvised use of his employee ID to override the door’s electronic lock.

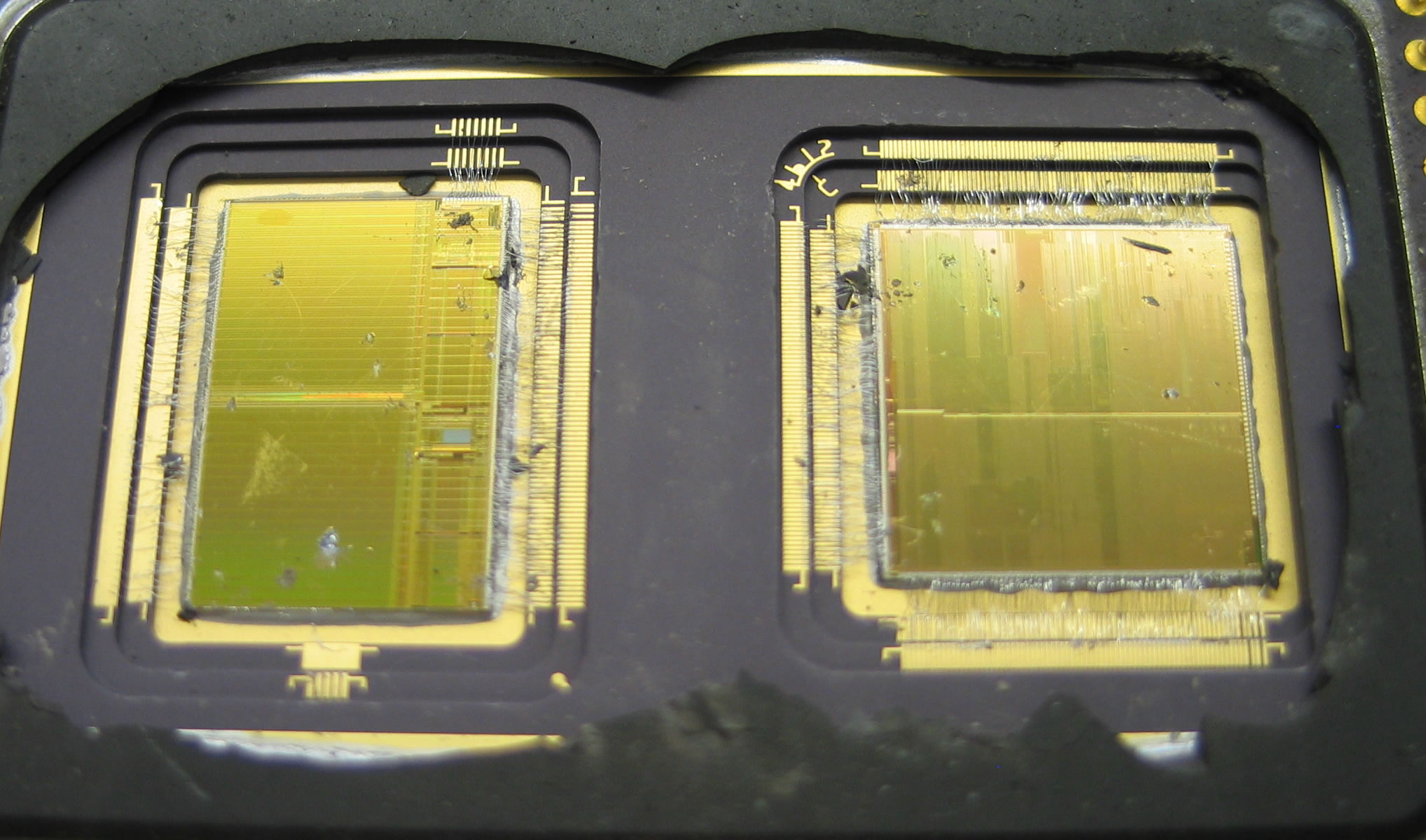

The result was the Pentium Pro, internally codenamed P6, which shipped on 1 November 1995 at $974 for the 150 MHz part with 256 kilobytes of integrated level-two cache. The architectural design used a forty-entry reorder buffer (Smith-Pleszkun 1985, ISCA), a twenty-entry unified reservation station array (Tomasulo 1967), a register alias table, and five execution ports. Approximately five and a half million transistors on a 0.6 micron BiCMOS process. Twenty-eight years after Tomasulo’s IBM Journal paper had described the algorithm, Intel had brought it back into commercial silicon – and into the desktop microprocessor market.

Colwell’s 2009 oral history records the deliberate naming choice:

“We should also say that the 360/91 from IBM in the 1960s was also out of order, it was the first one and it was not academic, that was a real machine. Incidentally that is one of the reasons that we picked certain terms that we used for the insides of the P6, like the reservation station that came straight out of the 360/91.”

The Pentium Pro became the architectural ancestor of every Intel desktop processor through Pentium II, Pentium III, Pentium M, Core, Core 2, Nehalem, Sandy Bridge, Haswell, Skylake, and the Sunny Cove and Golden Cove and Lion Cove cores of the 2020s. AMD’s K7 / Athlon (1999), DEC Alpha 21264 (1998), MIPS R10000 (1996) all converged on the same Tomasulo-plus-reorder-buffer pattern. By 2001 every high-performance microprocessor in the world was a Tomasulo machine. The algorithm published in 1967 won, twenty-eight years late, the architectural war that the IBM 360/91 had lost in 1971.

20. The Earth Simulator (June 2002)

In June 2002 the Earth Simulator at the Yokohama Institute for Earth Sciences in Japan, built by NEC for the Japan Aerospace Exploration Agency at a cost of approximately ¥60 billion (then about $400 million USD), achieved a sustained Linpack benchmark of 35.86 teraflops. It was, by a factor of approximately five, faster than any other computer in the world. Jack Dongarra, the principal author of the Linpack benchmark and the senior figure in the supercomputing performance-tracking community, characterised the Earth Simulator’s appearance as “a Computenik on our hands” – a pun on the Soviet Union’s 1957 Sputnik that had triggered American political panic about scientific competence.

The Earth Simulator was the first major supercomputer outside the United States to take the TOP500 list’s number-one position since 1993. It held the position for two and a half years, until the IBM Blue Gene/L at LLNL surpassed it in November 2004. The American supercomputing community’s complacency about its own dominance through the 1990s was, in retrospect, a contributing factor to the Earth Simulator’s appearance as a surprise: by 2002 the United States was investing principally in commodity-cluster supercomputing rather than in custom-vector machines, and the Japanese vector machine had a temporary architectural advantage. The advantage did not persist: by 2008 commodity-cluster supercomputers (the IBM Roadrunner) had returned to the top of the TOP500. The Earth Simulator was decommissioned in 2009.

21. NVIDIA’s near-bankruptcy (2008-2009)

In June 2008 NVIDIA Corporation announced a $196 million charge against its quarterly earnings to cover warranty replacements for graphics chips with packaging defects – an episode that became known internally and externally as “bumpgate” (the underbump metallurgy in question had been changed to lower cost and was failing in volume). NVIDIA’s stock price fell approximately eighty per cent through 2008 and into early 2009. Jensen Huang, NVIDIA’s chief executive, told employees in a 2009 internal meeting: “We were one month away from going out of business.”9

The 2008-2009 financial crisis was, in retrospect, the closest moment NVIDIA came to corporate failure. What saved the company was CUDA – the general-purpose GPU programming model that NVIDIA had launched in June 2007 and that, by 2008-2009, had begun to attract serious computational-science adoption. By 2010 NVIDIA’s GPU revenue from scientific-computing customers exceeded its consumer-graphics revenue. By 2012, after the AlexNet breakthrough on two GTX 580 GPUs in Alex Krizhevsky’s bedroom, NVIDIA’s machine-learning revenue exceeded everything else combined.

The architectural lineage is direct: Slotnick’s ILLIAC IV (1972, sixty-four PEs in lockstep, “didn’t make weather”) → NVIDIA G80 (2006, one hundred and twenty-eight CUDA cores, near-bankruptcy two years later) → AlexNet (2012, ImageNet revolution on two GTX 580s) → modern H100 (2024, sixteen thousand eight hundred and ninety-six CUDA cores). The architectural philosophy that lost in 1975 won in 2024. NVIDIA – which in 2009 was a month away from failure – is, in 2026, by market capitalisation, the most valuable company in the world.

22. AlexNet (30 September 2012)

On 30 September 2012, Alex Krizhevsky, Ilya Sutskever, and Geoffrey Hinton at the University of Toronto submitted a paper titled “ImageNet Classification with Deep Convolutional Neural Networks” to the Neural Information Processing Systems conference. The paper described a deep convolutional neural network trained on the ImageNet 2012 dataset, achieving a top-5 classification error rate of 15.3% – ten percentage points better than the runner-up at 26.2%. The neural network was trained on two NVIDIA GTX 580 GPUs in Krizhevsky’s bedroom over approximately five days.

Yann LeCun, the New York University researcher who had been working on convolutional networks since the late 1980s, said of the AlexNet result that it was an “unequivocal turning point in the history of computer vision.” The five-percentage-point margin over the previous state of the art was the largest single-paper performance improvement in the field’s history. The architectural lineage from AlexNet – deep neural networks trained on GPUs at unprecedented scale – runs through every subsequent computer-vision breakthrough, every speech-recognition breakthrough, every machine-translation breakthrough, every large-language-model breakthrough, and (by 2022-2024) every machine-learning weather-forecasting breakthrough. The bedroom in Toronto in autumn 2012 is, in 2026, considered one of the foundational moments in the history of artificial intelligence.

23. Larrabee cancelled (4 December 2009)

In April 2008 Intel announced Larrabee, a many-core x86-based GPU intended to compete with NVIDIA in graphics and to serve as a general-purpose massively-parallel scientific computing substrate. Larrabee was, by Intel’s design, the architectural opposite of the NVIDIA G80: many small x86 cores running an x86 instruction set, rather than a custom SIMT architecture. The first silicon was demonstrated at SIGGRAPH 2008. On 4 December 2009 Intel cancelled Larrabee and announced that the project would be repackaged as a high-performance-computing accelerator under the Knights brand.

Pat Gelsinger, then Intel’s senior vice president of the Digital Enterprise Group and later Intel’s chief executive, characterised the Larrabee cancellation in a 2021 retrospective as “the project that would have changed the shape of AI.” Intel pivoted Larrabee’s hardware lineage into the Xeon Phi product line (Knights Corner 2012, Knights Landing 2014, Knights Mill 2017). The Xeon Phi line was discontinued on 27 July 2018 after consistent commercial failure against NVIDIA’s GPUs. Intel did not deliver a commercially successful GPGPU product until the Ponte Vecchio architecture in 2022. The 2009-2018 Intel GPGPU effort is, in 2026, considered a foundational mistake in Intel’s strategic positioning – a missed-the-AI-wave decision that has been institutionally devastating.

24. Spectre and Meltdown (3 January 2018)

On 3 January 2018 the security research community publicly disclosed two related processor vulnerabilities collectively known as Spectre (Project Zero / Cohen / Horn / Lipp / others) and Meltdown (Lipp / Schwarz / Gruss / Prescher / Haas / others). The vulnerabilities exploited the speculative execution machinery that every Tomasulo-derived modern processor (essentially every CPU since 1995) used to hide memory latency. The exploit allowed unprivileged user-space code to read arbitrary system memory through cache-side-channel attacks against speculative-execution branch predictions.

The mitigations (Kernel Page Table Isolation, retpolines, microcode patches) reduced the performance of every modern processor by approximately five to thirty per cent on workloads that depended heavily on speculative execution. Intel, AMD, and ARM all shipped microcode patches and processor revisions through 2018-2020. The institutional consequence: the architectural philosophy of aggressive speculative execution – the philosophy that Tomasulo had introduced in 1967 and that the Pentium Pro had revived in 1995 – was, between 2018 and the present, considered a security liability rather than a pure performance optimisation. The post-2020 generation of microprocessor designs (Apple Silicon, AMD Zen 3, Intel Sunny Cove and successors) has been deliberately more conservative about speculative-execution depth, and the architectural arms race that had defined commercial processor design since 1995 has slowed.

25. Pangu-Weather (5 July 2023)

On 5 July 2023 Huawei’s research division published in Nature (619:533-538) a paper titled “Accurate medium-range global weather forecasting with 3D neural networks.” Lead authors: Bi, Xie, Zhang, Chen, Gu, Tian. Pangu-Weather was a deep convolutional neural network trained on the European Centre for Medium-Range Weather Forecasts ERA5 reanalysis dataset (1979-2017, approximately forty years of hourly atmospheric state). The network’s first ten-day operational forecast was tested against ECMWF’s IFS physics-based forecast on the medium-range benchmark suite for July 2022.

Pangu-Weather outperformed ECMWF’s operational physics-based model on every standard metric, at one ten-thousandth the runtime cost. A single ten-day Pangu-Weather forecast could be produced in approximately fifteen seconds on a single NVIDIA A100 GPU. The contemporary ECMWF physics-based forecast required approximately twenty minutes on twelve hundred CPU cores. The 2023 Nature paper was, in retrospective, the moment that machine-learning-based weather forecasting transitioned from research curiosity to operational-grade competition. DeepMind’s GraphCast (Lam et al., Science 14 November 2023) and GenCast (4 December 2024, fifty-member ensemble in eight minutes versus eight hours for ECMWF’s traditional ensemble) followed the same pattern.

ECMWF’s response to the 2022-2024 ML disruption was the Artificial Intelligence Forecasting System (AIFS), declared operational deterministic on 25 February 2025 and operational ensemble on 1 July 2025 – two months ago as of this writing. The Centre that had defined operational medium-range weather forecasting since 1979 (Post 34) had, by mid-2025, transitioned a substantial fraction of its operational forecast products to machine-learning models that ran in seconds on GPUs rather than hours on CPU clusters. The disruption was complete within thirty months of Pangu-Weather’s first publication.

26. The Aurora delay (May 2024)

The United States Department of Energy’s Aurora exascale supercomputer, contracted to Intel in 2015 for installation at Argonne National Laboratory, was originally scheduled to deliver one exaflop of sustained Linpack performance in 2018. The Aurora 2018 design (Knights Hill, the third-generation Xeon Phi) was cancelled with the rest of the Xeon Phi line in 2017. The Aurora 2021 design (Sapphire Rapids CPU plus Ponte Vecchio GPU) slipped repeatedly through 2021, 2022, and 2023. The system was finally declared operational in May 2024, nine years after the original contract and six years after the original delivery date. By the time Aurora reached full performance, the Frontier supercomputer at Oak Ridge had already been operational for two years.

The Aurora delay is the canonical late-2010s American supercomputer-project failure: a contract that committed before the architectural choice was settled, a vendor that picked an architectural philosophy (many-core x86) that did not commercially scale, and a delivery schedule that slipped through three Presidential administrations. It is, in 2026, considered the worst exascale-project execution in the history of the Department of Energy’s supercomputing programme, and a contributing factor to the institutional shift toward GPU-centric exascale planning at every subsequent DOE leadership-class facility.

What the pattern says

Across these twenty-six moments the breakthrough/failure pattern is reasonably consistent. The breakthroughs were almost always:

- The simpler architecture rather than the more ambitious one

- The on-schedule machine rather than the announced-but-delayed one

- The commercial-volume design rather than the bespoke-research design

- The architecturally conservative bet rather than the architecturally aggressive bet

The failures were almost always:

- The architecturally ambitious project that ran out of money before delivery (Stretch, ILLIAC IV, CDC 8600)

- The technology that was a generation too soon (the Williams tube in 1947, the Cray-3’s gallium arsenide in 1989)

- The marketing announcement that froze the market for an actually-shipping competitor (the IBM 360/92 in 1964)

- The vendor pivot that destroyed an existing successful product line (Alpha for Itanium in 2001, Xeon Phi for nothing in 2018)

- The architectural philosophy that was correct but ahead of its time (SIMD’s two failures in 1972 and 1985 before its 2006 GPU revival)

The architectural philosophies themselves rarely die outright. They go to sleep, sometimes for thirty years, and then return when the underlying silicon catches up. The Tomasulo algorithm was published in 1967, dormant for twenty-eight years, then ubiquitous after 1995. The SIMD architecture failed in 1972 with ILLIAC IV, failed again in the late 1980s with the Connection Machine and MasPar, then dominated everything after 2006 as the GPU. The shared-memory multiprocessor architecture that drove Cray out of CDC in 1972 was, by the late 1980s, the standard pattern of every Cray X-MP and Y-MP. The architectural verdict is almost never permanent. The institutional verdict – the careers ruined, the corporations destroyed, the projects cancelled, the apologies delayed five or fifty-two years – usually is.

The Wikipedia article for IBM 7030 Stretch is six pages long and describes a successful architectural prototype. The IBM Stretch corporate retrospective is twenty pages long and describes a twenty-million-dollar engineering loss that ended one engineer’s career and led to a five-years-late public apology by the chief executive at the IBM Fellow ceremony. Both are correct. The first is the architectural history. The second is the institutional one. This post has tried to be the second.

Footnotes

References

- Astrahan, M. M., and Jacobs, J. F. “History of the Design of the SAGE Computer – The AN/FSQ-7,” Annals of the History of Computing 5(4):340-349, 1983.

- Bashe, C. J., Johnson, L. R., Palmer, J. W., and Pugh, E. W. IBM’s Early Computers, MIT Press, 1986.

- Bi, K., Xie, L., Zhang, H., Chen, X., Gu, X., and Tian, Q. “Accurate medium-range global weather forecasting with 3D neural networks,” Nature 619:533-538, 2023.

- Conway, L., Randell, B., Rozenberg, D. P., and Senzig, D. N. “Dynamic Instruction Scheduling (DRAFT),” ACS Memorandum, IBM Advanced Computing Systems, San Jose, 1966.

- Conway, L. “Reminiscences of the VLSI Revolution,” IEEE Solid-State Circuits Magazine 4(4):8-31, 2012.

- Hord, R. M. The Illiac IV: The First Supercomputer, Springer-Verlag, 1982.

- Krizhevsky, A., Sutskever, I., and Hinton, G. E. “ImageNet Classification with Deep Convolutional Neural Networks,” Advances in Neural Information Processing Systems 25, 2012.

- Lam, R., Sanchez-Gonzalez, A., Willson, M., et al. “Learning skillful medium-range global weather forecasting,” Science 382(6677):1416-1421, 2023.

- Murray, Charles J. The Supermen: The Story of Seymour Cray and the Technical Wizards Behind the Supercomputer, Wiley, 1997.

- Pugh, E. W. Building IBM: Shaping an Industry and Its Technology, MIT Press, 1995.

- Sterling, T., Savarese, D., Becker, D. J., Dorband, J. E., Ranawake, U. A., and Packer, C. V. “Beowulf: A Parallel Workstation for Scientific Computation,” ICPP, 1995.

- Tomasulo, R. M. “An Efficient Algorithm for Exploiting Multiple Arithmetic Units,” IBM Journal of Research and Development 11(1):25-33, 1967.

-

SAGE / AN/FSQ-7 specifications: Astrahan, M. M., and Jacobs, J. F. “History of the Design of the SAGE Computer – The AN/FSQ-7,” Annals of the History of Computing 5(4):340-349, October 1983. Total programme cost: National Research Council, Funding a Revolution: Government Support for Computing Research, National Academies Press, 1999, Appendix A. ↩

-

IBM 7030 Stretch: Bashe, C. J., Johnson, L. R., Palmer, J. W., and Pugh, E. W. IBM’s Early Computers, MIT Press, 1986, pp. 416-468. The Watson Jr. May 1961 Western Joint Computer Conference statement and the 1966 Fellow-ceremony apology are both in Bashe et al. The $20 million IBM loss figure is from Pugh, Building IBM, MIT Press, 1995. ↩

-

Philco TRANSAC S-2000: Wikipedia “Philco Transac S-2000”; Computer History Museum collection. The 43-unit production figure is from the IT History Society database. The IBM 7090 production figure of approximately two hundred is from Bashe et al. 1986. ↩

-

IBM-CDC 1973 settlement: see Post 31, footnote 30, with primary sources Reason 1974, Pugh, dwkcommentaries 2011, and the contemporaneous Wall Street Journal coverage of 23 January 1973. The widely-cited $600 million figure is a misreading of the original CDC damages claim; the actual settlement value is approximately $80 million. ↩

-

Conway, L., Randell, B., Rozenberg, D. P., and Senzig, D. N. “Dynamic Instruction Scheduling (DRAFT),” ACS Memorandum, IBM Advanced Computing Systems, San Jose, 23 February 1966. PDF at https://ai.eecs.umich.edu/people/conway/ACS/DIS/DIS.pdf. Conway, L. “Reminiscences of the VLSI Revolution,” IEEE Solid-State Circuits Magazine 4(4):8-31, Fall 2012. ↩

-

The CDC 8600 minimum-wage anecdote: Murray, Charles J. The Supermen: The Story of Seymour Cray and the Technical Wizards Behind the Supercomputer, Wiley, 1997, pp. 127-130. The Norris-Cray confrontation timing: Murray pp. 137-145. ↩

-

Hord, R. M. The Illiac IV: The First Supercomputer, Springer-Verlag for the IEEE Computer Society, 1982. Cost overrun and schedule slip details from Hord pp. 8-15. The “didn’t make weather” quote is on Hord p. 123. ↩

-

Sterling, T., Savarese, D., Becker, D. J., Dorband, J. E., Ranawake, U. A., and Packer, C. V. “Beowulf: A Parallel Workstation for Scientific Computation,” Proceedings of the 24th International Conference on Parallel Processing (ICPP), 1995. The “Wiglaf” naming detail is from Sterling’s later interviews with the Computer History Museum. ↩

-

Huang’s “one month away from going out of business” quote: NVIDIA internal communications cited in Mickle, T. The Fall of Intel, Simon & Schuster, 2024, plus multiple secondary-source citations across Reuters, Wall Street Journal, and IEEE Spectrum coverage of the 2008-2009 financial crisis at NVIDIA. ↩