Some time in the spring or summer of 1975, in the central computer facility of the National Aeronautics and Space Administration’s Ames Research Center on the south end of San Francisco Bay, a large air-cooled cabinet of integrated circuits called the ILLIAC IV ran, to completion, a converted version of the Goddard Institute for Space Studies general circulation climate model. The simulation produced what its operators afterwards described as negative atmospheric pressures.1

The Goddard model was, in its previous existence, a derivative of the UCLA general circulation model that Yale Mintz and Akio Arakawa had built at the University of California in the early 1960s. Mintz had carried the model east to NASA’s Manhattan campus when he moved to GISS in the late 1960s; James Hansen would later inherit it. The 1975 conversion to the ILLIAC IV was the most ambitious atmospheric-science computation that anybody had attempted to port to the most ambitious supercomputer of the 1960s. The port did not work. R. Michael Hord, the project’s official historian, recorded the failure on page 123 of his 1982 book The Illiac IV: The First Supercomputer in fourteen plain words:

“the Illiac version of the code ran to completion but it didn’t make weather.”2

This is the story of the machine that didn’t make weather.

Where Post 32 left off

Our previous post ended at NCAR’s Mesa Laboratory in Boulder, where the CDC 7600 serial number twelve ran from 3 May 1971 to 1 April 1983 – twelve years – carrying the Kasahara-Washington general circulation model and the early NCAR Community Climate Model. The 7600 was the canonical scientific supercomputer of the early 1970s: a single fast central processor with nine deeply-pipelined functional units, a two-level main-memory hierarchy of small core memory and large core memory, a C-shaped chassis cooled in freon, about seventy-five units sold worldwide, the architectural philosophy that Seymour Cray would carry from CDC to Cray Research and into the Cray-1 of 1976.

The 7600 was not the only architectural philosophy on offer in 1971-1972, however. Eight hundred and eighty miles south-west of Boulder, on Moffett Field beside the south end of San Francisco Bay, NASA Ames Research Center took delivery in April 1972 of a different machine entirely. Where Cray’s 7600 had nine deeply-pipelined functional units running in parallel under one instruction stream, the ILLIAC IV had sixty-four arithmetic processors running in lockstep under one instruction stream: a Single Instruction Multiple Data architecture. Where the 7600 was a single fast scalar machine, the ILLIAC IV was sixty-four slow processors broadcasting the same operation to sixty-four pieces of data each cycle. Where the 7600 cost five to fifteen million dollars, the ILLIAC IV cost approximately thirty-one million. Where the 7600 sold seventy-five units, the ILLIAC IV sold one. Where the 7600 ran the world’s research-supercomputer workload from 1969 to 1976, the ILLIAC IV did not produce its first useful operational result until November 1975 – three and a half years after delivery – and was decommissioned six years later, on 7 September 1981.3

The architectural philosophy that the ILLIAC IV embodied – many small processors running in lockstep against many parallel data items – was a commercial dead end in 1981. It would re-emerge, decades later, as the dominant computational substrate of the 2020s: the architecture of every modern graphics processor, of every machine-learning accelerator, of every gigaflop-per-watt mobile compute engine. The lineage from the ILLIAC IV’s sixty-four processing elements at NASA Ames in 1975 to the more than fourteen thousand CUDA cores of an NVIDIA H100 in 2024 is direct. It was just very, very slow.

Daniel Slotnick

The architect of the ILLIAC IV was Daniel Leonid Slotnick, born 1931 in New York City. He took his Bachelor of Arts at Columbia in 1951, his Master of Arts and Doctor of Philosophy in mathematics at New York University, and joined IBM at Poughkeepsie in 1957. Three years later, in 1960, he moved to Westinghouse Electric Corporation in Pittsburgh, where between 1960 and 1965 he led the design of two prototype parallel computers: SOLOMON I (built 1962) and SOLOMON II (built 1963). Both were Single Instruction Multiple Data array processors – in Slotnick’s own framing in the 1962 AFIPS Fall Joint Computer Conference paper, “a parallel network computer of one thousand twenty-four arithmetic units providing a single broadcast instruction to all units simultaneously.” The SOLOMON machines were too small to do useful work and Westinghouse cancelled the programme in 1965.4

In 1965 Slotnick joined the University of Illinois at Urbana-Champaign and almost immediately wrote the architectural proposal that would become the ILLIAC IV. The proposal targeted a much larger version of the SOLOMON SIMD architecture: two hundred and fifty-six processing elements organised as four quadrants of sixty-four PEs each, all running in lockstep, with a peak rate of approximately one billion floating-point operations per second. This was the first machine-design proposal in history that explicitly targeted gigaflop performance. The technology that would have allowed it – emitter-coupled-logic integrated circuits, thin-film magnetic memory, microsecond-latency cross-bar switching networks – did not yet exist in 1965. Slotnick proposed to invent it.

The proposal won funding from the United States Department of Defense Advanced Research Projects Agency through a contract administered by the Air Force Rome Air Development Center. Burroughs Corporation of Detroit, Michigan, was selected in 1967 as the prime industrial contractor and built the system at its Paoli Pennsylvania plant. Texas Instruments built the ECL integrated circuits at Dallas; Fairchild Semiconductor built the bipolar logic; Burroughs built the thin-film memory. The University of Illinois was to host and operate the assembled machine in a purpose-built building – the Digital Computer Laboratory annexe – on the Urbana campus. The original budget was eight million dollars and the original schedule called for first operational use in 1969 or 1970.5

Neither held. The cost overran from eight million dollars to twenty-four million dollars by January 1970, and to approximately thirty-one million dollars by the time of delivery in April 1972 – a fourfold increase. The schedule slipped from 1969 first operational use to 1971 to 1972 to, eventually, November 1975 first declared operational. Slotnick later attributed the cost overruns to “the cost-plus-fixed-fee environment in the company’s defense business.” The decision to build only one quadrant of the planned four, made in 1969 to control cost overruns, reduced the planned peak rate from one billion floating-point operations per second to about two hundred and fifty million, and the planned commercial scientific market for the machine – already small – to almost zero.6

The intervening years were not kind in other ways either.

Vietnam at Urbana

On 6 January 1970 the University of Illinois student newspaper The Daily Illini ran a story under the headline “Department of Defense to employ UI computer for nuclear weaponry.” The story reported that two-thirds of ILLIAC IV operating time would be reserved for Department of Defense classified projects, and only one-third for university research. The story was technically true; ARPA’s funding agreement reserved that fraction of the machine’s wall-clock time for federal-priority workloads. The story was also extraordinarily ill-timed.7

In the political climate of January 1970, anti-Vietnam War protest at United States universities had been building since 1968 and had begun to acquire a militant fringe. The Military Procurement Act of 1970, signed by Richard Nixon on 19 November 1969, had specifically pressured Department of Defense funding agencies to demonstrate “direct, apparent, and clearly documented” military relevance for every research dollar – which forced the previously-quiet ARPA-Illinois ILLIAC IV partnership into public sight. Through the spring and summer of 1970:

On 24 February 1970 the Reserve Officers’ Training Corps lounge in the Armory at Urbana-Champaign was firebombed. Two more firebombs were found in Altgeld Hall the same week.

On 9 May 1970, five days after the Kent State shootings, University of Illinois protesters declared a “day of Illiaction,” with rallies on the Quad and a march to the under-construction ILLIAC IV building.

On 24 August 1970 a truck bomb destroyed Sterling Hall at the University of Wisconsin-Madison, which housed the United States Army Mathematics Research Center. The bombing killed Robert Fassnacht, a thirty-three-year-old physicist working overnight on a thesis-related experiment, and injured three others. The Sterling Hall bombing was the proximate alarm bell for ARPA: it had demonstrated that anti-Department-of-Defense-campus-research protest could be lethal.8

In January 1971 ARPA’s Information Processing Techniques Office, then directed by Lawrence Roberts, made the decision to deliver the ILLIAC IV to a federal site rather than to the University of Illinois. Roberts later told the IEEE Spectrum journalist Howard Falk: “University people who might run it … are unwilling to look at some kinds of problems; maybe the classified ones, maybe just sensitive ones … Was the university the right organization to manage a large operational undertaking? … The answer was generally no.” The destination of the machine was unsettled in early 1971.9

The institution that came forward to take it was NASA Ames.

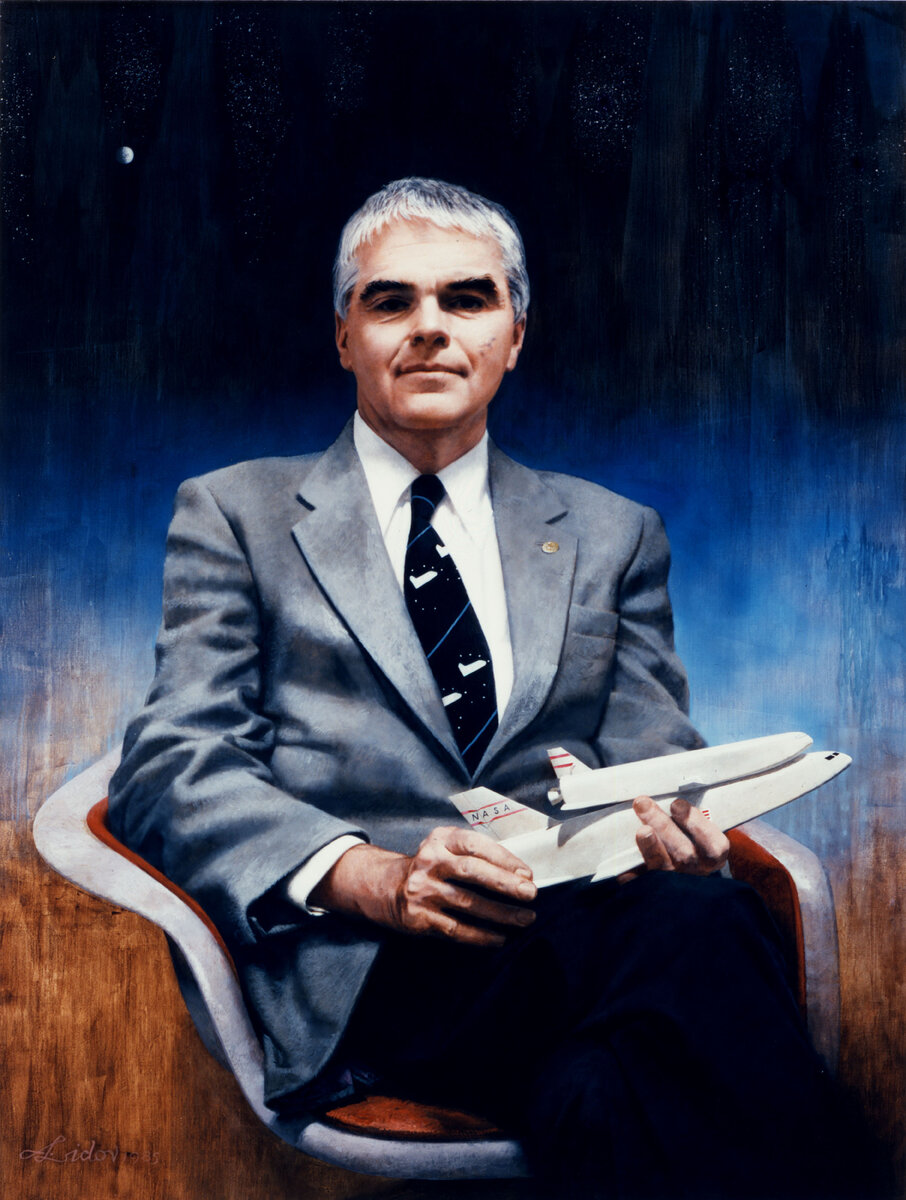

Hans Mark and Edward Teller

Hans Michael Mark, born 17 June 1929 in Mannheim Germany, son of an Austrian chemistry professor, emigrated to the United States in 1940, took his doctorate in physics at the Massachusetts Institute of Technology in 1954, and held positions at Berkeley, Lawrence Livermore (where he worked under and befriended Edward Teller), and Stanford before being appointed Director of NASA Ames Research Center on 20 February 1969. He served as Director of Ames for eight and a half years, until 15 August 1977, when he resigned to become Under Secretary and then Secretary of the United States Air Force under Carter and Reagan. Later he served as Deputy Administrator of NASA, then as Chancellor of the University of Texas System from 1984 to 1992, and finally as Director of the Department of Defense’s Advanced Technology Office through the early 1990s. He died on 18 December 2021 in Austin Texas at the age of ninety-two.10

In early 1971 – after the January 1971 ARPA decision to move the machine but before a destination had been settled – Mark heard through Teller, his former Livermore colleague, that the ILLIAC IV “was in play.” Mark dispatched two senior staff members to negotiate with ARPA: Dean Chapman, the Ames Thermo- and Gas-Dynamics Division chief, and Loren Bright, Ames’s Director of Research Support. Chapman and Bright promised ARPA that Ames could “get the Illiac to work and prove the concept of parallel processing” and “would get a return on DARPA’s $31 million investment by generating applications in the emergent field of computational fluid dynamics.” ARPA accepted Ames’s offer.11

The institutional structure under which the machine ran at Ames was the Institute for Advanced Computation (IAC), formed 1971 under an interagency agreement between DARPA and NASA Ames, jointly funded. Mel Pirtle was its long-serving Director; he had been at Ames since 1969 working on the centre’s IBM 360/67 surplus from the cancelled Manned Orbiting Laboratory project, and would run the IAC throughout the ILLIAC IV’s operational life. The interagency agreement expired in June 1979, after which the machine became NASA-only and IAC staff was reduced from approximately 115 to approximately 85 people.12

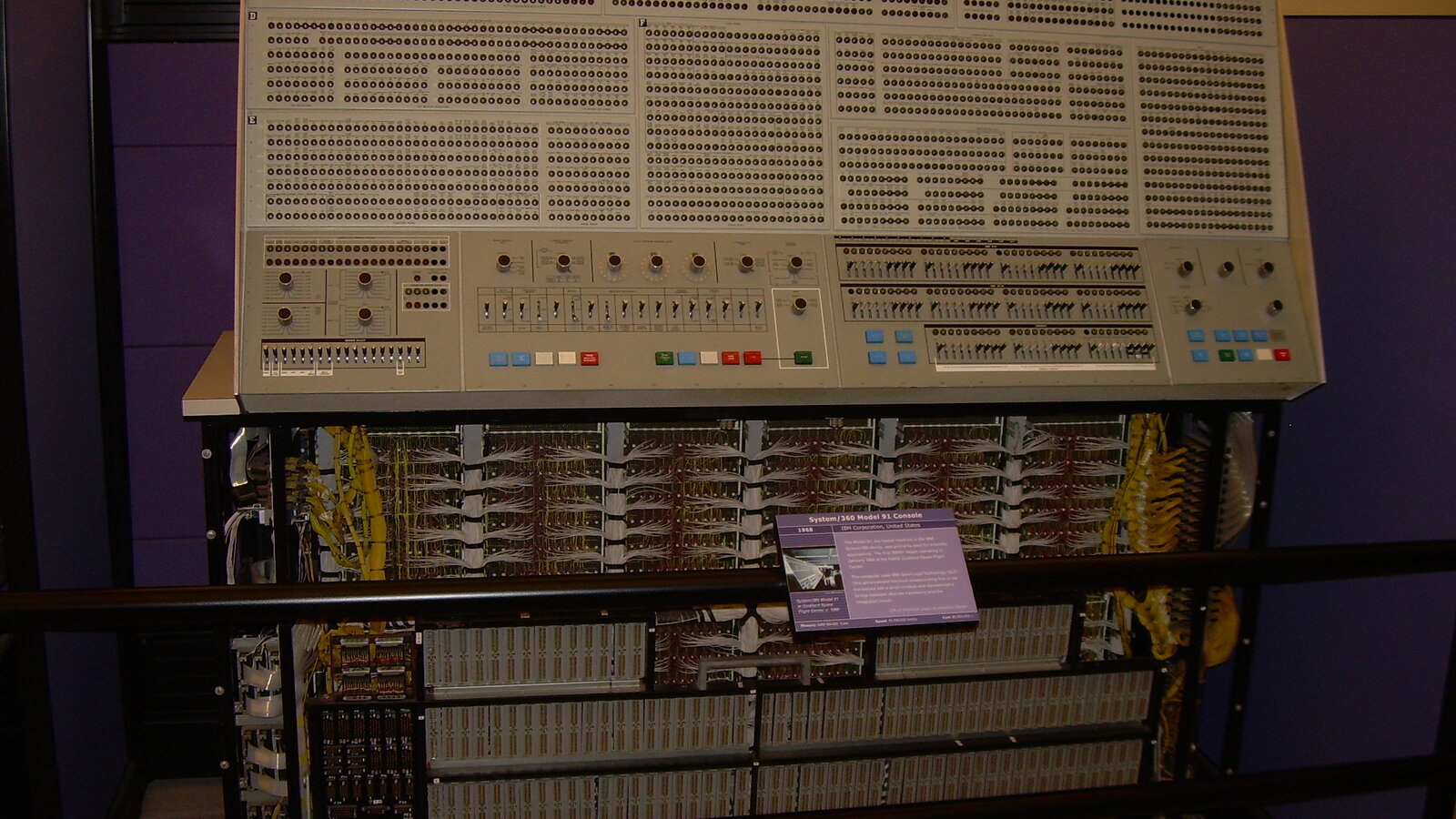

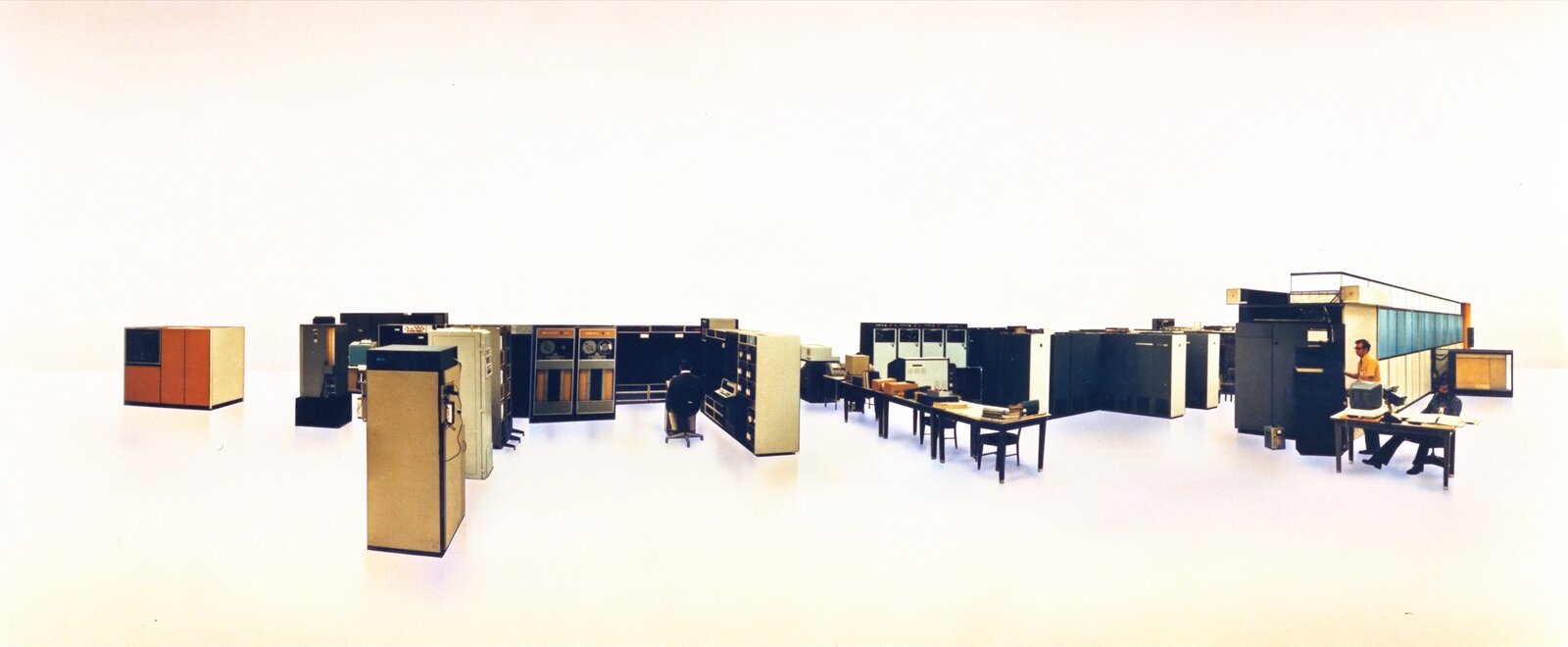

The first sixty-four-PE quadrant – the only quadrant ever built – was assembled by Burroughs at Paoli through 1971-1972 and delivered to NASA Ames in April 1972. It was installed in the Central Computer Facility, Building N-233, on Moffett Field, Mountain View California. The institutional partition that defined American supercomputing for the rest of the decade was now in place: NCAR had its CDC 7600 in Boulder, Lawrence Livermore had its CDC 7600 plus three more, NASA Ames had its ILLIAC IV in Mountain View, the National Meteorological Center at Suitland Maryland would have its three IBM 360/195s from March 1974 onward, and the Geophysical Fluid Dynamics Laboratory at Princeton had its Texas Instruments Advanced Scientific Computer. Five sites, four different machines, four different vendors, four different architectures.

The lost three years

The machine arrived at Ames in April 1972 and was not declared operational until November 1975. Three and a half years passed in pre-operational struggle. The official Ames history, Glenn Bugos’s Atmosphere of Freedom (NASA SP-4314, 2010), summarises the period in two sentences:

“For three years, the Illiac was little used as researchers tried to program the machine knowing the results would likely be erroneous. In June 1975, Ames made a concerted effort to shake-out the hardware – replace faulty printed circuit boards and connectors, repair logic design faults in signal propagation times, and improve power supply filtering to the disk controllers.”13

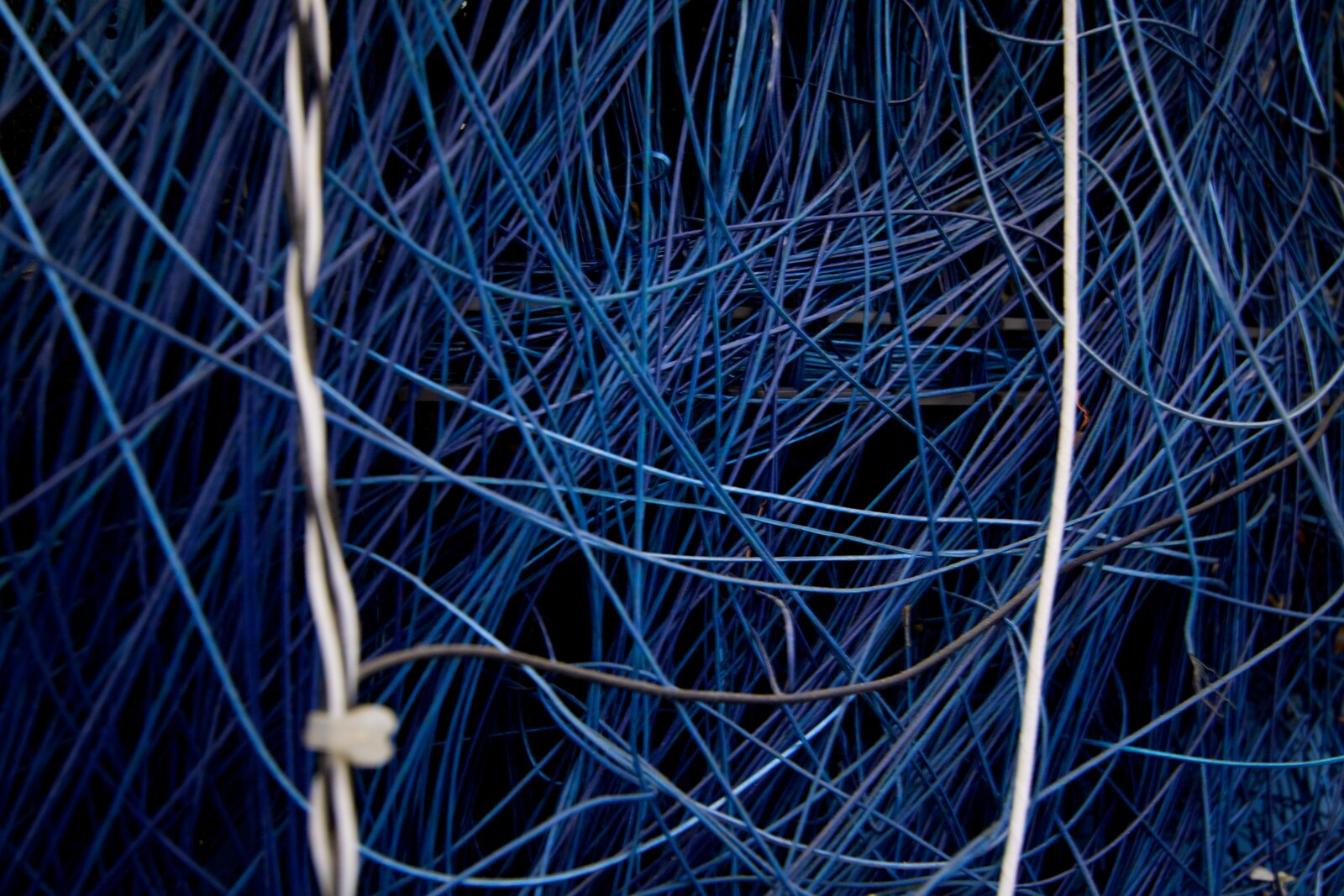

Hord’s 1982 book describes the same period in more vivid terms. “The Illiac was not ready; it was down almost all of the time and when it was available, arithmetic errors without diagnostics were rampant.” A program would crash with a wrong answer and the machine had no facility for telling the operator which of the sixty-four processing elements had produced the wrong result. Some user groups developed an unusual workaround:

“During this period some programmers divided the Illiac into three sections of 21 processors each and worked the problem three times in parallel. Frequently the intermediate results from the three sections would be compared. If any two agreed, that would be taken as correct and the calculation would proceed. If no two agreed, the program would branch back to the previous checkpoint to try again. The program would be allowed to branch back dozens of times before giving up and aborting.”14

The triple-redundancy-on-twenty-one-processors workaround is a small, vivid window onto what early ILLIAC IV programming was like. The machine was so unreliable that users built majority-vote schemes into their codes to detect arithmetic errors at runtime. Two sections of twenty-one processors agreed; the result stood. No two agreed; you went back to the checkpoint. Three thousand miles east, at IBM Poughkeepsie and at the Watson Research Center in Yorktown Heights, Robert Tomasulo’s algorithm sat dormant in the literature; in another twenty years it would be revived in the 1995 Pentium Pro and would form the architectural backbone of most high-performance out-of-order microprocessors. The ILLIAC IV was not a Tomasulo machine. It was sixty-four processors taking broadcast instructions from a single central control unit, with no facility for catching and recovering from individual processor faults at runtime. The lockstep SIMD philosophy and the dynamic out-of-order philosophy, both products of the mid-1960s, were as different as two computer architectures could be. One produced the ILLIAC IV; the other produced the Pentium Pro and Apple Silicon.
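
The vote-and-retry scheme Hord describes can be sketched in a few lines of Python. This is only an illustration of the control flow, not a reconstruction of any actual ILLIAC IV code: the names `vote` and `run_with_voting`, the `step` callback, and the retry limit of 24 (standing in for Hord's "dozens of times") are all assumptions made for the example.

```python
import random

def vote(results):
    """Return the value that at least two of the three sections agree on, else None."""
    a, b, c = results
    if a == b or a == c:
        return a
    if b == c:
        return b
    return None

def run_with_voting(step, state, n_steps, max_retries=24, rng=None):
    """Advance `state` by n_steps, computing every step three times and voting.

    `step(state, rng)` is a hypothetical, possibly faulty computation standing
    in for one section of 21 processors working the problem.
    """
    rng = rng or random.Random(0)
    for _ in range(n_steps):
        checkpoint = state                      # last result that passed a vote
        for _attempt in range(max_retries):
            results = [step(checkpoint, rng) for _ in range(3)]
            agreed = vote(results)
            if agreed is not None:
                state = agreed                  # two sections agree: accept and proceed
                break
            # no two sections agree: branch back to the checkpoint and try again
        else:
            raise RuntimeError("no two sections agreed; giving up and aborting")
    return state

def flaky_increment(x, rng, error_rate=0.2):
    """Toy faulty step: add 1, but occasionally return a corrupted value."""
    if rng.random() < error_rate:
        return x + 1 + rng.randint(1, 1000)
    return x + 1
```

Calling `run_with_voting(flaky_increment, 0, 100)` exercises the retry path. The scheme is not airtight: two sections can in principle agree on the same wrong value, and the vote costs two-thirds of the machine's throughput even when nothing goes wrong.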

The November 1975 operational milestone coincided with a second, equally important event: the ILLIAC IV’s connection to ARPANET. The machine was the first network-accessible supercomputer in history; the Cray-1 of 1976 would not connect to a wide-area network until much later in its life. Users could access the ILLIAC IV remotely from anywhere on the network. This network-first architecture was as important as the SIMD architecture itself: it foreshadowed, by more than a decade, what the National Science Foundation supercomputer centres of the late 1980s would do in production.

Sixty-four processors in lockstep

The architecture, as built, consisted of sixty-four Processing Elements (PEs) operating under a single Control Unit. Each PE was a 64-bit floating-point arithmetic processor with a 1+15+48 bit format (one sign bit, fifteen-bit exponent, forty-eight-bit mantissa) running on a sixteen-megahertz clock. Each PE had two thousand and forty-eight words of local memory, called PE Memory or PEM. Eight PEs shared a disk channel; sixty-four PEs shared a single Control Unit. The Control Unit broadcast each instruction simultaneously to all sixty-four PEs; each PE either executed the instruction on its local data or, depending on the value of its “mode bit,” sat idle for that cycle while the others ran. Branches were emulated by setting and unsetting mode bits across the array.15
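
A rough Python sketch of how mode bits stand in for branches may help. It models the array as a list with one entry per PE; the function names (`broadcast`, `simd_abs`, `simd_if_else`) are invented for this illustration and do not correspond to ILLIAC IV instructions.

```python
def broadcast(op, data, mode):
    """Apply `op` on every PE whose mode bit is set; the other PEs sit idle."""
    return [op(x) if active else x for x, active in zip(data, mode)]

def simd_abs(data):
    """Emulate `if x < 0: x = -x` across the whole array."""
    mode = [x < 0 for x in data]            # each PE sets its mode bit from a local test
    return broadcast(lambda x: -x, data, mode)

def simd_if_else(data, cond, then_op, else_op):
    """Emulate an if/else: both bodies are broadcast, each PE runs exactly one."""
    mode = [cond(x) for x in data]
    data = broadcast(then_op, data, mode)                   # 'then' pass
    data = broadcast(else_op, data, [not m for m in mode])  # 'else' pass, mask inverted
    return data

# Example: halve the even entries, apply 3x + 1 to the odd ones.
result = simd_if_else([1, 2, 3, 4], lambda x: x % 2 == 0,
                      lambda x: x // 2, lambda x: 3 * x + 1)
# result == [4, 1, 10, 2]
```

The cost is visible in `simd_if_else`: the array spends time on both branch bodies regardless of how many PEs take each one, so heavily data-dependent control flow wastes cycles.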

Inter-PE communication was through a chordal-ring network: each PE was connected to four neighbours, the PEs at offsets ±1 and ±8 in modulo-64 wraparound. (This is almost an eight-by-eight toroidal mesh; the difference is that the ±1 links run on from the end of one row of eight to the start of the next rather than wrapping within the row.) Communications between non-adjacent PEs took multiple hops. The bandwidth was high but the latency was non-uniform across the array, which complicated programming in subtle ways.
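
The hop counts implied by the ±1 and ±8 connections can be worked out with a short breadth-first search. The sketch below assumes only the connectivity stated above; the function names are made up for the example.

```python
from collections import deque

N_PE = 64

def neighbours(pe, n=N_PE):
    """The four PEs directly wired to `pe`: offsets +1, -1, +8, -8, modulo n."""
    return [(pe + d) % n for d in (1, -1, 8, -8)]

def hops_from(src, n=N_PE):
    """Minimum number of routing hops from `src` to every PE (breadth-first search)."""
    dist = {src: 0}
    queue = deque([src])
    while queue:
        pe = queue.popleft()
        for nb in neighbours(pe, n):
            if nb not in dist:
                dist[nb] = dist[pe] + 1
                queue.append(nb)
    return [dist[i] for i in range(n)]

hops = hops_from(0)
# neighbours(0) == [1, 63, 8, 56]
# neighbours(7) == [8, 6, 15, 63]   (the +1 link from PE 7 runs on to PE 8)
# max(hops) == 7                    (the farthest PE is seven hops away)
```

A transfer to a directly wired neighbour costs one hop while the worst case costs seven, which is the non-uniformity a programmer had to plan data layout around.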

The chassis was a single quadrant standing approximately ten feet high, eight feet deep, fifty feet long. The full installation occupied an eleven-thousand-seven-hundred-square-foot computer bay in NASA Ames Building N-233. Cooling was forced air: two hundred and eighty-one tons of air-conditioning, the equivalent of the air-conditioning capacity of an entire mid-size office building, dedicated to a single computer. (The CDC 7600 of the previous post, by contrast, was cooled in liquid freon. The Cray-1 of 1976 would also be freon-cooled. The ILLIAC IV’s air-cooling was a deliberate Burroughs design choice and an unusual one for a supercomputer of its class.) Total system power consumption was 1.2 megawatts.16

The logic family was emitter-coupled-logic integrated circuits in the array proper, with transistor-transistor logic in the peripheral and front-end systems. The original front-end machine was a Burroughs B6500; this was later replaced at Ames by a DEC PDP-10. The disk system was the Burroughs Disk File, a thin-film-and-rotating-disk hybrid storage system that was itself a substantial engineering project; it was later replaced by a Burroughs DSDF.

The cost of the system, including the abandoned three quadrants, was approximately thirty-one million dollars in 1972. In 2026 dollars that figure is approximately two hundred and twenty million. The total system included the array proper, the Burroughs front-end, the Disk File, the air-conditioning plant, and a dedicated mass-storage backing system; the array proper accounted for about half the cost. The cost per delivered floating-point operation per second was approximately one hundred and twenty-five dollars per FLOPS at sustained rate. (The CDC 7600, by way of contrast, was approximately fifteen dollars per FLOPS sustained.) On those figures, the ILLIAC IV was roughly eight times more expensive per delivered FLOPS than the CDC 7600 it competed with.

Eighty per cent seismic

The most surprising fact about the ILLIAC IV’s operational life at Ames is the workload mix. Hord 1982 quotes the late-1976 split:

“These equations [aerodynamic flow] were important to the NASA Ames users, who now take up about 20 percent of Illiac IV operating time solving aerodynamic flow equations. The remaining 80 percent of Illiac IV time is taken up by a diverse, and often anonymous, group of users, many of whom still use the GLYPNIR language.”17

Twenty per cent of the machine’s wall-clock time went to NASA Ames Computational Fluid Dynamics. Eighty per cent went to remote users on ARPANET, principally Department of Defense classified seismic-data-processing and signal-processing work funded by DARPA’s Nuclear Monitoring Research Office. The connection ran back to NORSAR, the Norwegian Seismic Array, established in 1968 as a Norwegian-American collaboration funded jointly by ARPA and the Norwegian government as a treaty-verification project for nuclear-test detection. NORSAR generated very large volumes of seismic data; the offline processing in particular suited a Single Instruction Multiple Data architecture because the same algorithm was applied independently to each of many seismic channels.18
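
The fit between multi-channel seismic processing and a lockstep array can be illustrated with a toy example. The sketch below assumes one channel per processing element and uses a plain moving average as a stand-in for the real (and unspecified) seismic algorithms; the function name and the sample data are invented for illustration.

```python
def lockstep_moving_average(channels, window):
    """Apply the same moving-average filter to every channel, one channel per PE.

    The outer loop is the single instruction stream; the inner loop stands in
    for work that every PE would do at the same moment on its own local data.
    """
    n_pe = len(channels)
    n_samples = len(channels[0])            # assumes all channels have equal length
    out = [[] for _ in range(n_pe)]
    for t in range(window - 1, n_samples):  # one broadcast step per output sample
        for pe in range(n_pe):              # conceptually simultaneous across PEs
            segment = channels[pe][t - window + 1 : t + 1]
            out[pe].append(sum(segment) / window)
    return out

smoothed = lockstep_moving_average([[1, 2, 3, 4], [10, 10, 10, 10]], window=2)
# smoothed == [[1.5, 2.5, 3.5], [10.0, 10.0, 10.0]]
```

No channel ever needs another channel's data and no channel takes a different branch from the others, so neither the routing network nor the mode bits get in the way.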

The principal seismic codes that ran on the ILLIAC IV at Ames were:

TRES / I4TRES, a three-dimensional finite-difference earthquake-source simulation code. Originally developed by Systems Science and Software Inc. for the UNIVAC 1108, ported to ILLIAC IV by A. Stewart Hopkins, “successfully completed all acceptance tests early in 1978.” Used to discriminate between natural earthquakes and underground nuclear tests for treaty-verification purposes; also used by the United States Nuclear Regulatory Commission to assess seismic hazard for the San Onofre nuclear power plant.19

FKCOMB, a long-period seismic signal analysis procedure for “calculating discriminants between earthquakes and nuclear explosions.” Hord notes: “it may become an integral part of data processing on the seismic network.” Used by Teledyne-Geotech for the United States Air Force Geophysics Laboratory under DARPA contract.20

The Fixed/Mobile Experiment, a classified DARPA Tactical Technology Office project run from November 1975 to October 1976, to which the entire ILLIAC IV was dedicated almost exclusively. Principal contractor: Ensco Inc., Springfield Virginia. “The details of the activity cannot be discussed here but the effort was ultimately successful and developed confidence in some sectors that the Illiac could be counted upon for useful work” (Hord 1982 p. 124). The precise mission remains classified at the United States Department of Defense Secret level. It is generally interpreted in the secondary literature as a real-time signal-processing experiment for tactical signals-intelligence purposes.

The first full year of ILLIAC IV operational life – November 1975 to October 1976 – was therefore approximately one hundred per cent classified DARPA work. The machine that had been built, sold, and politically defended as a tool for science was, in its first operational year, an instrument of the United States nuclear-weapons-treaty-verification programme and of tactical signals intelligence. The remaining five years, 1976-1981, ran at approximately the four-to-one DARPA-to-NASA mix. NASA Ames Computational Fluid Dynamics was a minority workload on the machine NASA Ames had taken delivery of.

What ran for the climate

The ILLIAC IV’s original sponsor list – the application areas Slotnick and the ARPA programme office had identified in 1965-1966 to justify funding the project – had explicitly included climate modelling and numerical weather prediction. Hord lists the original target application areas: “ballistic missile defense analyses, reactor design calculations, climate modelling, large linear programming, hydrodynamic simulations, seismic data processing, and a host of others.”21 In other words, when the United States Department of Defense paid the cost overruns from $8 million to $31 million between 1966 and 1972, one of the things it expected to get from the machine was a working tool for atmospheric general circulation modelling.

It did not get one.

Two general-circulation-model conversions to ILLIAC IV were attempted at NASA Ames between 1973 and 1975. Both failed. Hord 1982 records the failure on page 123 in two short paragraphs:

“To some degree the reputation [of being a ‘disaster machine’] was deserved. The Goddard Institute for Space Sciences Global Circulation Climate Model implementation (conversion) effort, for example, was undertaken during this period; it was never validated as working. At first direct line for line conversion was attempted. Later a restructuring of the code to better match the Illiac characteristics was tried. At last report, after a major effort, the Illiac version of the code ran to completion but it didn’t make weather. Negative atmospheric pressures would occur in the course of the simulation.”

“To some degree the reputation was not deserved. The conversion of the Fleet Numeric Weather Central Primative Equation Weather Model conversion was another project that was begun and later abandoned. This exercise depended not only on an Illiac advertised as experimental, but also on the IVTRAN compiler that was advertised not yet to have been debugged. The failure of this project is not properly ascribed to the Illiac, but to impatience to use systems not yet in place.”22

The Goddard Institute for Space Studies general circulation climate model was, as we have already seen, the descendant of the Mintz-Arakawa UCLA general circulation model that had been built in Akio Arakawa’s UCLA group from the early 1960s onwards. Yale Mintz had moved east from UCLA to GISS in the late 1960s; he had taken the model with him; the resulting GISS GCM was a nine-level primitive-equation model that ran in 1973-1975 on NASA Goddard’s IBM 360/95. The conversion of the GISS GCM to the ILLIAC IV was undertaken in two phases. The first phase was a line-by-line port; that did not work, because of inevitable differences in floating-point semantics between the IBM 360/95 and the ILLIAC IV. The second phase restructured the code to vectorise per-PE, mapping the grid columns of the model onto the sixty-four processing elements; this completed but produced negative atmospheric pressures during simulation – meaning the numerical scheme was not stable on the SIMD machine. The most plausible technical explanation is that the lockstep PE assignment broke down at the convergence of meridians at the polar grid, where the longitudinal grid spacing approaches zero and finite-difference schemes need careful special-case handling that the SIMD model could not gracefully accommodate. The conversion was never validated. The model never produced a publishable scientific result on the ILLIAC IV.23
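
The geometry behind the polar problem is easy to put numbers on. The sketch below shows only how east-west grid spacing, and with it the largest stable explicit time step under a simple Courant-type limit, shrinks towards the pole on a regular latitude-longitude grid. The five-degree longitude spacing and the 300 m/s wave speed are illustrative assumptions, not parameters taken from the GISS model, and nothing here reproduces the actual failure.

```python
import math

EARTH_RADIUS_M = 6.371e6

def zonal_spacing_m(lat_deg, dlon_deg):
    """East-west distance between neighbouring grid points at a given latitude."""
    return EARTH_RADIUS_M * math.cos(math.radians(lat_deg)) * math.radians(dlon_deg)

def max_stable_timestep_s(lat_deg, dlon_deg, wave_speed_ms=300.0):
    """Simple Courant-type limit: dt <= dx / c (assumed wave speed, illustrative only)."""
    return zonal_spacing_m(lat_deg, dlon_deg) / wave_speed_ms

for lat in (0, 60, 80, 88):
    dx_km = zonal_spacing_m(lat, 5.0) / 1000.0
    dt_s = max_stable_timestep_s(lat, 5.0)
    print(f"lat {lat:2d}: dx = {dx_km:7.1f} km, dt limit = {dt_s:7.1f} s")
```

On a serial machine the rows nearest the pole can be given their own filtering or a shorter time step as a special case. On a lockstep array every such special case means masking off most of the processing elements while a few do the extra work.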

The Fleet Numerical Weather Central Primitive Equation Model had a different fate. FNWC, sited at the Naval Postgraduate School in Monterey California (a story already partly told in our post on the CDC 1604, which arrived at NPS as serial number one in January 1960), ran the United States Navy’s operational global weather forecasting model on a CDC 6500. The conversion of the FNWC model to ILLIAC IV was undertaken using the as-yet-undebugged IVTRAN FORTRAN compiler. It was abandoned. Hord’s careful phrasing – “The failure of this project is not properly ascribed to the Illiac, but to impatience to use systems not yet in place” – absolves the hardware. The compiler, not the architecture, killed the FNWC port.

Two attempted GCM ports, two failures. No general circulation model is recorded as having produced validated scientific output on the ILLIAC IV.

Robert Rogallo and the one real result

The one piece of atmospheric-science work that did produce results on the ILLIAC IV at Ames was the direct numerical simulation of homogeneous incompressible turbulence by Robert S. Rogallo, an Ames Computational Fluid Dynamics Branch scientist. Rogallo had begun looking at the ILLIAC IV’s architecture and assembly language in 1971, before the machine arrived. By 1973 he had written a Fortran-like programming language called simply CFD that “looked like Fortran, and could be debugged on a Fortran computer, but that forced programmers to take full advantage of the parallel hardware by writing vector rather than scalar instructions.” CFD’s distinctive design move was that it had five primitive data types – CU INTEGER, CU REAL, CU LOGICAL, PE REAL, PE INTEGER – where the type statically encoded the home of the variable, either the central control unit or the per-PE memory. The programmer had to think explicitly about which data lived where, all the time.24
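
The idea of a type that records where a variable lives can be mimicked in Python, with the caveat that CFD made the distinction statically and Python can only check it at run time. The classes below borrow the names CU REAL and PE REAL from the list above, but their behaviour is invented for illustration and is not a description of the CFD language's actual semantics.

```python
from dataclasses import dataclass

N_PE = 64

@dataclass(frozen=True)
class CUReal:
    """A single value held in the control unit (hypothetical model)."""
    value: float

@dataclass(frozen=True)
class PEReal:
    """One value per processing element, held in PE memory (hypothetical model)."""
    values: tuple

    def __post_init__(self):
        if len(self.values) != N_PE:
            raise ValueError(f"a PE variable needs exactly {N_PE} values")

    def __add__(self, other):
        if isinstance(other, CUReal):
            # A CU scalar is broadcast to every PE.
            return PEReal(tuple(v + other.value for v in self.values))
        if isinstance(other, PEReal):
            # PE-to-PE arithmetic is elementwise, each PE using its own local data.
            return PEReal(tuple(a + b for a, b in zip(self.values, other.values)))
        return NotImplemented

grid = PEReal(tuple(float(i) for i in range(N_PE)))
shifted = grid + CUReal(1.0)     # one broadcast add across all 64 PEs
doubled = grid + grid            # elementwise add, no inter-PE communication
```

The useful property is that mixing the two kinds of data is always an explicit, visible operation, so the programmer is constantly reminded which values are shared and which are per-PE.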

In CFD, with the ILLIAC IV programmed at the data level, Rogallo extended the spectral-method work of Steven Orszag and Geoffrey Patterson (1972) to the simulation of homogeneous turbulence subjected to uniform deformation or rotation. He used a truncated triple Fourier series in space and a fourth-order Runge-Kutta time scheme, on a 128 by 64 by 64 grid – approximately five hundred thousand grid points, a very large simulation by the standards of 1976. The work was published as NASA Technical Memorandum TM-81315 in 1981, “Numerical experiments in homogeneous turbulence,” and is the single most cited atmospheric-fluid-dynamics result from the ILLIAC IV. Rogallo’s paper is foundational to all subsequent direct numerical simulation of turbulence – a field of substantial atmospheric, oceanic, and engineering relevance.25

A direct numerical simulation of homogeneous turbulence is not a weather forecast and is not a climate model. It is a basic fluid-dynamics calculation that sits one or two abstraction levels below where the GCMs of the 1970s operated. But it produced results, on the ILLIAC IV, that the wider scientific community could use and build on. To the question “did anything atmospheric work on the ILLIAC IV?” the answer is: Rogallo’s homogeneous turbulence DNS, and Fred Alyea’s MIT stratospheric chemistry-transport model running over ARPANET (a different application again – chemistry rather than dynamics – and one that consumed perhaps two hundred ILLIAC IV hours per year through the late 1970s), and not much else.

Decommissioning, and Slotnick

The ILLIAC IV was decommissioned on 7 September 1981, six years after it had been declared operational and more than nine years after it had arrived at Ames. The official decommissioning ceremony was understated; Mel Pirtle’s IAC staff was reduced; the chassis was kept in storage at Ames for several years before one Processing Element and one Control Unit chassis were transferred to the Computer History Museum in Mountain View California (less than a mile from where the machine had run), where they remain on display today.

NASA Ames replaced the ILLIAC IV with a Cray 1S in 1981, a CDC Cyber 205 and a Cray X-MP/22 in 1984, a Cray X-MP/48 in 1986, and finally the new NAS (Numerical Aerodynamic Simulation) facility in March 1987, which opened with a Cray-2 acquired in September 1985. The NAS facility represented Hans Mark’s revenge: he had been forced out of Ames in August 1977 in part because the ILLIAC IV had not delivered on its promise, and he had subsequently used his Department of Defense and NASA Headquarters appointments to push for the NAS facility’s establishment. The hand-written OMB note in his files reads simply, “sold it.” NAS and its successors – the Pleiades supercomputer cluster of 2008 and onwards – carried Mark’s vision of NASA Ames as a leadership-class supercomputing centre forward into the modern era. Without the Cray 1S, the X-MP, the Cray-2, and the NAS facility, the institutional memory of Ames-as-a-supercomputing-site might have died with the ILLIAC IV. Instead Ames is, as of 2026, one of the most consequential supercomputing centres in the United States Federal Government.26

Daniel Slotnick survived his machine by four years. He had stayed at the University of Illinois through the entire ILLIAC IV episode and had continued to teach and supervise graduate students after the machine moved to Ames in 1972. He received the IEEE W. Wallace McDowell Award in 1983 “for his pioneering work in parallel processing including the design and construction of the ILLIAC IV computer.” On 25 October 1985, while jogging in Baltimore Maryland during a visit, he suffered an apparent heart attack and died at age fifty-four. The Eckert-Mauchly Award that year went to John Cocke for his work at IBM.27

The architectural arc that returned

The ILLIAC IV was a commercial dead end in 1981. But the architectural philosophy it embodied – many small processors operating in lockstep against parallel data, programmed at the data level rather than at the instruction level – did not die with the machine. Less than four years after the decommissioning, in 1985, W. Daniel Hillis at the Massachusetts Institute of Technology Artificial Intelligence Laboratory completed his doctoral thesis on a parallel computer he called the Connection Machine. Hillis had already incorporated Thinking Machines Corporation in the Boston area in 1983 to commercialise the design. The Connection Machine CM-1 (1985) had 65 536 processing elements – one thousand and twenty-four times as many as the ILLIAC IV’s sixty-four – arranged in a hypercube communication network, with instructions broadcast from a host front-end. The CM-2 (1987) and CM-5 (1991) were direct descendants. Thinking Machines went bankrupt in 1994, but the architectural pattern persisted elsewhere: in the MasPar MP-1 (1990), the Burroughs Scientific Processor (which never shipped commercially), the NEC SX vector machines (which were not strictly SIMD but used many of the same compilation patterns), and a long thin scattering of academic and research SIMD efforts through the 1990s.

The technology that finally made SIMD pay off commercially, after thirty years of dead-ending, was the graphics processing unit. NVIDIA’s G80 (delivered 2006) was the first GPU with a fully programmable Single Instruction Multiple Thread (SIMT) architecture; the architectural philosophy of one instruction broadcast to many parallel processing elements, with per-element mode bits to handle conditional branches, came back almost unchanged from the ILLIAC IV. NVIDIA’s CUDA programming model (2006-2007) was the first commercially successful evolution of Rogallo’s CFD-language idea – programming explicitly at the data level, with the type system tracking which data live in shared and which in per-thread memory. Modern GPU SIMT is the direct descendant of ILLIAC IV SIMD. The lineage runs ILLIAC IV (1975) → Connection Machine (1985) → NVIDIA G80 (2006) → modern H100 (2022) → the entire deep-learning compute substrate of the 2020s.
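The mode-bit mechanism is easier to see in a small simulation. The sketch below is a hypothetical Python model, not ILLIAC IV assembly or CUDA: the names `broadcast`, `lockstep_if_else`, and `NUM_PES`, and the example data, are assumptions made purely for illustration. One control loop issues both arms of a conditional to all sixty-four elements, and a per-element mode bit decides which elements commit each result.

```python
NUM_PES = 64  # the ILLIAC IV had sixty-four processing elements


def broadcast(op, data, mode_bits):
    """Issue one operation to every PE; only PEs whose mode bit is set commit the result."""
    return [op(x) if enabled else x for x, enabled in zip(data, mode_bits)]


def lockstep_if_else(data, predicate, then_op, else_op):
    """Emulate `x = then_op(x) if predicate(x) else else_op(x)` across all PEs in lockstep."""
    # Each PE evaluates the predicate on its own datum and sets its mode bit.
    mode_bits = [predicate(x) for x in data]
    # The 'then' arm is broadcast to everyone; PEs with the bit clear keep their old value.
    data = broadcast(then_op, data, mode_bits)
    # The mode bits are inverted and the 'else' arm is broadcast in turn.
    inverted = [not bit for bit in mode_bits]
    data = broadcast(else_op, data, inverted)
    return data, mode_bits


if __name__ == "__main__":
    # Hypothetical data: one value per PE, half of them negative.
    pressures = [float(i - 32) for i in range(NUM_PES)]
    result, bits = lockstep_if_else(
        pressures,
        predicate=lambda p: p < 0.0,   # which PEs hold a negative value?
        then_op=lambda p: 0.0,         # clamp negatives to zero
        else_op=lambda p: p * 2.0,     # arbitrary work for the rest
    )
    print(sum(bits), "PEs took the 'then' arm")
```

Both arms are issued to the whole array regardless of how many elements need them, which is why divergent branches are costly in any lockstep design, whether the elements are called PEs or threads.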

The atmospheric science community, whose Goddard Institute general circulation model in 1975 had produced “negative atmospheric pressures” on the ILLIAC IV, would by the late 2010s and 2020s be running large fractions of its general circulation models on GPU clusters. The architectural philosophy that could not be made to do weather in 1975 had become, by 2025, the dominant computational substrate for weather and climate modelling in the world. The graphics processing units in NCAR’s CISL Casper supercomputer cluster, in the European Centre for Medium-Range Weather Forecasts’ new generation of forecast models, in the UK Met Office’s Cray-EX systems, in NOAA EMC’s Hera and Mojave: every one of them is, in microarchitectural ancestry, a descendant of the machine that NASA Ames decommissioned on 7 September 1981.

The Mintz-Arakawa-Hansen GISS general circulation model, which ran to completion on the ILLIAC IV in 1975 but produced negative atmospheric pressures along the way, is the direct conceptual ancestor of the modern GISS climate model. That model, run today as part of the Coupled Model Intercomparison Project that the Intergovernmental Panel on Climate Change synthesises, runs on GPU clusters at Goddard. The failure of 1975 was the architectural choice that came back. It just took fifty years for the silicon to catch up to the philosophy.

The path not taken, until it was

The ILLIAC IV’s place in the history of high-performance scientific computing has, since the early 2000s, been increasingly framed by computer architects in terms of “the path not taken.” Cray’s vector philosophy – one fast central processor with deeply pipelined functional units, plus vector registers to hide memory latency – won the commercial supercomputer market from 1976 (Cray-1 first delivery) through approximately 2000 (the last Cray T90 deployments). Slotnick’s SIMD philosophy – many small processors executing one broadcast instruction across many data items – was the loser. The two philosophies were designed in the same decade by competing teams at Westinghouse, Illinois, Burroughs, IBM, and CDC; the SIMD philosophy won the funding fight at ARPA, and the vector philosophy won the commercial market.

The retrospective shift since approximately 2006 has been to recognise that Slotnick’s philosophy was not wrong, only too early. Sixty-four PEs operating in lockstep at sixteen megahertz against two-thousand-and-forty-eight-word per-PE local memories was simply too small a substrate to make the SIMD model commercially relevant in 1975. The same architectural pattern at sixty-five thousand processing threads operating in lockstep at gigahertz clock speeds against gigabyte-class on-package memories – which is what an NVIDIA H100 is in 2024 – is one of the most consequential computer designs of the twenty-first century. The architectural insight was Slotnick’s. The commercial vehicle came forty years later.
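To make “too small a substrate” concrete, the 1975 figures quoted above can be turned into a back-of-envelope calculation. The sketch below is illustrative only. The 64-bit word size is an assumption inferred from the 1+15+48 floating-point format given in the architectural-specifications note, and one operation per PE per clock is a deliberately generous upper bound, not a measured rate. The function names are hypothetical.

```python
PES = 64                # processing elements (from the text)
WORDS_PER_PE = 2048     # words of local memory per PE (from the text)
BITS_PER_WORD = 64      # assumption: 1 + 15 + 48 bit floating-point word
CLOCK_HZ = 16_000_000   # sixteen megahertz (from the text)


def total_memory_bytes(pes=PES, words=WORDS_PER_PE, bits=BITS_PER_WORD):
    """Aggregate PE memory across the whole array, in bytes."""
    return pes * words * bits // 8


def peak_ops_per_second(pes=PES, clock_hz=CLOCK_HZ, ops_per_clock=1):
    """Upper bound: every PE completes ops_per_clock operations each cycle."""
    return pes * clock_hz * ops_per_clock


if __name__ == "__main__":
    print(total_memory_bytes())   # 1048576 bytes, i.e. 1 MiB
    print(peak_ops_per_second())  # 1024000000
```

Under these assumptions the whole array held about one mebibyte of PE memory, roughly a thousandth of a single gigabyte, which gives a sense of the gap between the 1975 machine and the gigabyte-class memories described above.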

NASA Ames Building N-233 still exists. The ILLIAC IV is gone, but the central computer facility itself – now the Pleiades and Aitken supercomputer halls, with their tens of petaflops of GPU compute – runs the descendants of the architectural philosophy that the building was built for in 1972. The CDC 7600 of the previous post won the 1975 contest. The ILLIAC IV won the 2025 contest. Both are real history. The path that Slotnick walked through Westinghouse, Illinois, Burroughs, ARPA, Hans Mark, and Mel Pirtle, the path that ended in negative atmospheric pressures and a Computer History Museum exhibit, was not the wrong path. It was the right path with the wrong technology, in the wrong decade. By the time the technology caught up, Slotnick had been dead for twenty years.

References

- Bouknight, W. J., Denenberg, S. A., McIntyre, D. E., Randall, J. M., Sameh, A. H., and Slotnick, D. L. “The Illiac IV system,” Proceedings of the IEEE 60(4):369-388, April 1972.

- Bugos, G. E. Atmosphere of Freedom: 70 Years at the NASA Ames Research Center, NASA SP-4314, 70th anniversary edition, 2010.

- Burroughs Corporation. ILLIAC IV Systems Characteristics and Programming Manual, NASA Contractor Report CR-2159, February 1973.

- Falk, H. “Reaching for a giga-FLOP,” IEEE Spectrum 13(10):65-70, October 1976.

- Gilliam, E. “ILLIAC IV and the Connection Machine,” Freaktakes, https://www.freaktakes.com/p/illiac-iv-and-the-connection-machine.

- Hord, R. M. The Illiac IV: The First Supercomputer, Springer-Verlag for the IEEE Computer Society, 1982.

- Manabe, S. and Bryan, K. “Climate Calculations with a Combined Ocean-Atmosphere Model,” Journal of the Atmospheric Sciences 26(4):786-789, July 1969.

- Rogallo, R. S. “Numerical experiments in homogeneous turbulence,” NASA Technical Memorandum TM-81315, 1981.

- Slotnick, D. L., Borck, W. C., and McReynolds, R. C. “The SOLOMON Computer,” AFIPS Conference Proceedings 22, Fall Joint Computer Conference 1962, pp. 97-107.

Footnotes

1. Hord, R. M. The Illiac IV: The First Supercomputer, Springer-Verlag for the IEEE Computer Society, 1982, p. 123. Hord was the official historian of the Institute for Advanced Computation and edited the IAC’s newsletters; the book is the canonical primary-source reference for the operational period 1975-1981. Springer PDF at https://link.springer.com/content/pdf/10.1007%2F978-3-662-10345-6.pdf.

2. Hord 1982, p. 123.

3. ILLIAC IV operational dates: November 1975 first declared operational, decommissioned 7 September 1981. Source: Hord 1982 p. 11; Bugos, G. E. Atmosphere of Freedom: 70 Years at the NASA Ames Research Center, NASA SP-4314, 2010, p. 216 (operational); Wikipedia “ILLIAC IV” (decommissioning date). NASA SP-4314 PDF at https://www.nasa.gov/wp-content/uploads/2023/03/sp-4314-2010.pdf.

4. Slotnick, D. L., Borck, W. C., and McReynolds, R. C. “The SOLOMON Computer,” AFIPS Conference Proceedings 22, Fall Joint Computer Conference 1962, pp. 97-107. The 1024-processor figure was the SOLOMON I target; the actual built machines were smaller prototypes. Westinghouse cancelled the programme in 1965.

5. ARPA contract administered by U.S. Air Force Rome Air Development Center, signed 1966. Burroughs contract signed 1967. Original budget eight million dollars. Source: Hord 1982 pp. 8-15; Eric Gilliam at freaktakes.com, “ILLIAC IV and the Connection Machine,” https://www.freaktakes.com/p/illiac-iv-and-the-connection-machine. Burroughs facility location: Paoli, Pennsylvania, with assembly at Great Valley PA. Some derivative secondary sources erroneously give “Pasadena California” for the Burroughs facility; this is incorrect, contradicted by every primary source.

6. Cost figures: $8M original (Feb 1966), $24M (Jan 1970), $31M (delivery April 1972). Source: Hord 1982 p. 15. The Slotnick “cost-plus-fixed-fee environment” quote: Hord 1982 p. 15.

7. The Daily Illini, 6 January 1970, “Department of Defense to employ UI computer for nuclear weaponry.” Quoted in Hord 1982 p. 8.

8. 24 February 1970 ROTC firebombing: U of Illinois Library, “March Riots (1970)” research guide. 9 May 1970 “day of Illiaction” rally: UIAA Alumni magazine 2020, “Flash Point” article. 24 August 1970 Sterling Hall bombing at UW Madison: see Wikipedia “Sterling Hall bombing”; library.wisc.edu/archives/exhibits/sterling-hall-bombing-of-1970. Robert Fassnacht’s killing was the proximate alarm bell for ARPA. The “Christmas Eve 1970” attempt on the Digital Computer Lab building that some popular sources mention is unsupported in primary documentation; the event chronology that is documented is the spring 1970 firebombings + the 24 August 1970 Sterling Hall bombing + the January 1971 ARPA decision.

9. Lawrence Roberts quoted in Falk, H. “Reaching for a giga-FLOP,” IEEE Spectrum 13(10):65-70, October 1976. Reprinted in Hord 1982 chapter II.A.2.

10. Hans Mark biographical: NASA biography at https://www.nasa.gov/people/hans-mark/; NASA Ames Center Directors page at https://history.arc.nasa.gov/centerdirs.htm; Bugos 2010 p. 19. Mark’s tenure as Ames Director: 20 February 1969 to 15 August 1977. He died 18 December 2021 in Austin Texas.

11. Hans Mark, Edward Teller, Dean Chapman, Loren Bright: Bugos 2010 pp. 215-216, citing Mark’s recollections and an interview with Jack Boyd.

12. Mel Pirtle and the Institute for Advanced Computation: Hord 1982 p. 11; Burroughs ILLIAC IV Systems Characteristics and Programming Manual, NASA CR-2159, February 1973, p. ii (signed “Mel W. Pirtle, Director, Institute for Advanced Computation”). DARPA-NASA interagency agreement formally expired June 1979.

13. Bugos 2010 p. 216.

14. Hord 1982 p. 123.

15. ILLIAC IV architectural specifications: 64 PEs, 1+15+48 floating-point format, 16 MHz clock, 2048-word PEM, chordal-ring connectivity ±1/±8. Source: Bouknight, W. J., Denenberg, S. A., McIntyre, D. E., Randall, J. M., Sameh, A. H., and Slotnick, D. L. “The Illiac IV system,” Proceedings of the IEEE 60(4):369-388, April 1972, DOI 10.1109/PROC.1972.8647. The canonical primary architectural source.

16. Physical dimensions, cooling (281 tons of forced-air AC), power consumption (1.2 MW), floor space (11 700 sq ft computer bay): Hord 1982 pp. 49-55.

17. Hord 1982 p. 13 (quoting Falk 1976).

18. NORSAR background: https://www.norsar.no/about-us/history; Wikipedia “NORSAR.” Established 1968 as Norwegian-American collaboration funded jointly by ARPA and the Norwegian government for nuclear-test-detection treaty verification.

19. TRES / I4TRES: Hord 1982 chapter V.E.1, pp. 256 ff and chapter V.A.4 p. 130. Principal author: A. Stewart Hopkins. San Onofre seismic hazard run for U.S. Nuclear Regulatory Commission.

20. FKCOMB: Hord 1982 chapter V.E.2, pp. 265 ff. Citing Kerr, Wagenbreth, Smart, Der 1974 SDAC-TR-74-16, Teledyne-Geotech Report to DARPA, October 1974.

21. Hord 1982 p. 4.

22. Hord 1982 p. 123.

23. GISS GCM port: Hord 1982 p. 123. The GISS GCM lineage from UCLA Mintz-Arakawa via Yale Mintz’s move East: see our post on Arakawa and Mintz at UCLA and the GFDL/NASA atmospheric-modelling history at https://www.gfdl.noaa.gov/brief-history-of-global-atmospheric-modeling-at-gfdl/.

24. Rogallo’s CFD language: Bugos 2010 p. 216. ACM DL 800026.808404 “CFD – A FORTRAN-like language for the ILLIAC IV.” The five primitive types (CU INTEGER, CU REAL, CU LOGICAL, PE REAL, PE INTEGER) statically encoded the home of each variable.

25. Rogallo, R. S. “Numerical experiments in homogeneous turbulence,” NASA Technical Memorandum TM-81315, 1981. NASA NTRS at https://ntrs.nasa.gov/citations/19810022965. Extends Orszag and Patterson 1972 spectral method to homogeneous turbulence under uniform deformation/rotation. 128 by 64 by 64 grid; truncated triple Fourier series; fourth-order Runge-Kutta time scheme.

26. NAS facility: Bugos 2010 pp. 217-219. Mark’s “sold it” handwritten OMB note documented in his files.

27. Daniel L. Slotnick biographical: died 25 October 1985 in Baltimore Maryland of an apparent heart attack while jogging, age 54. IEEE W. Wallace McDowell Award 1983 (not the Computer Pioneer Award and not the Eckert-Mauchly Award; the 1985 Eckert-Mauchly went to John Cocke). Source: IEEE Computer Society W. Wallace McDowell Award archive; Prabook biography; Wikipedia “Daniel Slotnick.”