In July 1981 a Control Data Corporation truck pulled up at the Meteorological Office building on London Road in Bracknell, Berkshire, twenty-five miles west of London, and unloaded a two-cabinet CDC Cyber 205 vector supercomputer – the first Cyber 205 ever delivered to a customer. The machine arrived not as a Cyber 205 but as a Cyber 203E, the immediate predecessor; CDC’s field engineers would upgrade it on the Met Office floor over the following months by replacing the vector processor while leaving the scalar unit and the cabinets in place. A library record in the Met Office archive at Exeter, catalogue number 45167, preserves the contemporary slide of the machine in its new home: “Cyber 205, Computer Laboratory Bracknell, July 1981.” The slide is Crown Copyright, the photographer unrecorded, the date a fixed point.

The two cabinets stood approximately seven feet tall each. Inside them, eight megabytes of bipolar static memory (one megaword of 64-bit words) talked to a 512-bit-wide bus at 80 nanosecond access time, feeding two vector pipelines clocked at 20 nanoseconds (50 megahertz). The pipelines were memory-to-memory: vectors were streamed directly from main memory through deep functional units and back to main memory, with no register file in between. A vector add instruction took a 16-bit length field and a 48-bit base address as its operand descriptor; the maximum vector length per instruction was 65535 elements; the peak rate when the pipelines were full was 200 megaflops at 64-bit precision and 400 megaflops at 32-bit. The pipeline cooling was by freon, circulating around copper pipes against which the ECL gate-array chips were pressure-clipped from above. The whole machine drew about a megawatt and rejected its heat into the building’s chilled-water loop. Beside it, an IBM 3081 mainframe acted as front end, receiving observations off the global telecommunications system, formatting jobs, and shipping them across to the Cyber over a network of seven CDC 819 disk drives. Twenty Met Office staff shared four IBM 3270 terminals. Output volume was high enough that most products went to microfiche.

This was the machine on which the 15-level global numerical weather prediction model of the United Kingdom Met Office would run twice a day for the next nine years. A 24-hour global forecast took approximately four minutes of wall time. The 10-level finite-difference model that had run on the IBM 360/195 since 1972, when transferred to the Cyber 205, ran roughly a hundred times faster. In April 1982 the Royal Navy’s task force sailed for the South Atlantic; the same global model that the Cyber 205 had just enabled was, within weeks, providing the meteorological products that the Falklands War carrier and aviation operations consumed. By 1985 the same machine was running a 15 kilometre convection-permitting mesoscale model over the United Kingdom – the first non-hydrostatic, convection-permitting operational model in the world.

The Cyber 205 was, by any straightforward technical measure, one of the most ambitious supercomputers of its decade. It was also the last successful commercial product of Control Data Corporation’s supercomputer line. The architectural bet on which it rested – a memory-to-memory vector pipeline with a 51-clock-cycle startup – would be defeated within five years by Cray Research’s register-to-register Cray X-MP at customers across the United States, the United Kingdom, and continental Europe; its 1987 successor, the ETA-10, would be killed at customer sites by an operating system that crashed once every twenty-five hours; and its parent company, having spent approximately 750 million dollars on the ETA Systems spinoff, would shut down ETA on the afternoon of 17 April 1989 and exit the supercomputer business entirely. Of the roughly thirty-five Cyber 205s built, the one that arrived in Bracknell on a July day in 1981 was the first; one of the last surviving examples, decommissioned at Florida State University in October 1989, was sold to Purdue University in March 1988 for one dollar.

This post is the story of that arc – from the architectural bet to its operational triumph to its collapse – told principally through the four customer sites in the North Atlantic NWP community that bet on the CDC line: the Met Office at Bracknell, Florida State University at Tallahassee, the Fleet Numerical Oceanography Center at Monterey, and Purdue University in Indiana. It is also the story of the architect, Neil Robert Lincoln, who sketched the Cyber 205 mentally during a canoe trip in the Boundary Waters of Minnesota and then watched his second-generation machine die at customer sites for reasons unrelated to its silicon. The arc from STAR-100 through Cyber 203 to Cyber 205 to ETA-10 is the single largest commercial failure in the history of the supercomputer industry; it is also one of the more instructive failures, because the technical envelope was correct, the engineering organisation was competent, the customer commitments were real, and the architectural choice was internally coherent. What failed was the matching of architecture to workload, with a long compiler-feedback lag, in a small market with a single ruthless competitor.

Where this post fits

The previous post in this series covered the long quiet career of Edward Norton Lorenz at MIT and the way his 1963 chaos paper and 1969 predictability paper eventually entered the operational forecasting bloodstream of every major weather centre on Earth in the form of the 1992 ensemble prediction systems at ECMWF and at NMC. The connection back to the present post is not abstract. Tim Palmer, who would be the principal architect of the ECMWF Ensemble Prediction System after his 1986 move from the Met Office to Reading, spent his first decade as a meteorologist working at the Met Office building in Bracknell on the same operational corridor as the Cyber 205. Palmer’s stratospheric-dynamics work in the late 1970s and early 1980s, on Rossby wave breaking and the quasi-biennial oscillation, was the kind of fundamental atmospheric-dynamics research that the new vector supercomputer in the basement made tractable.

The other immediate cross-link is to the previous post on Frederick Shuman’s NMC, the National Meteorological Center at Suitland, Maryland. NMC was the second operational weather centre to choose the Cyber 205 – their procurement decision was settled in 1981-1982 under Shuman’s successor William Bonner, and the machine arrived in summer 1983 – and the story of how the Cyber 205 ran the LFM regional model, the Global Spectral Model upgrade from R30L12 to R40L12, the Movable Fine Mesh hurricane model, and the new Medium-Range Forecast configuration is told in detail there. This post will not retread that ground. What this post adds is the wider context: the architectural origins of the machine, the way it lost the commercial competition with Cray, the way the FSU site documented its own life and death in painstaking statistical detail, the way the Met Office’s choice in 1981 cost it Tim Palmer to ECMWF in 1986, and the way the entire CDC supercomputer programme ended on a Monday afternoon in April 1989 with 750 million dollars of writeoff.

The earlier post on ECMWF and the first Cray in Europe covered the parallel European decision – to use the Cyber 175 and Cyber 835 only as front-end machines and to run the operational forecast model on a Cray-1A from October 1978 onwards. ECMWF stayed on Cray throughout the 1980s, against the geographic and political weight that might have pushed it toward the Bracknell sister centre’s CDC choice. That negative case is half of the story of why CDC lost.

The post on the thirty-eight years of spectral modelling covered the spectral transform method itself, including Clive Temperton’s mixed-radix FFT, which Temperton had developed at the Met Office before his move to ECMWF and which the Bracknell Cyber 205 ran in hand-tuned form in the global model’s transform step. The spectral transform was one of the workloads on which the Cyber 205’s long-vector strength most clearly showed; it was also one of the workloads on which the Cray-1’s short-vector chaining most clearly showed an answering strength. The architectural war between memory-to-memory and register-to-register vector was, in operational meteorology, fought largely on spectral transforms.

1. The architect, the canoe, and the architectural bet

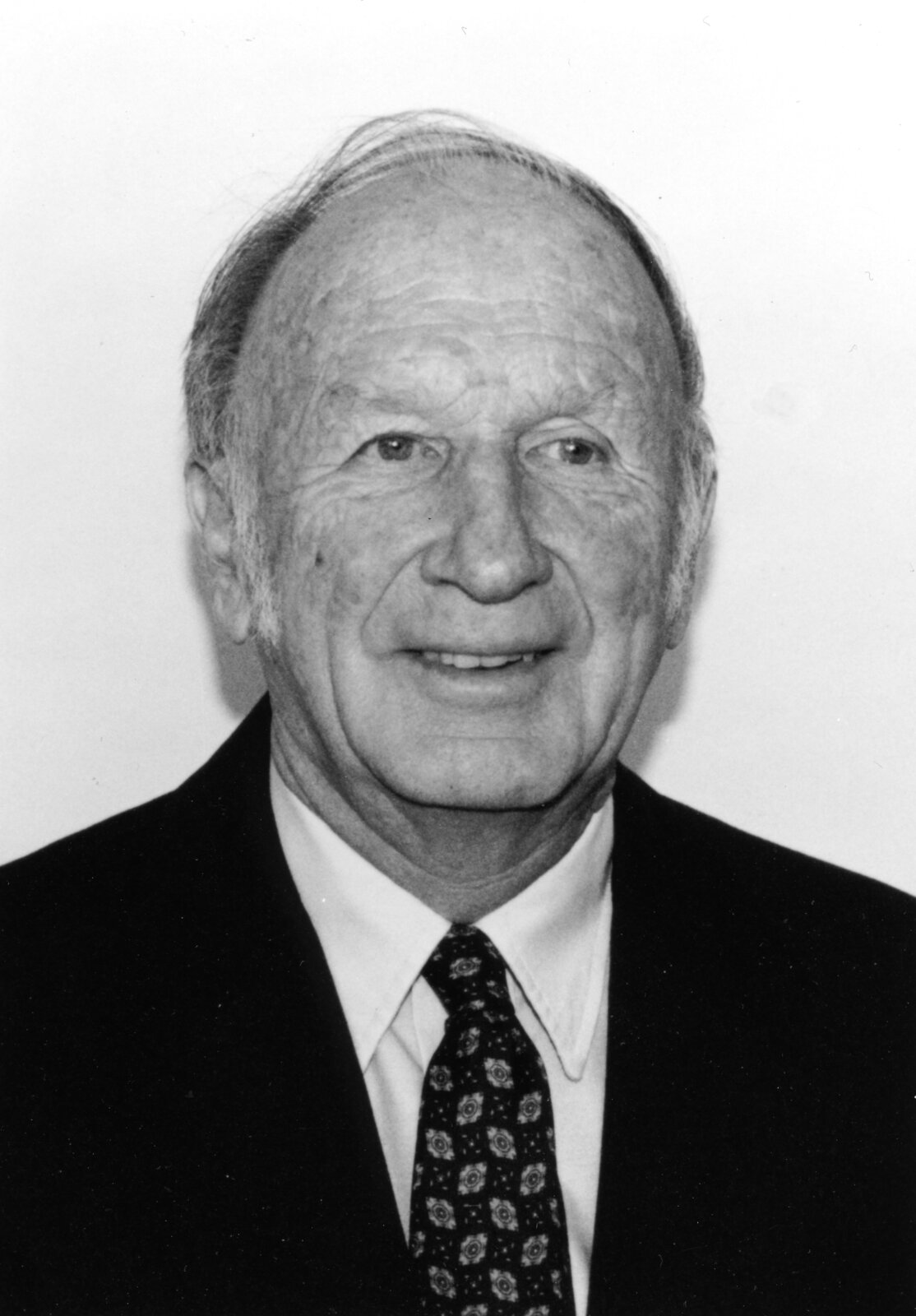

The Cyber 205 was the third commercial machine in a line that ran from the CDC STAR-100 (announced 1973, first delivery 1974) through the CDC Cyber 203 (late 1970s) to the Cyber 205 (announced 1980, first delivery July 1981). All three machines were built around the same architectural commitment: memory-to-memory vector pipelines, no vector registers, very long maximum vector length, and a deep pipeline whose startup cost was paid only once per instruction regardless of length. The architect of the line was Neil Robert Lincoln, who had been born in 1937, had taken his electrical engineering degree at the University of California at Berkeley in 1961, had served briefly in the United States Marine Corps and at United Research Services, and had joined CDC in Minnesota in 1967.

Lincoln took over the STAR-100 line from Jim Thornton, the chief designer of the original STAR. Thornton had earlier been the chief engineer on the CDC 6600 under Seymour Cray; he was, by any account, one of the most senior architects in the CDC engineering organisation. The 1972 departure of Cray himself from CDC, over the cancellation of the 8600 project by William C. Norris (the founder and CEO of CDC, who had decided that the company could not afford to pursue both the 8600 and the STAR-100 in parallel during a difficult financial period), had left the supercomputer line in Lincoln’s and Thornton’s hands. Cray’s resignation, and the founding of Cray Research that immediately followed it, is the institutional fact behind everything that came next. Every Cyber 205 sold over the rest of the decade would be sold against a competitor founded by the former chief engineer of the company building it.

Lincoln himself is one of the more colourful figures in the era’s engineering culture. He held nine United States hardware patents. He was an IEEE Distinguished Lecturer and an ACM National Lecturer. He served on the NSF Advisory Committee on Science and Technology Centers. He famously believed that wearing a necktie “would block the blood flow to the brain and dampen or cut off creativity.” And, as the standard CDC retelling has it, he sketched out the major architectural components of the Cyber 205 in his head during a canoe trip in the Boundary Waters of northern Minnesota, returning to the office to set them down on paper. After CDC closed ETA Systems in 1989, he founded the wind-resource consultancy SSESCO – which eventually became WindLogics – and worked on wind-farm siting until his death in 2007. His own definition of a supercomputer is the one most worth remembering for this post: a machine that “pushes the technology envelope well beyond today’s.” Whether that definition is operationally useful or operationally fatal depends on what is on the other side of the envelope. In the Cyber 205’s case it was a working compiler and a maintainable operating system, and the envelope ate them both.

The architectural bet itself was coherent. A memory-to-memory machine has, in principle, three advantages over a register-to-register machine of equal silicon. First, vector length is effectively unbounded: a 65535-element instruction can run end-to-end without a strip-mining loop, so the iteration overhead that a register machine pays at every 64-element boundary is paid only once at the start of the operation. Second, the hardware is simpler: there is no vector register file with multiple read/write ports per cycle, and the memory bandwidth is invested directly in the pipelines rather than in a register-to-memory copy stage. Third, the maximum theoretical throughput, for any given chip technology, is higher: the silicon that a Cray-1 spends on its eight 64-element vector registers can, on a CDC machine, be spent on additional pipeline stages or on wider memory paths.
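The strip-mining point can be made concrete with a little counting. The sketch below is illustrative only: the function name is hypothetical, and it simply counts how many vector instructions (and therefore how many startup payments) are needed to cover a loop of a given length, using the 64-element register length and the 65535-element instruction limit quoted above. It says nothing about how long each startup takes.

```python
CRAY_REGISTER_LENGTH = 64        # elements per Cray-1 vector register (from the text)
CYBER_MAX_VECTOR_LENGTH = 65535  # elements per Cyber 205 instruction (16-bit length field)

def instructions_needed(n_elements: int, max_length: int) -> int:
    """Vector instructions (one startup each) needed to cover n_elements.

    Hypothetical helper: a ceiling division, i.e. the number of strips
    a strip-mining loop would issue.
    """
    if n_elements <= 0:
        return 0
    return (n_elements + max_length - 1) // max_length

# Compare the two machines on a few loop lengths.
for n in (50, 1_000, 65_535):
    cray = instructions_needed(n, CRAY_REGISTER_LENGTH)
    cyber = instructions_needed(n, CYBER_MAX_VECTOR_LENGTH)
    print(f"n={n:>6}: register machine {cray:>5} strips, memory-to-memory {cyber} instruction(s)")
```

A thousand-element loop costs the register machine sixteen strips against a single memory-to-memory instruction; whether that is a net win depends on the per-instruction startup cost.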

The bet’s failure mode was equally specific. The deep pipeline had a long startup. A vector add instruction on a 2-pipe Cyber 205 took approximately 51 clock cycles – about 1.02 microseconds – before the first result emerged from the back end of the pipeline. A Cray-1 vector add, by contrast, took roughly 5 cycles of register-to-register chaining startup. The metric that captures the consequence is N1/2, introduced by Roger Hockney and Charles Jesshope in the textbook Parallel Computers 2 and now standard: the vector length at which the machine reaches half of its asymptotic peak rate. On the Cyber 205 the N1/2 was roughly 60 to 80 elements for typical vector operations. On the Cray-1 the N1/2 was roughly 10 to 15 elements. Both machines had the same asymptotic peak in the long-vector limit; but in the short-vector regime where most real Fortran lived, the Cyber 205 paid its full startup cost on every loop while the Cray-1 reached half-peak by the tenth element of the first inner iteration. A code with average vector length under fifty would run faster even on a Cray-1 with half the Cyber 205’s peak rate. A code with average vector length over a thousand would run faster on the Cyber 205. The actual distribution of vector lengths in operational atmospheric models sat awkwardly across this boundary, with spectral codes leaning toward Cray and grid-point codes toward CDC.
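The N1/2 argument can be checked with the usual two-parameter timing model, in which the sustained rate on a vector of length n is r_inf * n / (n + n_half). The sketch below is an assumption-laden illustration, not a benchmark: the function names are hypothetical, the n_half values are picked from the middle of the ranges quoted above, and the "half-peak Cray" is the hypothetical machine of the paragraph rather than a measured Cray-1. The model ignores strip-mining, memory bank conflicts, and scalar overhead.

```python
def hockney_rate(n: float, r_inf: float, n_half: float) -> float:
    """Sustained rate (same units as r_inf) on vectors of length n."""
    return r_inf * n / (n + n_half)

def crossover_length(hi_peak: dict, lo_peak: dict) -> float:
    """Vector length at which the higher-peak machine overtakes the other.

    Solves r_a*n/(n+h_a) = r_b*n/(n+h_b) for n, which requires r_a > r_b.
    """
    r_a, h_a = hi_peak["r_inf"], hi_peak["n_half"]
    r_b, h_b = lo_peak["r_inf"], lo_peak["n_half"]
    return (r_b * h_a - r_a * h_b) / (r_a - r_b)

# Startup latency quoted in the text: 51 cycles at a 20 ns clock.
startup_us = 51 * 20 / 1000  # about 1.02 microseconds

# Assumed parameters (MFLOPS, elements); see the caveats in the lead-in.
CYBER_205 = {"r_inf": 200.0, "n_half": 70.0}
HALF_PEAK_CRAY = {"r_inf": 100.0, "n_half": 12.0}

print(f"startup: {startup_us:.2f} us")
print(f"crossover at n = {crossover_length(CYBER_205, HALF_PEAK_CRAY):.0f}")
for n in (10, 46, 1000):
    print(n, round(hockney_rate(n, **CYBER_205), 1), round(hockney_rate(n, **HALF_PEAK_CRAY), 1))
```

With these assumed numbers the crossover falls at 46 elements, consistent with the "under fifty" figure. Note that when both machines are given the same r_inf, this model favours the smaller n_half at every length, so it captures only the short-vector half of the argument.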

The STAR-100 had set the template. It had a 40-nanosecond clock, two 64-bit vector pipelines that could be split into four 32-bit pipelines, a 100 MFLOPS peak rate, 8 megabytes of core memory at 1.28 microsecond cycle, a 512-bit-wide memory bus, and a 100-element break-even point against scalar code. Three customers bought it: Lawrence Livermore took two (one in 1974, one shortly after), NASA Langley took one. The STAR’s failure on operational workloads was the standard one: its peak number was meaningless because the scalar unit ran at about 1 MIPS – slower than the CDC 7600 of Seymour Cray’s that the STAR was supposed to replace – and any non-trivial scalar fraction in a Fortran program crushed its effective rate. The Cray-1, announced in 1975 and shipping in 1976, beat the STAR on every real benchmark the meteorological and physics communities tried, despite having a lower nominal peak. By the time the third STAR shipped, CDC’s flagship supercomputer programme had been comprehensively overtaken.

Lincoln’s response, and the response of CDC’s senior management, was not to abandon memory-to-memory vector. It was to redesign. The bridging product, the Cyber 203, shipped in roughly two customer copies in the late 1970s – one of them to the Fleet Numerical Oceanography Center at Monterey – with a redesigned scalar unit and a loosely coupled I/O design. The 203 was an interim machine. The full second-generation redesign, with semiconductor memory replacing core, with ECL gate-array logic replacing the older TTL, with a faster clock, with a high-performance scalar unit running at 50 MFLOPS rather than 1 MIPS, with freon cooling, with a maximum memory of 16 megawords (128 megabytes), and with linked multiply-add triads that doubled the rated peak per pipeline, became the Cyber 205. The first one shipped to Bracknell in July 1981.
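The headline peak figures follow from simple arithmetic on the clock, the pipe count, and the linked triad. The sketch below is a back-of-envelope reconstruction under stated assumptions, not CDC's own rating method: it assumes one 64-bit result per pipe per clock, a doubling for linked multiply-add triads, and a further doubling in 32-bit mode. The four-pipe case is an assumption used to reproduce the 400 and 800 MFLOPS brochure figures; the function names are hypothetical.

```python
def peak_mflops(pipes: int, clock_ns: float, linked_triad: bool = True, bits: int = 64) -> float:
    """Rated peak in MFLOPS under the assumptions in the lead-in."""
    clock_mhz = 1000.0 / clock_ns   # 20 ns -> 50 MHz
    per_pipe = clock_mhz            # assumed: one 64-bit result per pipe per clock
    if linked_triad:
        per_pipe *= 2               # multiply and add counted as two flops
    if bits == 32:
        per_pipe *= 2               # assumed: 32-bit mode doubles throughput
    return pipes * per_pipe

def megawords_to_megabytes(megawords: int, word_bits: int = 64) -> int:
    """Memory size in megabytes for a given number of 64-bit megawords."""
    return megawords * word_bits // 8

print(peak_mflops(2, 20), peak_mflops(2, 20, bits=32))   # two-pipe machine
print(peak_mflops(4, 20), peak_mflops(4, 20, bits=32))   # assumed four-pipe machine
print(megawords_to_megabytes(1), megawords_to_megabytes(16))
```

Under these assumptions the two-pipe machine comes out at 200 and 400 MFLOPS, and the memory sizes at 8 and 128 megabytes, matching the figures quoted in this post.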

There is one more architectural detail worth pausing on, because it bears directly on the operating-software story that would later kill the line. The Cyber 205 ran an operating system called VSOS (Virtual Storage Operating System) and a Fortran compiler called FTN200. FTN200 had two persistent defects relative to the Cray FORTRAN Translator (CFT). The first was that it put all vectors in memory – there was nowhere else to put them, because there were no vector registers – even when static analysis could prove that a vector held only a handful of elements. The second was that it forced all COMMON variables and all subroutine arguments to memory, scalar or vector. A subroutine call on the Cyber 205 was therefore an unavoidable memory event; the Cray CFT, by contrast, could keep scalars in registers across calls and could keep short vectors in vector registers. Both defects compounded the startup-cost penalty: short loops paid the startup cost, and short scalar codes paid the call-overhead memory cost. Hand-coded assembler, using CDC’s vector-intrinsic library through Fortran-callable Q8 subroutine names (Q8VADD, Q8SDOT, Q8VCMUL, and so on), was the only way to get peak performance out of the machine on any code more sophisticated than a saxpy. Met Office staff in 1983 routinely wrote Fortran routines that were 90 percent Q8 calls; the global model’s FFT, R. Bell’s CSIRO secondment account records, was “around 1300 lines, nearly all Q8 calls.” Across the lifetime of the architecture, no version of the compiler would close this gap to CFT. CDC’s own brochures admitted that the 400 and 800 MFLOPS peaks were “rarely seen in practice other than by handcrafted assembly language.”

2. The decision in Bracknell, 1979-1981

The choice that put the Cyber 205 in front of the IBM 3081 at Bracknell, rather than putting a Cray-1 there, is poorly documented in the open record. The Met Office’s own retrospective, in the Archives of IT interview series, gives the operational rationale: “It had a speed advantage over the Cray because it could do floating point calculations in 32-bit precision, rather than the Cray’s 64 bit.” The 32-bit operation on the 205 doubled the effective flop rate – 400 MFLOPS peak on a two-pipe machine at 32-bit, against 200 MFLOPS at 64-bit – and was, in the operational meteorologist’s judgement, “adequate for most well-conditioned meteorological calculations.” Forecasting Research staff at Bracknell, working under the leadership of section heads who included Alan Dickinson (later Director of Science and Technology at the Met Office), routinely ran the global model in 32-bit precision in production. The Cray-1 had no half-precision mode; the X-MP that arrived later did, but in 1980 the Cray side of the procurement choice was a single-precision 64-bit machine running everything at twice the bit width whether the meteorology needed it or not.

Beyond the precision argument, the procurement record is thin. The Met Office published neither the technical evaluation that compared the Cyber 205 against the Cray-1 nor the financial terms of either offer. The Director-General of the Met Office at the moment of the procurement decision was Sir John Mason, whose tenure ran from 1965 to 1983; the procurement was likely shepherded through under his authority, with the operational handover to his successor Sir John Houghton occurring after the machine was on the floor. Houghton would be Director-General from 1983 to 1991, through most of the operational lifetime of the Cyber 205. Houghton’s own scientific reputation was in atmospheric radiation – his 1977 textbook The Physics of Atmospheres was a standard graduate text – and his subsequent leadership of the Intergovernmental Panel on Climate Change First Assessment Report in 1990 would, more than the Cyber 205 procurement, define his historical profile. But his eight years as Director-General fell almost entirely within the operational life of the Cyber 205, and any history of the Met Office’s NWP work in the 1980s is in part a history of his administration.

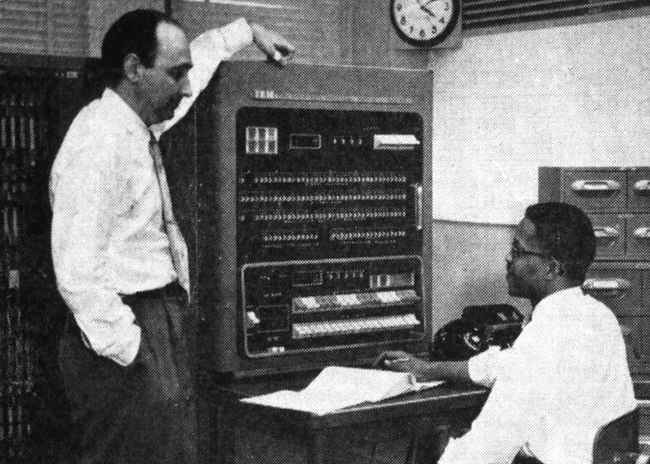

The pre-205 machine at Bracknell, COSMOS – an IBM System/360 Model 195 that had been installed in 1971 in the newly-built Richardson Wing – ran the operational forecast from 1972 until 1982. Its headline numbers were modest: about 4 MFLOPS peak, 250 kilowords of memory, the standard IBM 360 architecture pushed into its most pipelined and most expensive variant. The 360/195 ran an operational 10-level Bushby-Timpson model on a latitude-longitude finite-difference grid, hydrostatic primitive-equation, with parametrisations of radiation and convection that had been refined incrementally since the late 1960s. The 360/195 was IBM’s last gasp of the 360 line; the same model number was operating at NMC Suitland until 1983, where it ran in parallel with the new Cyber 205 during the transition year. By 1979, when the procurement decision for the next Bracknell machine had to be settled, the Met Office’s operational requirement was for the order-of-magnitude jump in throughput that vector hardware promised: a global rather than hemispheric forecast, more vertical levels, finer horizontal resolution, and a model that could be run on twice-daily 6-hour assimilation cycles rather than the twice-daily 12-hour cycle the 360/195 could just manage.

The Cyber 205 arrived as a Cyber 203E in July 1981 and was upgraded to a 205 over the next several months. Operational transfer of the new 15-level model from the 360/195 to the 205 happened in 1982, after a year of parallel running. The 360/195 was retired in 1982. The new 15-level model – a development of the 10-level Bushby-Timpson dynamical core extended with parametrisations derived from the General Circulation Model that the Met Office had developed for the First GARP Global Experiment (FGGE) campaign of 1979 – ran on a latitude-longitude grid at roughly 150 kilometre horizontal resolution and 15 levels in the vertical, with approximately one third of a million grid points. A 24-hour global forecast ran in four minutes of Cyber 205 wall time. The model was run twice daily with a T+0320 (3 hours 20 minutes) cutoff after observation time, with products on the desk by T+0430. Forecast products went to T+36 hours for aviation customers and to T+6 days for the general public. The backup arrangement against the 205 going down was the previous 12-hour run’s forecast extended a further 12 hours, with a secondary fallback to NMC Washington’s product set when both Bracknell runs failed.
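The "one third of a million grid points" figure can be sanity-checked from the resolution and level count alone. The sketch below is a rough order-of-magnitude estimate under a simplifying assumption: it tiles the sphere with uniform 150 km square cells, whereas the real latitude-longitude grid has converging meridians and its exact dimensions are not given here. The function name and the Earth radius constant are illustrative choices.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean radius, assumed for this estimate

def estimated_grid_points(spacing_km: float, levels: int) -> float:
    """Approximate 3-D grid points for uniform square cells on a sphere."""
    surface_km2 = 4.0 * math.pi * EARTH_RADIUS_KM ** 2
    columns = surface_km2 / spacing_km ** 2
    return columns * levels

points = estimated_grid_points(150.0, 15)
print(f"about {points:,.0f} grid points")
```

The estimate lands near 340,000 points, in line with the figure quoted for the 15-level model.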

That global model’s first operational pressure came in April-June 1982. The Falklands War sent a Royal Navy task force to the South Atlantic, an oceanic theatre where the Met Office had previously provided only short-range and hemispheric forecasts. The new global capability on the Cyber 205, only a few months operational, was rapidly extended to provide military meteorological support for the carrier and aviation operations of the task force. The Met Office’s own retrospective notes this as the practical pressure that pushed global capability from a developmental aspiration into an operational requirement.

The 1982 cutover from 10-level to 15-level was visible in the verification scores. The Met Office’s standard diagnostic, the 12-month-mean T+48 root-mean-square error of the 500-hPa height field, had been declining slowly from about 80 metres in 1966-1967 to about 50 metres by 1981-1982 under the old 10-level model. The 1982-1983 cutover produced a discrete drop of about 10 metres – clearly distinguishable from the underlying trend. The 15-level model’s T+72 error rapidly became approximately equal to the 10-level model’s T+48 error of five years earlier: a full day of forecast skill recovered by the architectural step from finite-difference 10-level to finite-difference 15-level on a hundredfold faster machine. T+24 vector wind errors at 200 hPa over the Europe-Atlantic-East America sector fell from the high teens of knots in 1978 to around 14 knots by 1984. This is the verification record that justified, after the fact, the 1981 procurement decision.

The third operational model that ran on the Cyber 205 from 1985 onward is the one for which the Met Office, with some justification, claims a global first. The mesoscale model went operational in 1985 at a 15 kilometre horizontal resolution over the United Kingdom only, with more sophisticated parametrisations of cloud and turbulence than the global or regional models, and with what was described in the Met Office literature as an Interactive Mesoscale Initialisation (IMI) scheme that downscaled the regional model’s analysis and then added detail from radar, satellite, and surface observations. The mesoscale grid was fine enough that the model resolved the precipitating convection it was trying to forecast, rather than parametrising it away; the model was non-hydrostatic in spirit if not always in formulation. Colin Flood’s 1988 NASA-archived talk on the Bracknell operations gave a representative case: the Brize Norton fog forecast, midnight to 0600 local, in which the mesoscale captured the transition from radiation fog to rain at approximately the right hour. Running a convection-permitting model on a 1-megaword two-pipe Cyber 205 in 1985, even over only the British Isles, was a serious computational achievement. The lineage runs directly forward from the 1985 mesoscale model to the modern UKV (UK Variable-resolution model) that the Met Office runs in 2026 over the same domain at 1.5 kilometre resolution.

The other operational model that ran on the Cyber 205 was the fine-mesh regional system covering Europe, the Atlantic, and just into the United States. It used the same dynamical core as the global model on a finer grid covering a limited area, and its output was the principal Bracknell contribution to the World Area Forecast products that the three centres of the World Area Forecast System – Bracknell, Washington, and a European centre that varied between Paris and Frankfurt over the period – produced for international aviation.

3. The people who wrote the code

The Met Office Forecasting Research Branch in the early 1980s was a small CDC-vector-programming shop, working in a building whose air conditioning had been designed for IBM 360 series machines and which had to be retrofitted for the Cyber 205’s freon-cooled cabinets and IBM 3081 front end. The branch head through the operational lifetime of the Cyber 205 was Mike Cullen, now Professor Mike Cullen OBE, who was at the time working on what would become the Unified Model integration scheme – the semi-Lagrangian semi-implicit dynamics that, after 1987-1991 development on the Cyber 205 and its successor, would underpin the operational Unified Model from June 1991 onward. Alan Dickinson was a section head under Cullen during the first half of the Cyber 205 period and would later become Director of Science and Technology.

Clive Temperton – the spectral-transform specialist who wrote the mixed-radix FFT algorithms that became foundational across European NWP – had been on the Bracknell staff during the late 1970s but left for ECMWF before the Cyber 205 arrived. His 1983 paper on the mixed-radix FFT, published from ECMWF rather than from the Met Office, would underpin the spectral transforms in the first ECMWF spectral model that same year. The move to Reading was the first of several senior departures from Bracknell to ECMWF over the 1980s; the Met Office’s 1981 commitment to the CDC architectural line arguably made some of these departures more attractive by separating the operational software environments of the two centres.

The most significant such departure, for the wider narrative arc that this post sits in, was Tim Palmer’s. Palmer joined the Met Office in the late 1970s, having taken a DPhil in general relativity at Oxford under Dennis Sciama, and worked on stratospheric dynamics – specifically on Rossby wave breaking, the quasi-biennial oscillation, and sudden stratospheric warmings – through the period when the Cyber 205 was the operational machine. In 1986 he moved to ECMWF to lead the new Predictability and Diagnostics Division. That move would, six years later, produce the singular-vector-based ECMWF Ensemble Prediction System that the previous post in this series described in detail. Whether the architectural choice that put the Cyber 205 rather than a Cray X-MP into Bracknell influenced Palmer’s decision to move is, again, undocumented; what is clear is that ECMWF in 1986 had a Cray X-MP/48 of 1985 vintage running on UNICOS, and the operational research environment around it was, by comparison with the FTN200/Q8/VSOS environment at Bracknell, much closer to what an academic relativist turned operational meteorologist would have wanted to work in.

The man who most extensively documented the Bracknell environment for outside observers was a CSIRO secondee, R. Bell, who visited the Met Office from 15 November 1983 to 16 May 1984 and whose memoir of the six-month visit – preserved on the CSIROpedia website as “Chapter 3b” of his computing-history reminiscences – is one of the most detailed first-hand accounts of any operational Cyber 205 site. Bell shared an office with Rex Roskilly, Sue Ballard, and Steve Forman, all Forecasting Research staff. His own documented contribution to the operational system was a small but consequential one: he replaced the global model’s Fourier chopping (a truncation scheme used near the poles to keep the effective resolution roughly isotropic where the meridians converge) with Fourier damping, which sped up the operational model by approximately 10 percent and stopped the recurrent September “blow-up” of the operational forecast that had been caused by spectral aliasing during the equinoctial period of strongest polar jets. The change is the kind of small algorithmic refinement, invisible from outside the operational shop, that decides whether a model is up for the morning forecast or being rerun by hand.

4. The American customers, 1982-1985

The Cyber 205 in Bracknell was the first; the second, in the United States, was at Purdue University in January 1983; the third, at the National Meteorological Center at Suitland, in summer 1983. Beyond those three the customer base for the 205 across its production life was small – estimates put total deliveries at approximately 35 machines worldwide, against the approximately 80 Cray-1s and the several hundred Cray X-MPs that Cray Research would sell over the same period. The 205 was a niche product through its entire life. The atmospheric and oceanographic NWP community in the United States contained five customer sites of relevance to this post: NMC Suitland (the subject of its own dedicated post), FNOC Monterey, FSU Tallahassee, Purdue West Lafayette, and a brief evaluation engagement at the National Aeronautics and Space Administration that did not produce a permanent installation.

FNOC and the Navy’s NOGAPS

The Fleet Numerical Oceanography Center at Monterey, California, the United States Navy’s centralised numerical forecasting facility, traced its lineage to 1958 when the Navy Numerical Weather Problems (NANWEP) group was formed at Suitland, Maryland, alongside the Joint Numerical Weather Prediction Unit that this series has covered in earlier posts. NANWEP moved to the Naval Postgraduate School in Monterey in 1959, was reorganised as the Fleet Numerical Weather Facility (FNWF) in 1961, moved out of NPS into its own standalone building in 1974, and was renamed Fleet Numerical Oceanography Center (FNOC) in 1979 to absorb the ocean modelling mission alongside atmospheric forecasting. On 1 October 1993 it would be renamed again to Fleet Numerical Meteorology and Oceanography Center (FNMOC), the title it retains in 2026.

FNOC’s mission has been continuous since 1958: produce centralised weather and ocean forecasts in support of every Navy operation worldwide – carrier strike groups, submarine optimal-track routing, anti-submarine-warfare acoustics products, amphibious operations, search-and-rescue, polar-ice operations, helicopter and aircraft hazard forecasts, ballistic re-entry weather, missile flight conditions. The Navy is the only United States service that runs its own global atmospheric model rather than consuming NMC’s or AFGWC’s; this dates from the era when meteorological data flow over military communications was unreliable and the Navy wanted operational independence from civilian providers.

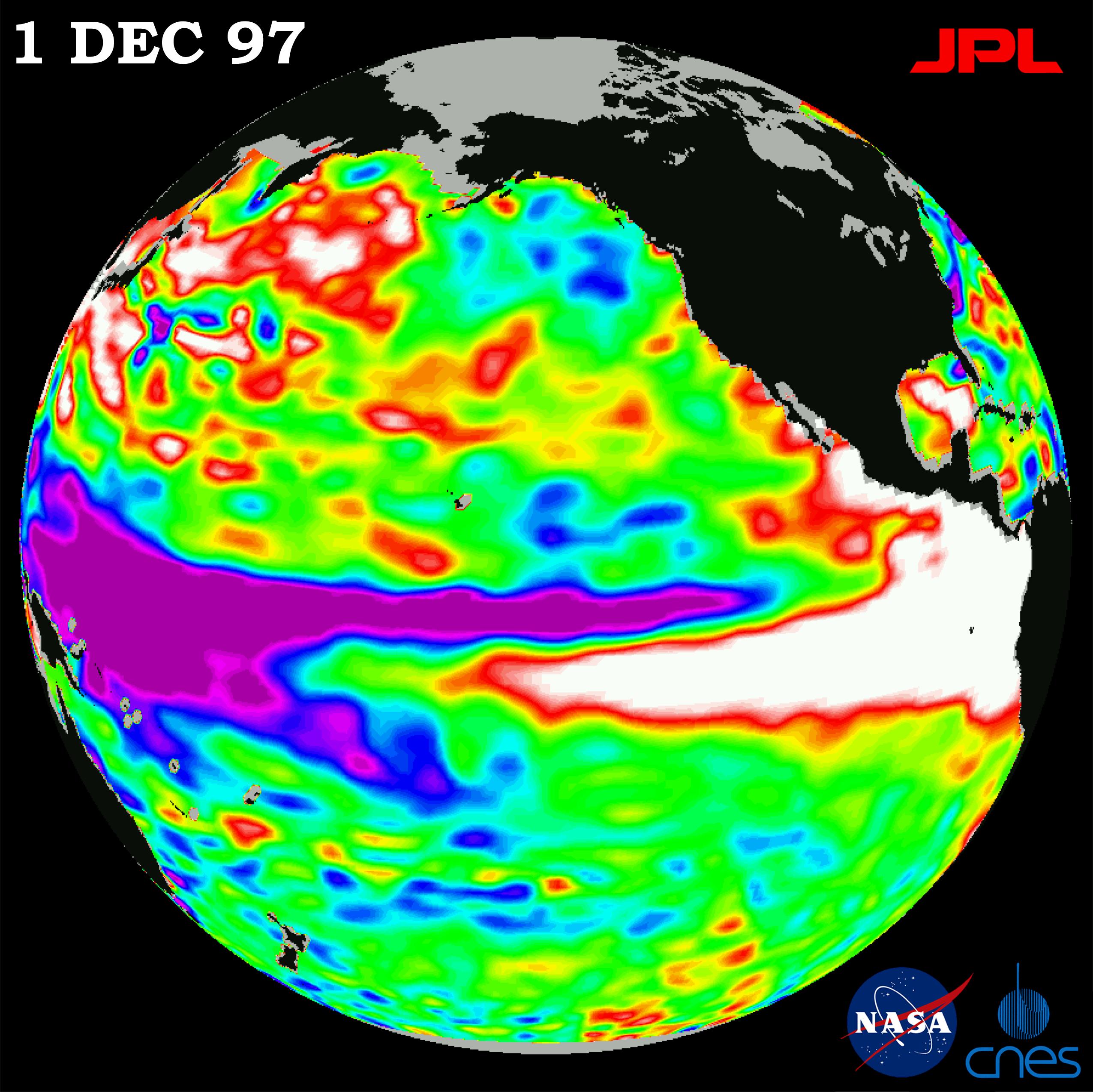

FNOC’s hardware lineage through the 1970s was CDC: a CDC 6500 in the late 1960s, a CDC Cyber 175 through much of the 1970s, then a CDC Cyber 203 delivered in 1980. The 203 was, as noted, the interim STAR-100-derived machine that filled the slot between the original STAR-100 and the eventual Cyber 205. CDC put substantial engineering effort into getting the UCLA general-circulation model to run on the 203; Tom Rosmond’s later retrospective slide deck described it as “heroic work by CDC to get UCLA model to run fast.” The 203 was replaced by a Cyber 205 in 1982, almost simultaneously with the first operational version of the Navy Operational Global Atmospheric Prediction System (NOGAPS), which Rosmond and Edward Barker dated as operational from August 1982. NOGAPS 1 used a rhomboidal-truncation spectral dynamical core derived from the earlier Naval Research Laboratory research model. By 1988 the operational version was NOGAPS 3 at T47L18 (triangular truncation at total wavenumber 47, eighteen vertical levels), the version that Timothy F. Hogan and Thomas E. Rosmond documented in their 1991 Monthly Weather Review paper that became the canonical NOGAPS reference.

The FNOC scientific establishment of the 1980s spanned two organisations co-located in Monterey: FNOC itself was the operational shop (operations, dissemination, the duty meteorologists, the production schedule) and the Naval Environmental Prediction Research Facility (NEPRF, later NRL Monterey after the 1992 NRL reorganisation) was the research-and-development counterpart on Mark Avenue. The Cyber 205 lived at FNOC; the NOGAPS codebase was owned and developed at NEPRF and NRL. Rosmond was the central scientific figure through the entire arc, leading NOGAPS development from the late 1970s into the 2000s. Hogan was the second co-developer of the operational dynamical core through the late 1980s and 1990s. Barker appears as a senior NRL Monterey scientist in the AMIP-era NOGAPS work and in the later Navy Data Assimilation System (NAVDAS) development. FNOC’s commanding officers were Navy line officers on standard three-year tours, typically captains; the technical continuity was maintained by the civilian science staff rather than by the rotating military commanders.

FNOC moved off the Cyber 205 to a Cray Y-MP in 1990, contemporaneously with the identical transition at NMC. Both centres exited the CDC line in the same year, a small but pointed data point about the end of the architecture in operational United States NWP.

FSU and the loneliest reliability database in supercomputing

Florida State University, which acquired a Cyber 205 in March 1985, is, for the purposes of any history of the architecture, the single most valuable site, because FSU documented its own machines in painstaking statistical detail through the entire 205-ETA-Cray transition. The documentation – preserved on the FSU Research Computing Center website at docs.rcc.fsu.edu/history and on Dave Duke’s mirror at ed-thelen.org/comp-hist/super-users-view.html – is the canonical user-side narrative of the late-1980s supercomputer transition.

The institutional context: in 1984 the United States Department of Energy funded the Supercomputer Computations Research Institute (SCRI) at FSU under a collaborative agreement. The motivation was national. The early-1980s United States scientific community was alarmed at Japanese and European progress in supercomputing while the National Science Foundation and DOE had no national-scale academic supercomputer access programme. The NSF supercomputer centres programme – NCSA at Urbana-Champaign, SDSC at La Jolla, PSC at Pittsburgh, JVNC at Princeton, the Cornell Theory Center – would not start until 1985-1986. SCRI at FSU was a parallel DOE initiative with the explicit goal of giving DOE-funded researchers across the country supercomputer access on a national basis.

SCRI took delivery of a CDC Cyber 205 in March 1985. The configuration was a two-pipe machine with 32 megabytes of central memory (4 megawords), 7.2 gigabytes of online disk, a Cyber 835 file-server front end, and a LINPACK 100x100 score of approximately 17 megaflops – a small fraction of the 200 MFLOPS theoretical peak, reflecting the short-vector LINPACK kernel that the architecture’s long-vector strength could not exploit. The machine was in production by April 1985, running code from local FSU researchers (most prominently Krishnamurti’s group, on which more in a moment) and from DOE-funded researchers across the United States.

The reliability record, transcribed from the FSU documentation, is the centrepiece of any honest assessment of the architecture. From March 1985 to October 1989, the FSU Cyber 205 logged approximately 38000 usable hours over 4.5 years, giving an overall wall-clock availability of approximately 95 percent. When it was up, CPU utilisation was approximately 96 percent. The mean time between failure (MTBF) before the installation of an Uninterruptible Power Source in December 1986 was 34.7 hours. After the UPS installation, the MTBF rose to 127.2 hours. By any standard of mid-1980s supercomputing, this was a robust and well-managed machine.
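The availability figure is simple arithmetic on the usable-hours number. A minimal sketch follows; the 365.25-day year and the exact calendar endpoints are assumptions, since the text gives months rather than days, and the two readings land a point or two either side of the quoted 95 percent.

```python
from datetime import date

USABLE_HOURS = 38_000  # approximate usable hours quoted in the text


def availability(usable_hours: float, wall_clock_hours: float) -> float:
    """Fraction of wall-clock time during which the machine was usable."""
    return usable_hours / wall_clock_hours


# Reading 1: the "4.5 years" in the text, with an assumed 365.25-day year.
nominal_hours = 4.5 * 365.25 * 24

# Reading 2: assumed calendar endpoints (the text gives months, not days).
calendar_hours = (date(1989, 10, 31) - date(1985, 3, 1)).days * 24

for label, hours in (("4.5-year window", nominal_hours),
                     ("calendar window", calendar_hours)):
    pct = availability(USABLE_HOURS, hours)
    print(f"{label}: {hours:,.0f} h, availability {pct:.1%}")
```

The spread between the two readings shows how sensitive a headline availability number is to where the measurement window is taken to start and stop.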

Its successor at FSU, the ETA-10E, would not look anything like as good in the same measurement framework.

Purdue and the dollar

The second Cyber 205 installed in the United States arrived at Purdue University in West Lafayette, Indiana, in January 1983. The Purdue computing-history page at pucc.me/timeline is unusually detailed for an American university computing record and is the source for most of what follows. The configuration: a single vector processor plus a scalar processor, with a memory described in the source as “10^6 64-bit words” (one megaword, eight megabytes, equivalent to the Bracknell 205’s initial configuration), and three gigabytes of disk. A new underground extension to the MATH building was constructed to house it.

In 1984 the National Science Foundation awarded Purdue 5.5 million dollars to provide Cyber 205 access to the United States national research community. This made Purdue one of the first NSF-funded national supercomputing providers, alongside the University of Minnesota and Boeing Computing Service – effectively a prototype for the formal NSF supercomputer centres programme that began in 1985.

In March 1988 Purdue acquired a second Cyber 205 from FSU SCRI for one United States dollar. It was shipped from Tallahassee, installed in West Lafayette, and running by late autumn 1988. This is the FSU Cyber 205 that had been displaced by the ETA-10. The dollar price – a peppercorn fee that satisfied the legal requirement for consideration in the transfer of title from FSU to Purdue – is the closing data point of the Cyber 205 secondary market. By 1988 a four-year-old two-pipe Cyber 205 with four megawords of memory and four and a half years of production atmospheric and physics workload behind it could be transferred between research universities at a price set by the cost of paperwork rather than by any negotiated valuation. The architecture had become commercially worthless within seven years of its first delivery.

The original 1983 Purdue Cyber 205 – the “LCD” machine in the local nomenclature – was finally powered down at 9:45 am on Friday 1 July 1994. The 1988 ex-FSU machine was retired earlier. Purdue’s use of both machines was overwhelmingly engineering and physics rather than meteorology; the PUCC timeline does not record any atmospheric or oceanographic modelling on either 205. Purdue meteorology in the 1980s was not at the spectral-global-model scale of FSU. The Cyber 205s at Purdue were NSF national-access machines for chemistry, computational fluid dynamics, particle physics, computational structural mechanics, and engineering design.

NCAR’s negative case, and AFGWC’s

Two other large United States atmospheric-computing centres did not buy a Cyber 205, and the reasons matter.

The National Center for Atmospheric Research at Boulder, Colorado, stayed exclusively Cray throughout the 1980s. NCAR’s machine list – preserved on the Computational and Information Systems Laboratory’s “NCAR supercomputing history” page – runs: Cray-1A serial number 3 commissioned 11 July 1977 and decommissioned 1 February 1989 (the first Cray-1 sold to a science customer); Cray-1A serial number 14 commissioned 2 May 1983 and decommissioned 1 October 1986; Cray X-MP/48 commissioned 1 October 1986 and decommissioned 30 September 1990; Cray Y-MP/8 from around 1988. NCAR never bought a Cyber 205, never bought an ETA-10, and never seriously evaluated either. The institutional reasons, in roughly descending importance, were: historical priority (NCAR was Cray’s first serious customer in 1977, with deep software and user-community investment in the architecture before the 205 was even available); architecture preference (NCAR’s modelling community had a strong preference for register-to-register vector with short-loop friendliness, which fit climate-model inner loops better than the memory-to-memory long-vector design of the 205); software ecosystem (by 1981 CFT was mature and CTSS was well understood, while the Cyber 205 software stack was less developed); and a single-vendor risk that NCAR’s annual reports occasionally flagged but never acted on.

The Air Force Global Weather Central at Offutt Air Force Base, Nebraska – the United States Air Force counterpart to FNOC, serving the worldwide Air Force with particular emphasis on the Strategic Air Command nuclear-force support mission – has sometimes been described in secondary sources as a Cyber 205 site. It was not. AFGWC’s NWP platform from the mid-1980s onward was a Cray X-MP, on which the Advanced Weather Analysis and Prediction System (AWAPS) ran from 1986. AWAPS comprised a Global Spectral Model (GSM) at approximately 493 kilometre resolution and 14 layers, the High Resolution Analysis System (HIRAS) data-assimilation component, a Relocatable Window Model (RWM) for movable regional forecasts conceptually similar to NMC’s MFM, the Real-Time Nephanalysis (RTNEPH) cloud analysis (AFGWC’s signature product, since cloud forecasts were Air Force operational gold), and the older 5-LAYER cloud forecast model. The clearest direct evidence is the 1986 DTIC document AFGWC’s Advanced Weather Analysis and Prediction System (AWAPS) (DTIC ADA172801), which introduces AWAPS in 1986 with a Cray X-MP as the computational engine. AFGWC never ran a Cyber 205 and never ran an ETA-10. The Air Force operational NWP shop was a Cray shop throughout the 1980s. This corrects a recurring misattribution in the secondary literature, which conflates AFGWC at Offutt with the Air Force Geophysics Laboratory (AFGL) at Hanscom AFB, Massachusetts – AFGL had its own spectral model adapted to the Cray-1 in 1984, but AFGL was a research lab and AFGWC was the operational shop. The two acronyms are easily confused.

A general summary of the United States atmospheric-computing customer pattern circa 1985: two operational weather centres on the Cyber 205 (NMC and FNOC), one operational weather centre on the Cray X-MP (AFGWC), one civilian research centre on the Cray X-MP (NCAR), one academic site as the eventual ETA-10 launch customer (FSU), one academic site as the second United States Cyber 205 installation but used for engineering and physics rather than weather (Purdue). NCAR and AFGWC are the two centres that could have gone CDC and chose not to.

There is one important negative item to flag explicitly here, because the prompt that started this series had it the other way round: ECMWF never operated a Cyber 205. The European Centre for Medium-Range Weather Forecasts at Shinfield Park, about ten miles from Bracknell, had Cyber 175 (from 1978), Cyber 835 (from January 1982), and Cyber 170-855 (from December 1983) as front-end machines – general-purpose computers for job submission, archiving, and product dissemination – but the supercomputer that ran the operational forecast model was a Cray throughout the 1980s. ECMWF’s chronology runs: Cray-1A serial 9 installed 24 October 1978, operational 1 August 1979 (8 MB, 160 MFLOPS peak, 50 MFLOPS sustained, 5 hours for a 10-day forecast); Cray X-MP/22 installed 13 March 1984 (dual-processor); Cray X-MP/48 installed 30 December 1985 (four-processor); Cray Y-MP/8 around 1990; Cray C90/12 and then C90/16 in 1991-1994; Cray T3D in 1994; Fujitsu VPP series from 1996. The CDC machines at Shinfield Park existed; they were never supercomputers. The Cray-CDC architectural war was decided at ECMWF in 1978, three years before the Cyber 205 was a product, when ECMWF chose the Cray-1A serial 9 for its operational forecast engine. The Bracknell decision in 1981 went the other way; the consequence, as the next two sections will track, would shape the international NWP-computing landscape through the rest of the decade.

5. The Cray competitor in the field

The machine that killed the Cyber 205 commercially was not the Cray-1 – it was the Cray X-MP. The X-MP was announced in 1982, with principal designer Steve Chen, and shipped in volume from 1983 onward. Its specifications, against which every Cyber 205 of 1983-1985 vintage had to defend itself in procurement reviews, were: a 9.5 nanosecond clock (105 MHz, a little over twice the Cyber 205’s 50 MHz); 1, 2, or 4 CPUs sharing memory (the first shared-memory parallel vector supercomputer in production, against the Cyber 205’s single CPU); 200 to 235 MFLOPS per CPU peak at 64-bit precision, giving a four-CPU X-MP/48 an aggregate peak of approximately 800 MFLOPS at 64-bit – equal to the four-pipe Cyber 205’s 800 MFLOPS at 32-bit, and four times the two-pipe 205’s 200 MFLOPS at 64-bit. Each X-MP CPU had two read ports and one write port to memory (against the Cray-1’s combined single port, tripling the per-CPU memory bandwidth). Vector chaining was improved over the Cray-1, and the 1984 X-MP models added gather-scatter instructions for sparse-data operations. Memory ran from 2 to 8 megawords (16 to 64 megabytes), later expanded. The operating system was COS through 1985 and UNICOS (a Cray-specific UNIX variant) from 1986. The X-MP was, with full justification, marketed as “the world’s fastest computer from 1983 to 1985.”
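The clock and peak-rate comparisons in this paragraph reduce to a few divisions. A minimal sketch using only figures quoted in this post (the 20 ns Cyber 205 clock period is the one corresponding to its 50 MHz clock; variable names are illustrative):

```python
def clock_mhz(period_ns: float) -> float:
    """Convert a clock period in nanoseconds to a frequency in MHz."""
    return 1_000.0 / period_ns


xmp_mhz = clock_mhz(9.5)     # X-MP clock period quoted in the text
cyber_mhz = clock_mhz(20.0)  # Cyber 205 period matching its 50 MHz clock

# Aggregate 64-bit peak for a four-CPU X-MP, from the per-CPU range quoted.
xmp48_peak_low = 4 * 200     # MFLOPS
xmp48_peak_high = 4 * 235    # MFLOPS

cyber_two_pipe_64bit = 200   # MFLOPS, two-pipe Cyber 205 at 64-bit

print(f"X-MP clock {xmp_mhz:.1f} MHz vs Cyber 205 {cyber_mhz:.1f} MHz")
print(f"clock ratio: {xmp_mhz / cyber_mhz:.2f}x")
print(f"X-MP/48 peak: {xmp48_peak_low}-{xmp48_peak_high} MFLOPS")
print(f"vs two-pipe 205: {xmp48_peak_low / cyber_two_pipe_64bit:.1f}x")
```

Peak ratios of this kind are the paper comparison only; they say nothing about how either machine behaved on short vectors or scalar code.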

The X-MP’s specific advantage over the Cyber 205 was the combination of three things. First, the scalar performance was excellent. Cray Research had inherited from the Cray-1 the engineering tradition that the scalar unit was a first-class citizen rather than an afterthought; the X-MP’s scalar was, like the Cray-1’s, simultaneously among the fastest scalar machines in the world. Any Fortran program with a non-trivial scalar fraction ran well on the X-MP and ran badly on the Cyber 205. Second, the short-vector performance was excellent. The X-MP inherited the Cray-1’s 64-element vector registers and the chaining architecture; its N1/2 stayed in the 10-to-15-element range that had given the Cray-1 its real-application dominance over the STAR-100 ten years earlier. Third, the compiler. CFT (Cray FORTRAN Translator) was the first commercial Fortran compiler that vectorised standard ANSI 1966 source code without programmer modification – the user wrote a do-loop in straight Fortran, and CFT decided whether to vectorise it. FTN200 on the Cyber 205 required programmer hints in the form of Q8 calls or explicit descriptor syntax to vectorise anything but the most trivial loops, and even then it forced all temporaries to memory and lost most of the compiler’s chaining opportunities.

The fourth and fifth factors, on which the competition was decided in some procurement reviews, were softer. The fourth was customer service. Cray Research in the early 1980s was a small, hands-on engineering company headquartered in Chippewa Falls, Wisconsin. Customers got direct access to the engineers; Seymour Cray himself was known for replying to bug reports. CDC, by 1983, was a vast Minnesota corporation with the institutional unresponsiveness of a much larger firm; bug reports went through a customer-support layer that distanced the customer from the engineering organisation. The fifth was personality. Cray was, by then, a public figure in the engineering press – the genius engineer in jeans, the man who reportedly built tunnels under his Wisconsin house for relaxation, the architect who refused to use computers in his own design work and worked on paper with a pencil. William Norris and Neil Lincoln were respected technologists but did not generate the same mythology. In a small market where prestige influenced procurement decisions, especially in the academic and government segment, Cray’s persona was a real commercial asset.

The result was that across the 1983-1985 window, when most United States atmospheric-computing centres were making their major supercomputer procurements, the Cray X-MP won most of the contested decisions. NCAR went Cray X-MP/48 in October 1986. AFGWC went Cray X-MP for AWAPS in 1986. ECMWF had already gone Cray X-MP/22 in March 1984 and X-MP/48 in December 1985. The Cyber 205 won at NMC and FNOC, where the procurement decisions had been settled earlier (NMC’s in 1981-1982 under William Bonner; FNOC’s by extension of the existing Cyber 203 contract in 1982). The 205 won at FSU’s SCRI in March 1985, after which FSU would also become the ETA-10 launch customer. Beyond that, the 205 ran out of victories. A four-pipe 205 sold at GFDL but the principal GFDL machine was a Cray X-MP. The Cyber 205 sold at a few German and Dutch academic sites (Ruhr-Universität Bochum, SARA Amsterdam, Deutscher Wetterdienst), a Canadian university (University of Calgary), and a small list of others. The total customer base across the production life of the architecture was approximately 35 units. Cray Research sold approximately 80 Cray-1s alone and would sell hundreds of X-MPs.

The Hockney-Jesshope framework captures the verdict cleanly. In Parallel Computers 2 (Hilger, 1988), Hockney and Jesshope plot every contemporary supercomputer in the (R-infinity, N1/2) plane: peak asymptotic rate on the x-axis, half-peak vector length on the y-axis. The Cray-1 sits at high R-infinity and low N1/2. The Cyber 205 sits at high R-infinity and high N1/2. Real applications, the framework argues, contain a distribution of vector lengths; a machine with low N1/2 handles the short-vector tail of that distribution efficiently while a machine with high N1/2 pays its setup cost on every short loop. The integrated performance over the realistic distribution of vector lengths therefore favours the low-N1/2 machine. The framework is more abstract than the operational reality but the operational reality matches its prediction across the contested 1983-1985 procurements with embarrassing fidelity.
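The argument can be made concrete with Hockney's two-parameter model, in which the rate achieved on a vector of length n is r_inf * n / (n + n_half). The sketch below is illustrative only: it gives both machines the same 200 MFLOPS asymptotic rate to isolate the effect of N1/2, takes 15 as the low value (the upper end of the 10-to-15 range quoted above for the Cray line), and uses 100 as a hypothetical stand-in for a "high N1/2" machine rather than a measured Cyber 205 figure.

```python
def hockney_rate(n: int, r_inf: float, n_half: float) -> float:
    """Hockney's model: achieved rate (same units as r_inf) at vector length n."""
    return r_inf * n / (n + n_half)


R_INF = 200.0        # MFLOPS; identical for both machines by assumption
LOW_N_HALF = 15.0    # upper end of the 10-to-15 range quoted in the text
HIGH_N_HALF = 100.0  # hypothetical value standing in for "high N1/2"

for n in (10, 64, 1000, 65535):
    low = hockney_rate(n, R_INF, LOW_N_HALF)
    high = hockney_rate(n, R_INF, HIGH_N_HALF)
    print(f"n={n:>6}: low-N1/2 {low:6.1f} MFLOPS, high-N1/2 {high:6.1f} MFLOPS")
```

By construction each machine reaches exactly half its asymptotic rate at n equal to its own N1/2, so the two curves converge only for vectors much longer than the larger of the two values.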

6. The 1983 spinoff and the 1986 machine

CDC’s response to the commercial loss to Cray Research over the 1982-1983 period was to spin off a separate subsidiary, ETA Systems, to build the architectural successor to the Cyber 205. The announcement came on 18 April 1983; the new company was legally constituted in August or September 1983. Headquarters were in Bloomington, Minnesota, later moving to St Paul. Lloyd M. Thorndyke, a long-time CDC executive, left CDC in September 1983 to become president and chief executive of ETA Systems; he “took the cream of the company’s supercomputer scientists with him,” in the standard phrase, with Neil Lincoln himself as the new company’s chief architect. William Norris’s initial funding commitment to ETA was reported as approximately 40 million dollars; the actual investment, by the time CDC shut the subsidiary down in April 1989, would be of the order of 750 million dollars when all writeoffs were counted. The strategic rationale Norris gave for the spinoff was that CDC’s internal structure had become “increasingly ossified” and that ETA needed to operate outside the parent’s institutional weight to keep up with Cray’s pace of redesign. The name “ETA” was deliberately non-acronym; one account ties it to the seventh letter of the Greek alphabet on a linotype keyboard, the frequency-rank-seventh letter of English.

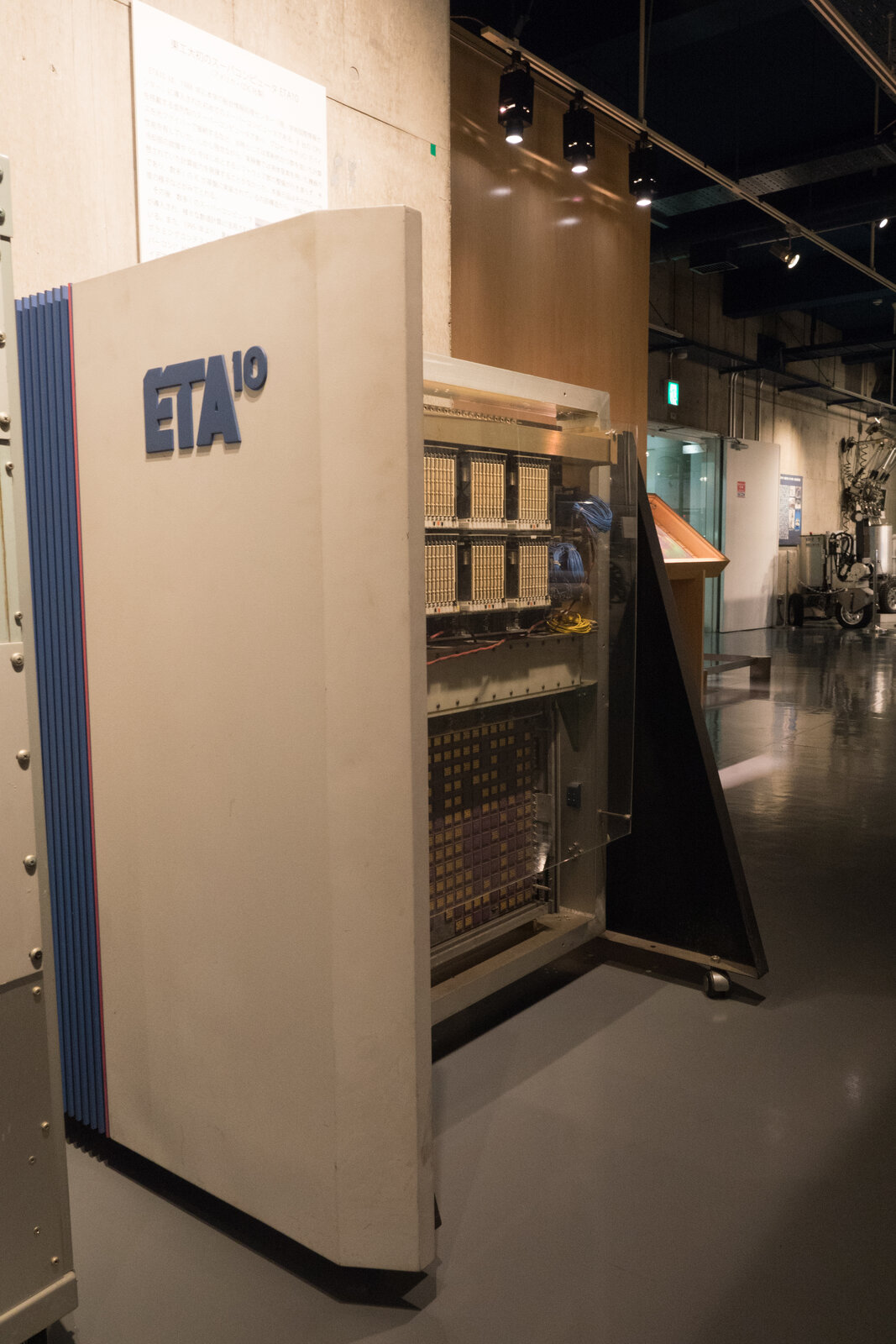

The machine ETA Systems built, the ETA-10, was, in a phrase that captures its architecture more precisely than any longer description, eight Cyber 205s sharing memory. Each CPU was a two-pipe memory-to-memory vector processor with the same instruction set as the Cyber 205, with the same FTN200 compiler (literally the same compiler, retaining the Q8 vendor-specific subroutine call interface) targeting it. The CPUs communicated through a shared-memory bank of up to 256 megawords of DRAM and a separate communication buffer for synchronisation. Each CPU had 4 megawords of local SRAM. The logic was CMOS – the first all-CMOS supercomputer ever produced – using 250 CMOS gate arrays per CPU on a 44-layer printed circuit board, with each array containing 20000 gates at a 1.25 micrometre feature size from the Honeywell Very High Speed Integrated Circuits (VHSIC) programme, well ahead of the 3 to 5 micrometre commercial CMOS of the same period.

The four ETA-10 models were differentiated principally by cooling and clock speed. The ETA-10E and ETA-10G were liquid-nitrogen cooled at approximately -196 degrees Celsius, with the entire CPU board mounted in a sealed cryostat through which a closed-loop LN2 system circulated. The 10E had a 10.5 nanosecond clock and an 8-CPU peak of 8.32 GFLOPS; the 10G had a 7 nanosecond clock and an 8-CPU theoretical peak of 10.3 GFLOPS. The ETA-10P (Piper) and ETA-10Q were air-cooled, with longer clock cycles (24 and 19 nanoseconds respectively) and price tags approaching the one-million-dollar mark intended to compete in the entry-level supercomputer market. The cryogenic cooling on the E and G models was, in theory, the source of a fourfold speedup of the CMOS over room-temperature operation; in practice it delivered approximately twofold. Liquid-nitrogen consumption at FSU, where the operational ETA-10G ran a two-cryostat configuration, was approximately 7000 gallons per week. The cryostat had no commercial precedent in supercomputing; ETA Systems had to design the closed-loop LN2 circulation, the cabinet seals, the safety interlocks, and the supply infrastructure from scratch.

The Cyber 205 single-CPU peak was 200 MFLOPS at 64-bit. The ETA-10E single-CPU peak was approximately 1040 MFLOPS at 64-bit, roughly a fivefold improvement; the 8-CPU configuration’s 8.3 GFLOPS theoretical peak put it nominally ahead of every other supercomputer in 1987. The LINPACK 100x100 score on a single-CPU ETA-10E was 52 MFLOPS, which when published in 1988 led a benchmark table that placed it ahead of the NEC SX-2 (43 MFLOPS) and the Cray X-MP/4 (39 MFLOPS) on that specific benchmark. Sustained 8-CPU multi-tasked performance, however, never approached the 8.3 GFLOPS theoretical peak, because of software problems that would dominate the rest of the ETA Systems story.

The first ETA-10E shipped to Florida State University SCRI on 5 January 1987 – serial number 1, formally announced at SCRI in April 1987 as the launch customer. The prototype clocked at 12.5 nanoseconds; by autumn 1987 the production E-model ran at 10.5 nanoseconds. Within two weeks of installation the prototype was running a FORTRAN job that had been ported from the SCRI Cyber 205. By the standards of the architectural transition, that initial port – enabled by the shared instruction set with the Cyber 205 – was a success.

It was the operating system that destroyed the machine.

7. The software disaster

The ETA-10 hardware was reasonably solid. The software ruined it.

The first-shipment ETA-10E arrived at customers in 1986 and 1987 without an operating system. Programs had to be hand-loaded from attached Apollo workstations – a process that turned the supercomputer into an expensive batch-processing peripheral of a 1985-vintage minicomputer. ETA Systems had committed, at founding, to writing its own new operating system in Cybil, a Pascal-like language that CDC had used for systems programming since the 1970s. The new operating system was called EOS – the ETA Operating System. Its development was the strategic centre of the ETA software effort.

EOS reached “early user access” maturity, in the W15 release, only in January 1988. Subsequent releases EOS 1.1, EOS 1.1A, EOS 1.1B, and EOS 1.1C continued to crash. The Florida State University statistical record, from the same documentation that gave the Cyber 205 its 127.2-hour post-UPS MTBF, gave the ETA-10 a mean time between failure of 25.4 hours. The cause was overwhelmingly software: kernel panics, file-system corruption, scheduler deadlocks, and the occasional catastrophic crash of the cryogenic cooling subsystem that took the hardware down with it. “Software failure once every 30 hours” was the documented FSU experience.

The other software defect, equally consequential, was that the FORTRAN compiler on the ETA-10 was literally the same compiler as the Cyber 205. FTN200 ran on the ETA-10 with the same Q8 vendor-specific subroutine call interface, the same lack of register-vector temporaries, the same forced-to-memory subroutine arguments, the same lack of modern optimisations, the same lack of portability features that the rest of the industry was developing through the 1980s. The ETA-10 inherited the Cyber 205’s compiler defects, ten years on, against competitors whose compilers (CFT77 on Cray, IBM Fortran 8x on IBM, Sun’s f77 on workstations) had moved to modern automatic vectorisation, software pipelining, register allocation, and inter-procedural optimisation.

The third software disaster was the multi-user restriction. Under EOS the ETA-10 was constrained to two users per CPU; on an eight-CPU machine that meant a maximum of sixteen concurrent users. Compared to the Cray X-MP under UNICOS, which routinely supported dozens of interactive users with a mature time-sharing scheduler, this was an unsupportable limitation in a research-computing environment. The ETA-10 under EOS was effectively a remote batch machine when the customers wanted an interactive supercomputer.

The escape route was UNIX. HCR Corporation of Canada (sometimes described as Lachman Associates in older sources) had ported AT&T’s UNIX System V to the ETA-10 by autumn 1988. The port was made available to customers in October 1988. FSU and most other ETA-10 customers switched immediately, even though System V on the ETA-10 ran the user programs themselves more slowly than EOS could have done on the same hardware. The reason was simple: UNIX worked. Under EOS the ETA-10 was a remote batch machine that crashed every twenty-five hours; under UNIX System V it was an interactive supercomputer that crashed much less often. FSU made the operational switch over a single weekend in October 1988 (“with little difficulty,” the FSU documentation records).

The retrospective lesson, drawn by the FSU team in their later writeup, was uncompromising: “It is ironic that even with such careful attention to almost identically matching the instruction set between the 205 and the ETA-10, the approach to operating system development appeared to be an effort almost from scratch…. if the UNIX effort had been the original operating system then it would have been more timely and widely accepted, perhaps to ensure ETA’s success.” ETA Systems had wasted three years on Cybil and EOS instead of porting the operating system the rest of the industry was already adopting. By the time UNIX System V arrived on the ETA-10 in October 1988, the company had six months left to live.

8. The MTBF moment

The full Florida State University reliability table, from the documentation preserved at docs.rcc.fsu.edu/history and ed-thelen.org/comp-hist/super-users-view.html, is the single most damning statistical record in the history of the architecture. The numbers are reproduced below in their published form, all in hours of mean time between failure:

| System | MTBF (hours) | Period at FSU |
|---|---|---|
| Cyber 205 (without UPS) | 34.7 | March 1985 – December 1986 |
| Cyber 205 (with UPS) | 127.2 | December 1986 – October 1989 |
| ETA-10 | 25.4 | January 1987 – approximately 1990 |
| Cray Y-MP | 2064.2 | April 1990 onwards |

The Cyber 205 with UPS was roughly five times more reliable than the ETA-10 that was meant to be its successor. The Cray Y-MP that replaced the ETA-10 was approximately eighty times more reliable than the ETA-10 it displaced. This single ratio captured the institutional difference between Cray Research’s hardware-and-software discipline and CDC/ETA Systems’ hardware-focused, software-afterthought approach. The Cyber 205 and ETA-10 were engineered as silicon-and-cooling problems; the operating system was an afterthought delegated to a separate effort that started late, picked the wrong language, picked the wrong target, and never recovered. Cray Research, by contrast, engineered the OS, the compiler, the libraries, and the customer-service infrastructure as first-class components of the product. The result is the difference between 25.4 hours and 2064.2 hours: roughly the difference between a machine you can use for production scientific computing and a machine that cannot survive the working week.
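The ratios follow directly from the four MTBF figures in the table. A short sketch of the arithmetic; the failures-per-week line assumes failures spread evenly over a 168-hour week, which is a simplification for illustration rather than anything the FSU record states.

```python
mtbf_hours = {
    "Cyber 205 (without UPS)": 34.7,
    "Cyber 205 (with UPS)": 127.2,
    "ETA-10": 25.4,
    "Cray Y-MP": 2064.2,
}

HOURS_PER_WEEK = 168
baseline = mtbf_hours["ETA-10"]

# Reliability relative to the ETA-10, and implied failures per week.
ratios = {name: hours / baseline for name, hours in mtbf_hours.items()}
failures_per_week = {name: HOURS_PER_WEEK / hours
                     for name, hours in mtbf_hours.items()}

for name, hours in mtbf_hours.items():
    print(f"{name:<24} {hours:>7.1f} h  "
          f"{ratios[name]:5.1f}x ETA-10  "
          f"{failures_per_week[name]:4.2f} failures/week")
```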

The 80x reliability gap is what ended the architecture. It was not the peak rate; the ETA-10’s peak rate at single-CPU was nominally ahead of the Cray Y-MP’s at the same date. It was not the silicon technology; the all-CMOS ETA-10 was three years ahead of Cray’s bipolar ECL. It was not even the price-performance ratio; ETA Systems claimed at the shutdown, with some justification, that it had “the best price-performance ratio of any supercomputer on the market,” especially with the air-cooled Piper and Q models at approximately one million dollars. It was, simply and exactly, that customers buy realised performance, not paper performance, and a machine that crashes every twenty-five hours cannot realise its paper peak no matter how impressive that peak is.

The institutional lesson is worth stating clearly because it has been re-learned at every subsequent supercomputer transition. The compiler matters more than the chip. The operating system matters more than the cooling. The customer-service organisation matters more than the press release. The technologies that fail at the customer site are usually the ones where the software stack was budget-cut against the hardware stack. The Cyber 205 and the ETA-10 were both engineered as hardware-led products with software brought along behind; the Cray-1, X-MP, Y-MP, and C90 were engineered with the compiler-and-OS team alongside the hardware team and with the customer-service organisation alongside both. The result was a four-decade survival for Cray Research as a corporate entity and a six-year survival for ETA Systems.

9. The shutdown

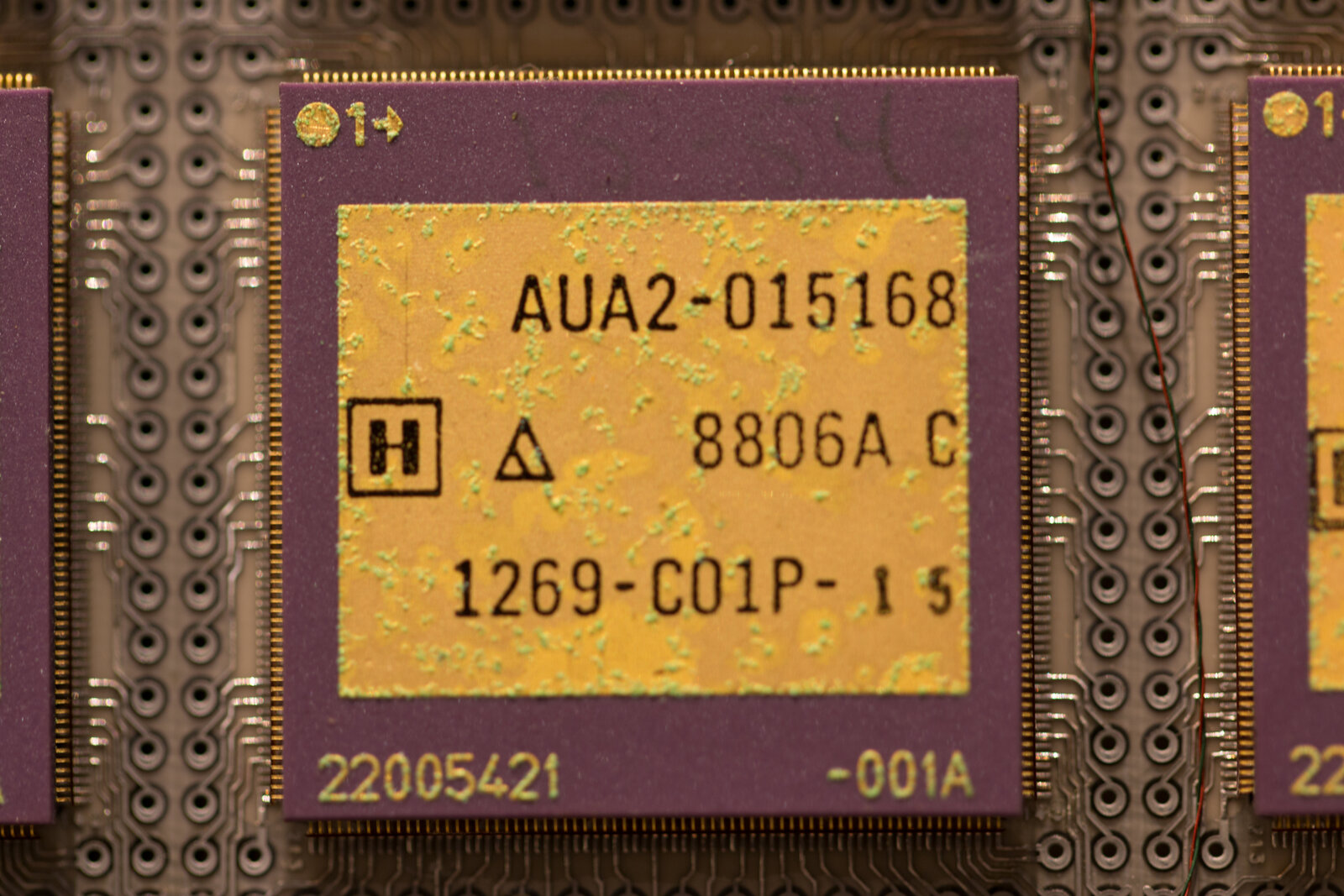

On 17 April 1989 – a Monday afternoon in Bloomington, Minnesota – Control Data Corporation abruptly closed ETA Systems and terminated all of its employees. A four-processor ETA-10G was installed at Florida State University on 21 April, four days after the parent company had killed the line. The writeoff against the ETA Systems failure was approximately 750 million dollars, recorded against CDC’s 1989 fiscal results. At the time of shutdown, ETA had shipped 7 liquid-cooled (ETA-10E and G) systems and 27 air-cooled (ETA-10P and Q) systems, for a total of 34 customer systems across the company’s six-year operational life. The customer list at shutdown included Florida State University, NASA Johnson Space Center, the John von Neumann Center at Princeton (which had ordered two systems, both of which were later destroyed to prevent unauthorised access after the JVNC closure), Purdue University, the Minnesota Supercomputer Center, Tokyo Institute of Technology (ordered in autumn 1987, delivered 1988), Meiji University in Tokyo, Academia Sinica in Taiwan, Deutscher Wetterdienst, the UK Met Office (about which more in a moment), TNO-FEL in the Netherlands, and Thomas Jefferson High School for Science and Technology in Fairfax, Virginia, which received an air-cooled system as a donation. The remaining inventory at shutdown was donated to high schools via the SuperQuest science competition.

The institutional cascade after 17 April 1989 was rapid. Lloyd Thorndyke had fought to free ETA Systems from CDC’s control during the company’s decline and had been replaced as its head before the shutdown; the company died regardless. With the closure, CDC exited supercomputing entirely. CDC itself dissolved as a major computer company in 1992, splitting into Ceridian (the services arm) and Control Data Systems (the computing remnants); the company that William Norris had founded in 1957 ceased to exist as a coherent commercial entity within three years of the ETA shutdown.

For the operational meteorology customers, the cascade meant a forced migration off the architecture. Florida State University announced on 15 November 1989 an agreement to swap its ETA-10G for a Cray Y-MP/432 (4 CPUs, 1.3 GFLOPS peak, 256 MB memory). The Cray Y-MP was installed in March 1990 and went into production on 9 April 1990; an interim air-cooled ETA-10Q bridged the gap from November 1989 to March 1990. Deutscher Wetterdienst in Offenbach made the same transition to a Cray Y-MP. The National Meteorological Center at Suitland, which had been a Cyber 205 site since 1983 rather than an ETA-10 site, moved to a Cray Y-MP/8 in 1990; the Fleet Numerical Oceanography Center at Monterey also moved to a Cray Y-MP in 1990. By the end of 1991 every operational meteorological centre in the United States that had previously bought a CDC supercomputer was running on a Cray.

The Met Office story has one ambiguous chapter that the public record cannot quite resolve, and it is worth being explicit about. There is a photograph in the Science Photo Library archive, catalogue number C011/4772, captioned “Met Office ETA10 supercomputer, 1988,” taken at Bracknell in October 1988. The photograph is real and well-documented. The Met Office’s own chronological list of operational supercomputers, however, jumps from the Cyber 205 (1982) directly to the Cray Y-MP C90/16 (1991), with no ETA-10 in the operational sequence. The Wikipedia ETA-10 customer list draws from ETA Systems documentation that names FSU, JSC, JVNC, Purdue, Tokyo Tech, Meiji, Academia Sinica, Deutscher Wetterdienst, and Thomas Jefferson High School – with the UK Met Office named as a customer in some lists and not others. The plausible reading is that the Met Office took an ETA-10, probably an air-cooled ETA-10P, for evaluation or short-term trial in 1988 as the natural Cyber 205 architectural successor, but never moved operational workload onto it before ETA Systems collapsed in April 1989. The operational machine at Bracknell remained the Cyber 205 from 1982 to 1990 or 1991, when it was replaced by Cray. A 1988 trade-press headline from Tech Monitor reading “UK MET OFFICE TO GET ETA 10” – behind a paywall in 2026 and not retrievable – is consistent with an order or trial having been announced but with the deal not surviving the April 1989 shutdown.

The wider lesson of the Bracknell ETA-10 ambiguity, however it is eventually resolved, is that the Met Office in 1988 faced the same architectural trap as FSU, DWD, and the other Cyber 205 customers. Having bet on the CDC vector lineage in 1981, the Met Office now had to either pivot to Cray (the same vendor it had rejected seven years earlier) or wait for ETA Systems to deliver an architectural successor. The brief ETA-10 evaluation, if that is what the October 1988 photograph captured, was the last attempt to stay within the CDC architectural family. By April 1989 the family had ceased to exist.

10. Bracknell to C90: the Unified Model and the operational endpoint

The Met Office’s successor to the Cyber 205 was a Cray Y-MP C90/16, installed in 1990-1991 and going operational with the new model on 12 June 1991. That new model, the operational reason for the hardware transition, was the Unified Model: a single dynamical core that handled both short-range numerical weather prediction and climate simulation within the same software framework. The Unified Model’s integration scheme had been developed at Bracknell by Mike Cullen’s group through 1987-1991; the initial operational configuration at the 12 June 1991 launch ran the short-range forecast at 0.833 degrees by 1.25 degrees (approximately 90 kilometres) on a 19-level vertical grid, and the climate configuration at 2.5 degrees by 3.75 degrees (approximately 300 kilometres). The C90/16 sustained approximately 10 GFLOPS on the new model – a step-change up from the Cyber 205’s ~50 to 80 MFLOPS sustained on the older 15-level model, and on those figures an increase of roughly 125 to 200 times in what was, in 1991, the operational throughput of the Met Office numerical forecast.
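The degree-to-kilometre conversions quoted for the two grids can be checked with a few lines of arithmetic. The sketch below assumes a spherical Earth of radius 6371 km and evaluates the east-west spacing at 52°N, a latitude chosen here only as representative of the UK; neither value comes from the Met Office documentation, and the function name is hypothetical.

```python
import math

EARTH_RADIUS_KM = 6371.0  # assumed mean radius of a spherical Earth


def grid_spacing_km(dlat_deg, dlon_deg, latitude_deg):
    """Return (north-south, east-west) grid spacing in km at a given latitude."""
    km_per_degree = 2.0 * math.pi * EARTH_RADIUS_KM / 360.0
    north_south = dlat_deg * km_per_degree
    # Meridians converge towards the poles, so east-west spacing shrinks with cos(latitude).
    east_west = dlon_deg * km_per_degree * math.cos(math.radians(latitude_deg))
    return north_south, east_west


# Grid increments quoted in the text; 52 degrees north is an illustrative latitude.
forecast_ns, forecast_ew = grid_spacing_km(0.833, 1.25, 52.0)
climate_ns, climate_ew = grid_spacing_km(2.5, 3.75, 52.0)

print(f"forecast grid: {forecast_ns:.0f} km N-S, {forecast_ew:.0f} km E-W")
print(f"climate grid:  {climate_ns:.0f} km N-S, {climate_ew:.0f} km E-W")
```

Under these assumptions both forecast-grid spacings land within a few kilometres of 90 km at UK latitudes, which is presumably why the longitude increment is larger than the latitude increment.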

Mike Cullen received the UK Met Office and Royal Air Force L.G. Groves Memorial Award in 1991 for his work on the Unified Model integration scheme. The Unified Model itself would, over the next three decades, undergo two major rewrites (the New Dynamics scheme around 2002 and ENDGame around 2014) and is now in the transition to the LFRic framework that the Met Office and the Cambridge atmospheric-dynamics community are jointly developing in 2026. The lineage runs unbroken from the C90 of 1991 through every subsequent Met Office machine to the present operational system: Unified Model on C90 (1991), Cray T3E (1996-1997), Cray T3D and T3E successors, NEC SX cluster in the early 2000s, IBM Power systems in the mid-2000s, Cray XC30 and XC40 from 2014, the Microsoft Azure cloud system contracted in 2020 and operational from 2024. The Cyber 205 was the platform on which the Met Office made the transition from finite-difference 10-level hemispheric forecasting to global 15-level forecasting and then to convection-permitting mesoscale forecasting; the C90 of 1991 was the platform on which it made the transition from finite-difference to semi-Lagrangian semi-implicit dynamics on the unified climate-and-weather code base.

The closing institutional cross-reference is to ECMWF. The Reading centre’s parallel transition, from Cray X-MP/48 of December 1985 to Cray Y-MP/8 around 1990 to Cray C90 in 1991-1994 to Cray T3D in 1994 to Fujitsu VPP from 1996, ran on a different vendor pipeline but at a similar tempo to the Met Office’s. By 1996 the Cray-CDC architectural war had been comprehensively settled in Cray’s favour at every European NWP centre; the subsequent vendor competition was between Cray (owned by Silicon Graphics from 1996, and reconstituted as Cray Inc. in 2000 when Tera Computer bought the business), Japanese vector vendors (Fujitsu, NEC, Hitachi), and the emerging IBM Power line. The CDC lineage in operational NWP ended on the day in 1991 when the Met Office cut over from the Cyber 205 to the Cray Y-MP C90, following the equivalent transitions at FNOC and NMC in 1990. Within the wider scientific-computing community, the CDC lineage had ended on 17 April 1989 in Bloomington, Minnesota.

11. Five reasons Cray won

The summary verdict on the architectural war, drawn from contemporary comparisons (the SIGNUM Newsletter Cray-1 vs Cyber 205 paper of 1981, the NASA Langley and Lawrence Livermore reports of 1982-1985, the Hockney-Jesshope textbook of 1988, the Thorndyke “Demise of ETA Systems” essay of 1994, and the FSU retrospective on docs.rcc.fsu.edu), comes down to five reasons in roughly descending order of importance.

First, scalar performance. The Cray-1 was not only among the fastest vector machines in the world but also, at 80 MIPS, the fastest scalar machine in the world. Amdahl’s law penalised the Cyber 205 ruthlessly on any code with a non-trivial scalar fraction. CDC tried to fix this with the Cyber 205’s high-performance scalar unit (50 MFLOPS scalar with five independent functional units for add, multiply, logical, single-cycle, and divide-sqrt-convert), but it never closed the gap fully. On a Fortran program that spent thirty percent of its time in scalar code, the Cray-1 ran the scalar fraction at 80 MIPS and the Cyber 205 ran it at 50 MFLOPS-equivalent (substantially slower than 80 MIPS in real terms); the Cyber 205’s higher vector peak was eaten by Amdahl’s law before the comparison even reached the vector regime.
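The Amdahl's-law argument can be made concrete with a small sketch. It is a simplification under stated assumptions: the thirty percent is treated as a share of operations rather than of run time, MIPS and MFLOPS are treated as comparable rate units, both vector units are credited with their full peak, and the Cray-1 vector peak of 160 MFLOPS is an assumed, commonly quoted figure that does not appear in this article. The function name is hypothetical.

```python
def effective_rate(scalar_fraction, scalar_rate, vector_rate):
    """Amdahl-style effective rate for work split between scalar and vector units.

    scalar_fraction: share of operations that cannot be vectorised (0..1)
    scalar_rate, vector_rate: rates in millions of operations per second
    """
    # Time per operation is the weighted sum of the two per-operation times.
    time_per_op = scalar_fraction / scalar_rate + (1.0 - scalar_fraction) / vector_rate
    return 1.0 / time_per_op


# Illustrative inputs only. Scalar rates and the Cyber 205 vector peak are the
# article's figures; the Cray-1 vector peak (160) is an assumption.
CRAY_1 = {"scalar_rate": 80.0, "vector_rate": 160.0}
CYBER_205 = {"scalar_rate": 50.0, "vector_rate": 200.0}

cray = effective_rate(0.30, **CRAY_1)
cyber = effective_rate(0.30, **CYBER_205)
print(f"Cray-1:    {cray:.1f} effective Mops/s")
print(f"Cyber 205: {cyber:.1f} effective Mops/s")
```

With these inputs the machine with the lower vector peak comes out ahead, because the scalar term dominates the total time per operation. The real gap would depend on how far each machine fell short of its vector peak on a given code.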

Second, short-vector performance. Real Fortran inner loops in operational meteorology, on the workloads of the 1980s, were not thousands of elements long. They were tens or low hundreds. The N1/2 of approximately 15 elements on Cray against approximately 60 to 80 on the Cyber 205 decided the race on the actual codes that customers needed to run. Spectral transforms decomposed into many short vector operations along the longitudinal direction at each latitude; grid-point physics decomposed into vector operations over rows or columns of the global grid. Both workloads, in the inner-loop sizes that the Met Office, NMC, and FSU codes actually generated, favoured the Cray.
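The role of N1/2 can be sketched with Hockney's two-parameter model, in which a vector operation of length n runs at r_inf * n / (n + n_half), so that n_half is the length at which half the asymptotic rate is reached. The asymptotic rates below are assumptions for illustration (200 MFLOPS is the article's Cyber 205 peak; 160 MFLOPS for the Cray-1 is an assumed value), and 70 is simply the midpoint of the 60 to 80 range quoted above.

```python
def hockney_rate(n, r_inf, n_half):
    """Delivered rate on a vector of length n under Hockney's (r_inf, n_half) model."""
    return r_inf * n / (n + n_half)


def crossover_length(r_a, n_half_a, r_b, n_half_b):
    """Vector length at which machine A (higher r_inf) catches up with machine B.

    Derived by setting r_a*n/(n+n_half_a) equal to r_b*n/(n+n_half_b) and solving for n.
    """
    return (r_b * n_half_a - r_a * n_half_b) / (r_a - r_b)


# Illustrative parameters: (asymptotic MFLOPS, n_half). The rates are assumptions.
CYBER_205 = (200.0, 70.0)
CRAY_1 = (160.0, 15.0)

for n in (16, 64, 256, 1024):
    cray = hockney_rate(n, *CRAY_1)
    cyber = hockney_rate(n, *CYBER_205)
    print(f"n={n:5d}  Cray-1 {cray:6.1f}  Cyber 205 {cyber:6.1f}")

breakeven = crossover_length(*CYBER_205, *CRAY_1)
print(f"Cyber 205 overtakes at n = {breakeven:.0f}")
```

Under these assumed parameters the Cyber 205 only pulls ahead for vectors longer than about two hundred elements, which is above the tens-to-low-hundreds range described for operational inner loops.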

Third, compiler maturity. CFT (and its 1980s successor CFT77) was years ahead of FTN200. CFT was the first commercial Fortran compiler to automatically vectorise ANSI 1966 source code without programmer modification. FTN200 forced everything to memory, required Q8 hints for non-trivial vectorisation, and never developed the inter-procedural and register-allocation infrastructure that the rest of the industry was building. The Met Office’s documented experience of writing 1300-line “Q8 call” FFT routines was the symptom of this gap. Atmospheric modellers across the community gradually restructured their codes for CFT, which made them faster on Cray and no easier to optimise for CDC.

Fourth, customer service. Cray Research’s small, hands-on, Chippewa-Falls engineering culture in the early 1980s gave customers direct access to the engineering organisation; CDC’s larger, more layered corporate structure distanced bug reports from the engineering team that could fix them. The institutional difference compounded itself over the life of an installation: a Cyber 205 customer who needed a vector-pipeline bug fixed waited longer than a Cray-1 customer who needed the same fix, and the operational meteorology community noticed.

Fifth, personality and prestige. Seymour Cray was a public figure in a way that William Norris and Neil Lincoln were not. The mythology of the genius engineer in jeans, building tunnels under his Wisconsin house for relaxation, refusing to use computers in his own design work, replying personally to bug reports – whether literally true or partly embroidered – was a real commercial asset in a small market where procurement decisions were made by senior scientists who valued the personal touch of the architect. In academic and government procurement especially, Cray’s persona translated into procurement-committee votes.

The deeper question – why CDC, having lost on memory-to-memory with the STAR-100 and then with the Cyber 205, repeated the architectural bet a third time with the ETA-10 – has a similarly multi-part answer. Sunk-cost commitment to the FTN200 compiler base. Institutional path dependence in the Lincoln-Thorndyke team that had built two memory-to-memory machines and knew the design space intimately; pivoting to register-to-register vector would have meant essentially starting over. Belief that the technology would save the architecture: CMOS at 1.25 micrometres and liquid-nitrogen cooling were genuinely ahead of the industry, and the team believed raw transistor speed could overcome the architectural disadvantage. It could not, because the disadvantage was in the compiler-visible architecture rather than in the gate speed. And, fatally, the strategic mistake on software: investing in EOS instead of UNIX from day one, which delayed the ETA-10’s operational debut by three years and then made the eventual UNIX port a hurried retrofit rather than a planned first step.

The contemporaneous machine that Cray had ready against the ETA-10 was the Cray Y-MP, sold from 1988. Its specifications were modest by ETA-10G theoretical-peak standards but ruthlessly mature in software terms: a 6 nanosecond cycle (167 MHz), 2 to 8 vector CPUs, 333 MFLOPS per CPU peak, 2.667 GFLOPS aggregate at 8 CPUs, 256 to 1024 megabytes of SRAM, ECL VLSI, a new liquid cooling system, UNICOS as the operating system, and the mature CFT77 compiler. The Y-MP held the “world’s most powerful supercomputer” title in 1988-1989 at 2.144 measured gigaflops. The ETA-10G had a higher theoretical peak. The Y-MP had the working operating system. At every site where the choice was made between them in 1989-1991 – FSU, DWD, the Met Office (whatever the precise resolution of the 1988 Bracknell evaluation), FNOC, NMC – the Y-MP won. The 80x MTBF gap was the headline number; the underlying difference was that Cray had engineered the OS as part of the product and ETA Systems had not.
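The Y-MP figures quoted above are mutually consistent, as a short check shows. The sketch assumes each CPU can complete two floating-point results per clock (one add and one multiply), which is the assumption needed to turn a 6 nanosecond cycle into 333 MFLOPS; the function name is hypothetical.

```python
def peak_mflops(cycle_ns, flops_per_cycle, cpus=1):
    """Theoretical peak in MFLOPS from cycle time, results per cycle, and CPU count."""
    clock_mhz = 1000.0 / cycle_ns  # a 1 ns cycle corresponds to 1000 MHz
    return clock_mhz * flops_per_cycle * cpus


CYCLE_NS = 6.0          # from the text
FLOPS_PER_CYCLE = 2     # assumption: one add plus one multiply per clock per CPU
MEASURED_GFLOPS = 2.144 # measured figure quoted in the text

per_cpu = peak_mflops(CYCLE_NS, FLOPS_PER_CYCLE)
aggregate = peak_mflops(CYCLE_NS, FLOPS_PER_CYCLE, cpus=8)
efficiency = MEASURED_GFLOPS * 1000.0 / aggregate

print(f"clock:      {1000.0 / CYCLE_NS:.0f} MHz")
print(f"per CPU:    {per_cpu:.0f} MFLOPS")
print(f"8 CPUs:     {aggregate / 1000.0:.3f} GFLOPS")
print(f"efficiency: {efficiency:.0%} of peak at {MEASURED_GFLOPS} GFLOPS measured")
```

On these numbers the measured 2.144 gigaflops is about four fifths of the eight-CPU theoretical peak, which is the sense in which the Y-MP's specifications were modest but deliverable.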

12. Coda: the architecture and the institutions

The Cyber 205 was a coherent architectural bet that lost. The bet was that memory-to-memory vector pipelines with very long maximum vector length and very high peak rate would defeat register-to-register vector pipelines with shorter vector length and lower peak rate, on the workloads of the 1980s scientific-computing community. The bet was made in 1971-1973 with the STAR-100 design, was revised through the Cyber 203 of the late 1970s, and was committed for a second generation with the Cyber 205 of 1980 and a third generation with the ETA-10 of 1986. The bet was wrong, and the wrongness became visible at customer sites between 1976 (when the Cray-1 demonstrated the register-to-register alternative on real codes) and 1985 (when the Cray X-MP demonstrated the multi-CPU shared-memory alternative). CDC’s response was to double down rather than pivot; the consequence was the 1989 shutdown of ETA Systems and the dissolution of CDC itself as a major computer company by 1992.

The institutional consequences for the operational meteorology community were mixed. NMC at Suitland ran a productive Cyber 205 era from 1983 to 1990, producing the R40L12 spectral model upgrade, the LFM-to-NGM transition, the Movable Fine Mesh hurricane model, and the new Medium-Range Forecast configuration; the dedicated post on Shuman’s NMC covers this in detail. FNOC at Monterey ran NOGAPS 1, 2, and 3 on its Cyber 205 from 1982 to 1990, producing the operational global Navy forecast system that would underpin Navy meteorological products through the 1990s and 2000s. FSU SCRI ran the Krishnamurti group’s single-model FSU Global Spectral Model on the Cyber 205 from 1985 onwards – a forerunner of the superensemble technique that Krishnamurti’s group would publish in 1999 from later Cray Y-MP and T3E hardware, but during the Cyber 205 era at FSU the work was the single-model FSU GSM rather than the multi-model superensemble. The Met Office at Bracknell ran the 15-level global model from 1982, the fine-mesh regional model, and the 15-kilometre mesoscale model from 1985 – the world’s first non-hydrostatic, convection-permitting operational model.