There is an old, revered software engineering proverb: “Think twice, or maybe thrice, then code.”

It’s a beautiful sentiment. It evokes images of pristine whiteboards (probably paper sheets pulled out of a printer), perfectly documented UML diagrams, and software that writes itself over a cup of artisanal tea.

But in the highly competitive, publish-or-perish meat grinder of academia? We don’t do that here.

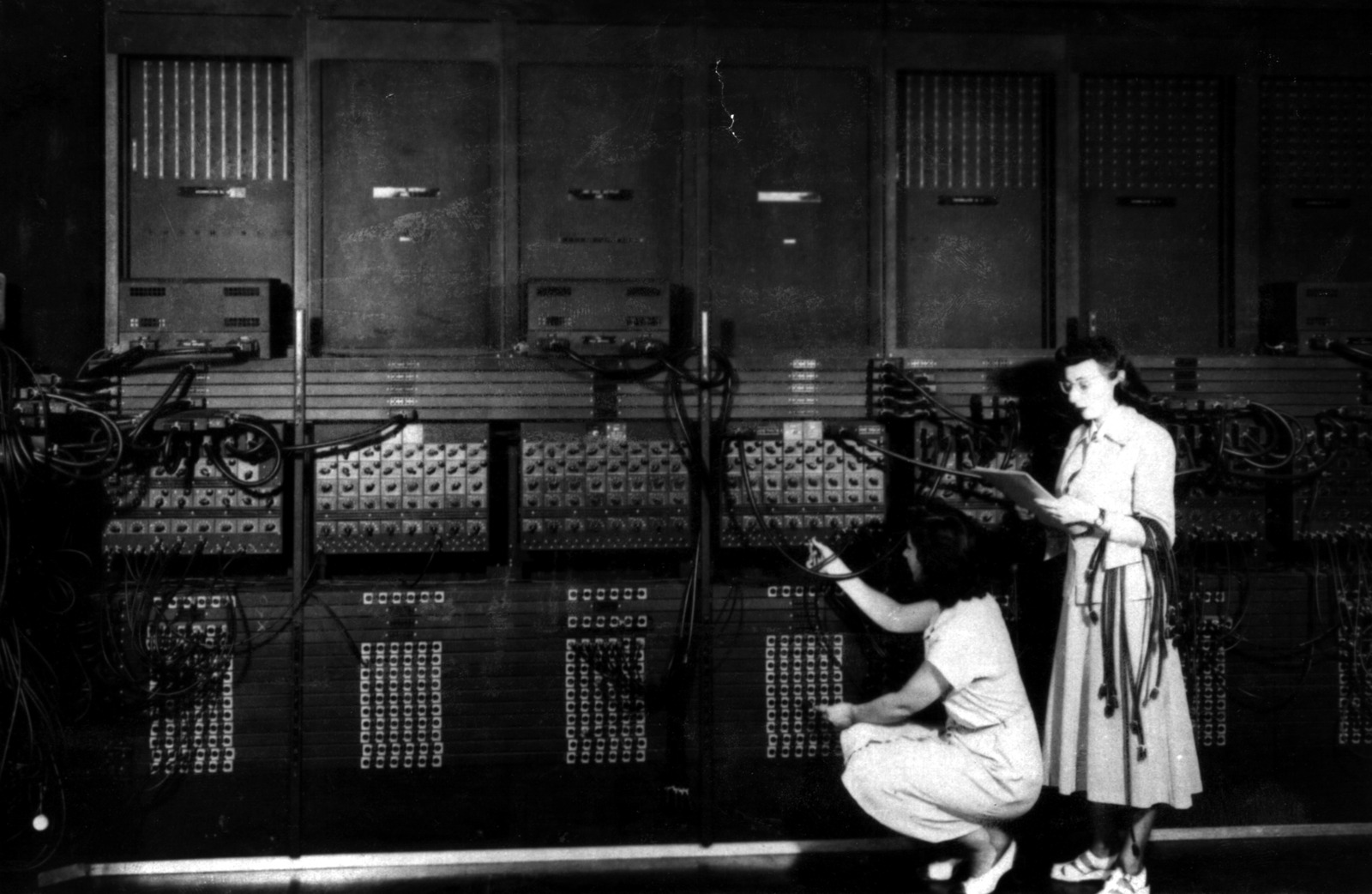

The actual academic approach is more like: Go fast, poke it with exploratory data analysis, and see what catches fire.

The Anatomy of an Academic Codebase

In a perfect world, we all adhere to the principle of writing “good code” - the mythical, self-explanatory script that requires not a single comment.

In the real world, an academic codebase survives on thousands of comments, a loosely correlated methods paper, and exactly 799 panicked little tweaks held together by silver tape and the glory of God. Yes, it works. It produces the plots. It gets the paper submitted.

But then the trap springs. You wait long months in peer-review purgatory, completely flushing the project from your working memory. Then Reviewer #2 emerges from the shadows, demanding answers to hyper-specific methodological questions.

You open the script. You stare at this strange, alien piece of code.

Who wrote this? you wonder. Oh right. I did.

From Grinding Code to Herding Agents

This brings me back to the GeoTOP hackathon and why, exactly, I approached a massive C++ architecture problem not with a compiler and a prayer, but with knowledge and a team of synthetic brains.

Here is the truth: I am rusty in C++. Somewhere along the line, my career shifted. I went from grinding code in the trenches to managing teams. My mental compiler for C++ is covered in cobwebs. But while my syntax memory has faded, my grasp of process and solid architecture hasn’t. I know how a system should be built, even if I no longer want to manually type out every pointer allocation.

So, when a colleague lost hope staring down the barrel of an impossible simulation timeline, I didn’t sit down to write C++. I sat down to manage.

The Real Loot Drop

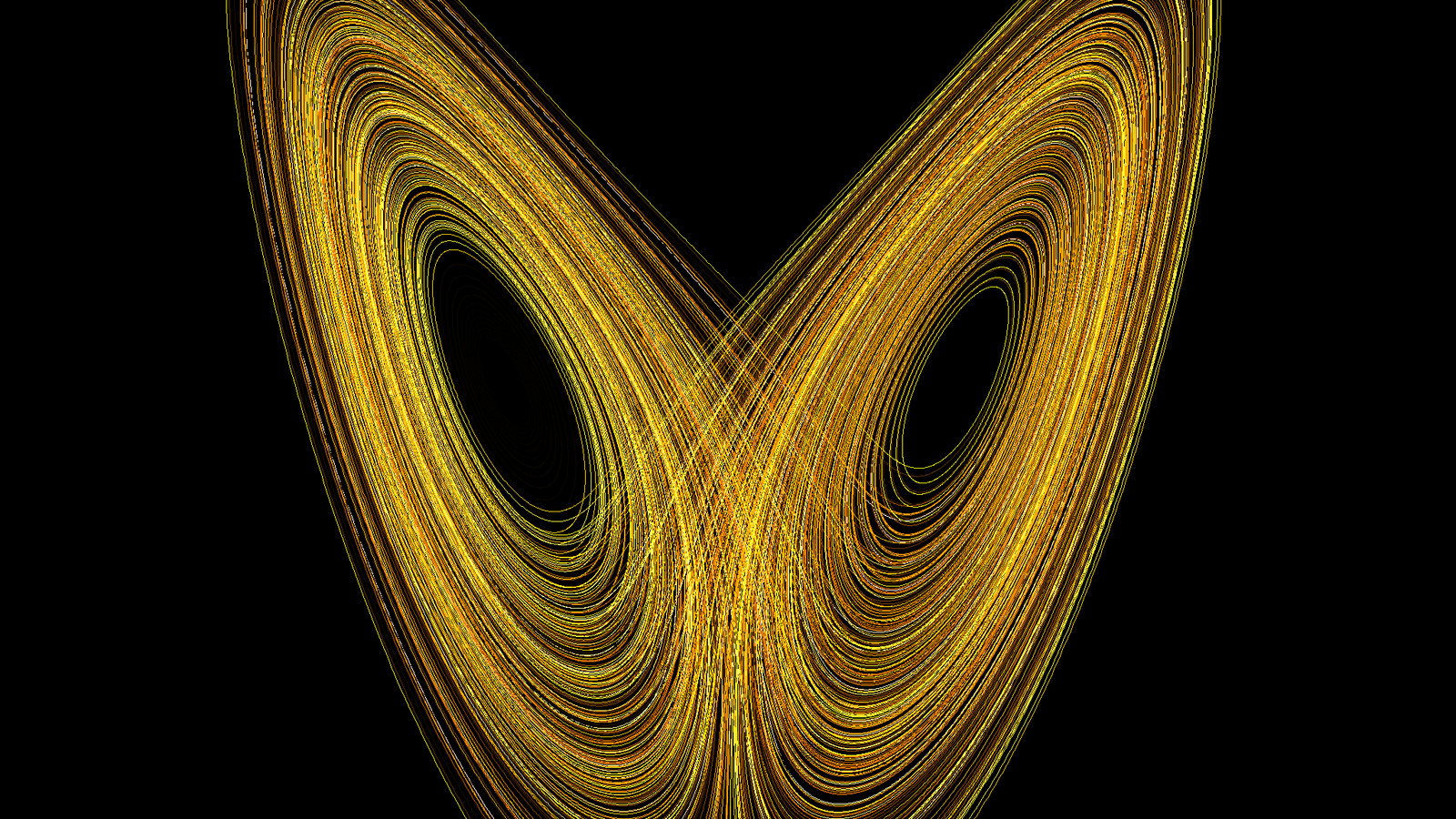

Honestly, though? It has been a genuinely cool side project. It kept my brain running at high efficiency between iterations of my own primary project. Having to digest a stack of interesting papers just to internalize the underlying hydrological algorithms turned out to be a surprisingly fun and enriching exercise. It was a chaotic detour, but a highly profitable one for the intellect. And remember what the proverb says: architecture first. So I had to get intimate with the flow (of work, of course, what else). For your enjoyment, here are the references:

References

- Bui, M. T., Lu, J., & Nie, L. (2020). A Review of Hydrological Models Applied in the Permafrost-Dominated Arctic Region. Geosciences, 10(10), 401. https://doi.org/10.3390/geosciences10100401

- Formetta, G., Capparelli, G., David, O., Green, T., & Rigon, R. (2016). Integration of a Three-Dimensional Process-Based Hydrological Model into the Object Modeling System. Water, 8(1), 12. https://doi.org/10.3390/w8010012

- Formetta, G., Simoni, S., Godt, J. W., Lu, N., & Rigon, R. (2016). Geomorphological control on variably saturated hillslope hydrology and slope instability. Water Resources Research, 52, 4590-4607. https://doi.org/10.1002/2015wr017626

Enter the AI Task Force

With not a second to spare, and considering that AI models like Claude are reportedly already writing about 4% of the code on GitHub anyway (projections say 20% by the end of 2026), why not speed things up? We are brute-forcing hope here, remember?

I didn’t just ask a chatbot to fix a script. I spun up a team of Claude agents with strict, unbending rules of engagement and an MCP server. I treated them exactly like a team of junior developers.

And here is the absolute most important rule I gave them: Explain yourself.

They had to explain every bug fix and every rewrite to me before a single line of code was committed. For one, I know how the process works and what solid architecture looks like, so I can judge the explanation. For another, the algorithm is the effort. And you can’t let the AI have all the fun.
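For the curious, the "explain yourself" rule boils down to a gate in front of the commit. The snippet below is only a minimal sketch of that idea, not the actual setup: `ProposedChange`, `ReviewGate`, and every field name are hypothetical, and the real thing ran through Claude agents and an MCP server rather than a toy Python class.

```python
from dataclasses import dataclass, field


@dataclass
class ProposedChange:
    """A change an agent wants to commit (hypothetical structure)."""
    agent: str
    diff: str
    explanation: str = ""
    approved: bool = False


@dataclass
class ReviewGate:
    """Holds changes back until a human has approved the explanation."""
    committed: list = field(default_factory=list)

    def approve(self, change: ProposedChange) -> None:
        # The reviewer can only approve a change that explains itself.
        if not change.explanation.strip():
            raise ValueError("No explanation, no approval.")
        change.approved = True

    def commit(self, change: ProposedChange) -> None:
        # Nothing lands without a prior approval.
        if not change.approved:
            raise PermissionError("Change was not approved by the reviewer.")
        self.committed.append(change)
```

The design choice is that approval and commit are two separate steps: an agent can propose as much as it likes, but the only path into `committed` runs through a human reading the explanation first.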

The result? We didn’t just get a faster model; we got a masterclass in applying modern architectural principles to legacy academic code, orchestrated by a rusty C++ programmer and executed by highly disciplined synthetic agents.

Sometimes, the best way to write code isn’t to write it at all. It’s to know exactly what questions to ask the entity doing the typing.

Current status:

- Silver tape: Still holding.

- God’s glory: Intact.

- AI Agents: Awaiting their next sprint.